December 11, 2024: 8 articles in total

Deep Predictive Coding Networks Partly Capture Neural Signatures of Short-Term Temporal Adaptation in Human Visual Cortex

Gradient Diffusion: Enhancing Multicompartmental Neuron Models for Gradient-Based Self-Tuning and Homeostatic Control

QuantFormer: Learning to Quantize for Neural Activity Forecasting in Mouse Visual Cortex

Primary Visual Cortex Contributes to Color Constancy by Predicting Rather than Discounting the Illuminant: Evidence from a Computational Study

Speaker Effects in Spoken Language Comprehension

Neural Mechanisms Underlying the Effects of Cognitive Fatigue on Physical Effort-Based Decision-Making

Reduced and Redundant: Information Processing of Prediction Errors During Sleep

Cell Types Associated with Human Brain Functional Connectomes and Their Implications in Psychiatric Diseases

[1]

Deep Predictive Coding Networks Partly Capture Neural Signatures of Short-Term Temporal Adaptation in Human Visual Cortex

Amber Marijn Brands, Paulo Ortiz, Iris Isabelle Anna Groen

Abstract

Predictive coding is a leading theory of cortical function, which holds that the brain continuously predicts incoming sensory stimuli using a hierarchical network of top-down and bottom-up connections. The theory is supported by previous work showing that PredNet, a deep learning network designed according to predictive coding principles, exhibits several signatures of neural responses commonly observed in primate visual cortex. However, one ubiquitous neural phenomenon has not yet been studied in this model: short-term visual adaptation, in which neural responses adjust over time under prolonged or directly repeated static visual input. Here, we examine whether PredNet exhibits two neural signatures of temporal adaptation observed in intracranial recordings from human participants viewing prolonged and repeated stimuli (Brands et al., 2024). We find that, like the human visual cortex, PredNet adapts to static images, as shown by subadditive temporal response summation: when stimulus duration is prolonged, response amplitude accumulates nonlinearly, a result of seemingly plausible transient and sustained dynamics in the activation time courses of the units. However, PredNet activations also show systematic responses to stimulus shifts, which are not present in the human neural data. For repeated stimuli, PredNet exhibits slight response suppression for any two images presented in rapid succession, but it does not show repetition suppression, in which responses to identical image pairs are reduced relative to responses to different images, an effect that is robustly observed across the human visual cortex. We show that these results are stable across multiple training datasets and two different types of loss calculation. Finally, we find a relationship between temporal adaptation and visual input properties in both PredNet and the neural data, suggesting that temporally sustained activity is enhanced for more complex scenes containing clutter.
In summary, these results suggest that the temporal dynamics observed in PredNet are only partially consistent with neural data and are associated with low-level properties of the visual input rather than with high-level predictions generated by top-down processes.

doi: https://doi.org/10.1101/2024.12.06.627148

[2]

Gradient Diffusion: Enhancing Multicompartmental Neuron Models for Gradient-Based Self-Tuning and Homeostatic Control

Lennart P. L. Landsmeer, Mario Negrello, Said Hamdioui, Christos Strydis

Abstract

Realistic brain models contain numerous parameters. Traditionally, gradient-free methods have been used to estimate these parameters, but gradient-based methods offer many advantages, including scalability. However, brain models are tied to existing brain simulators, which do not support gradient computation. Here, we demonstrate how to extend these neural models, using only the public interface of such simulators, so that they also compute gradients with respect to their own parameters. We show that the computed gradients can be used to optimize a biophysically realistic multicompartmental neuron model with the gradient-based Adam optimizer. Beyond parameter tuning, gradient-based optimization may pave the way for dynamic learning and homeostatic control within simulations.
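As a rough illustration of the idea in this abstract (not the authors' code and not any simulator's API), the sketch below extends a toy single-compartment passive membrane with one extra state variable that tracks the sensitivity dV/dg, then feeds the resulting gradient into a hand-written Adam loop to recover the leak conductance. The model, the names `simulate`, `loss_and_grad`, and `fit_adam`, and all parameter values are assumptions chosen for the example.

```python
import math

def simulate(g, steps=200, dt=0.1, C=1.0, E=-65.0, I=10.0):
    """Forward-Euler passive membrane: C dV/dt = -g (V - E) + I.

    Alongside V, integrate s = dV/dg as an extra state variable, so the
    model itself produces the gradient of its voltage trace w.r.t. g.
    """
    v, s = E, 0.0
    vs, ss = [], []
    for _ in range(steps):
        dv = (-g * (v - E) + I) / C
        ds = (-(v - E) - g * s) / C  # derivative of dv with respect to g
        v += dt * dv
        s += dt * ds
        vs.append(v)
        ss.append(s)
    return vs, ss

def loss_and_grad(g, target):
    """Mean squared error against a target trace, and its gradient in g."""
    vs, ss = simulate(g)
    n = len(vs)
    loss = sum((v - t) ** 2 for v, t in zip(vs, target)) / n
    grad = sum(2.0 * (v - t) * s for v, t, s in zip(vs, target, ss)) / n
    return loss, grad

def fit_adam(target, g0=0.5, lr=0.05, iters=500,
             b1=0.9, b2=0.999, eps=1e-8):
    """Plain Adam on a single scalar parameter (hypothetical settings)."""
    g, m, v2 = g0, 0.0, 0.0
    for t in range(1, iters + 1):
        _, grad = loss_and_grad(g, target)
        m = b1 * m + (1 - b1) * grad
        v2 = b2 * v2 + (1 - b2) * grad * grad
        m_hat = m / (1 - b1 ** t)    # bias-corrected first moment
        v_hat = v2 / (1 - b2 ** t)   # bias-corrected second moment
        g -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return g

# Synthetic target generated with a known conductance (assumed value).
G_TRUE = 1.0
target_trace, _ = simulate(G_TRUE)
fitted_g = fit_adam(target_trace)
print(f"true g = {G_TRUE}, fitted g = {fitted_g:.4f}")
```

Because the sensitivity is integrated with the same Euler step as the voltage, the gradient is exact for the discretized model; a realistic multicompartmental model would need one such companion state per state variable and parameter.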

https://arxiv.org/pdf/2412.07327

[3]

QuantFormer: Learning to Quantize for Neural Activity Forecasting in Mouse Visual Cortex

Salvatore Calcagno, Isaak Kavasidis, Simone Palazzo, Marco Brondi, Luca Sità, Giacomo Turri, Daniela Giordano, Vladimir R. Kostic, Tommaso Fellin, Massimiliano Pontil, Concetto Spampinato

Abstract

Understanding complex animal behavior depends on interpreting neural activity patterns within brain circuits, which makes the ability to predict neural activity crucial for building forecasting models of brain dynamics. This ability holds significant value in neuroscience, especially in applications such as real-time optogenetic interventions. Traditional encoding and decoding methods map external variables to neural activity and vice versa, but they focus on interpreting past data. In contrast, neural forecasting aims to predict future neural activity, a distinct and challenging task because neural signals are spatiotemporally sparse and have complex dependencies. Existing transformer-based forecasting methods, while effective in many domains, struggle to capture these properties. To address this challenge, we introduce QuantFormer, a transformer-based model specifically designed for predicting neural activity from two-photon calcium imaging data. Unlike traditional regression-based methods, QuantFormer reformulates the prediction task as a classification problem through dynamic signal quantization, enabling it to learn sparse neural activation patterns more effectively. Furthermore, QuantFormer handles multivariate signals from an arbitrary number of neurons by incorporating neuron-specific labels, allowing it to scale across different neuronal populations. Trained with unsupervised quantization on the Allen dataset, QuantFormer sets new benchmarks for predicting mouse visual cortex activity, demonstrating robust performance and good generalization across stimuli and individuals, and paving the way for foundation models of neural signal prediction.
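To make the "regression as classification" idea concrete, here is a minimal sketch that maps a sparse trace to integer class labels with fixed uniform bins and maps the labels back to bin centers. This is a stand-in only: the abstract describes a dynamic, learned quantization, whereas the bin edges, the function names `quantize` and `dequantize`, and the example trace below are all assumptions.

```python
def quantize(signal, n_bins, lo, hi):
    """Map each sample to an integer class in [0, n_bins - 1] (uniform bins)."""
    width = (hi - lo) / n_bins
    labels = []
    for x in signal:
        k = int((x - lo) / width)
        labels.append(min(max(k, 0), n_bins - 1))  # clamp out-of-range samples
    return labels

def dequantize(labels, n_bins, lo, hi):
    """Map class labels back to the center value of their bin."""
    width = (hi - lo) / n_bins
    return [lo + (k + 0.5) * width for k in labels]

# A made-up sparse, calcium-like trace: mostly near zero with one transient.
trace = [0.0, 0.02, 0.0, 0.9, 0.45, 0.1, 0.0, 0.0]
labels = quantize(trace, n_bins=4, lo=0.0, hi=1.0)
recon = dequantize(labels, n_bins=4, lo=0.0, hi=1.0)
print(labels)
print(recon)
```

A classifier trained on such labels predicts a bin index per time step rather than a real value. With uniform bins, the reconstruction error for in-range samples is bounded by half the bin width.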

https://arxiv.org/pdf/2412.07264

[4]

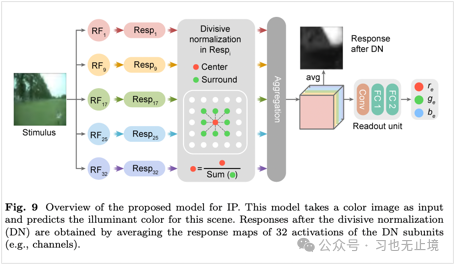

Primary Visual Cortex Contributes to Color Constancy by Predicting Rather than Discounting the Illuminant: Evidence from a Computational Study

Shaobing Gao, Yongjie Li

Abstract

Color constancy (CC) is an important ability of the human visual system, which allows stable perception of object colors even when the color of the illuminating light varies significantly. Although increasing evidence from neuroscience suggests that multiple levels of the visual system contribute to color constancy, the role of the primary visual cortex (V1) is not yet fully understood. Specifically, double-opponent (DO) neurons in V1 are believed to contribute to some degree of color constancy, but the computational mechanisms remain unclear. We constructed a neurophysiological model of V1 trained on natural image datasets, using the real light sources as labels so that the model learns the color of the illuminant. Through qualitative and quantitative analysis of the response characteristics of the learned model's neurons, we found that the spatial structure of their receptive fields and their color weights closely resemble those recorded from simple and double-opponent neurons in V1. From a computational perspective, DO cells in V1 predict the illuminant more robustly than simple cells. This work therefore provides computational evidence that DO neurons in V1 achieve color constancy by encoding the illuminant, which contradicts the assumption that V1 supports color constancy by discounting the illuminant through its double-opponent cells. This evidence is expected not only to help elucidate the visual mechanisms of color constancy but also to inspire the development of more effective computer vision models.

https://arxiv.org/pdf/2412.07102

[5]

Speaker Effects in Spoken Language Comprehension

Hanlin Wu, Zhenguang G. Cai

Abstract

The identity of the speaker significantly influences spoken language comprehension by affecting perception and expectation. This review explores speaker effects, focusing on how speaker information impacts language processing. We propose an integrative model characterized by the interaction between a perception-driven bottom-up process guided by acoustic details and an expectation-driven top-down process guided by a speaker model. Acoustic details affect lower-level perception, while the speaker model modulates both lower and higher-level processes (such as meaning interpretation and pragmatic reasoning). We define speaker specificity and speaker demographic effects and demonstrate how bottom-up and top-down processes interact at various levels in different contexts. This framework contributes to psycholinguistic theory by comprehensively elucidating how speaker information interacts with language content to construct discourse. We argue that speaker effects can serve as indicators of language learners’ proficiency and individual social cognitive characteristics. We encourage future research to extend these findings to AI speakers, exploring the universality of speaker effects in human and AI subjects.

https://arxiv.org/pdf/2412.07238

[6]

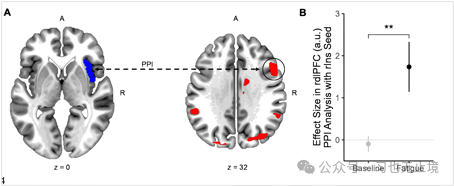

Neural Mechanisms Underlying the Effects of Cognitive Fatigue on Physical Effort-Based Decision-Making

Michael Dryzer, Vikram S. Chib

Abstract

Fatigue is a state of exhaustion that affects our willingness to engage in effortful work. While both physical and cognitive activities can lead to fatigue, understanding how fatigue in one domain (e.g., cognitive) influences decisions in another domain (e.g., physical) is limited. We used functional magnetic resonance imaging (fMRI) to measure brain activity while human participants made decisions about anticipated physical activities before and after engaging in a cognitively fatiguing working memory task. Using computational modeling of choice behavior, we show that cognitive activity increases the subjective cost of physical activity for participants compared to baseline resting states. We describe how signals associated with dorsolateral prefrontal cortex fatigue from cognitive activity influence the calculation of physical activity values instantiated in the insula, thereby increasing individuals’ subjective evaluations of anticipated physical activities during cognitive fatigue. Our findings support the idea of a general fatigue signal that integrates effort-specific information to guide effort-based choices.

doi: https://doi.org/10.1101/2024.12.06.627274

[7]

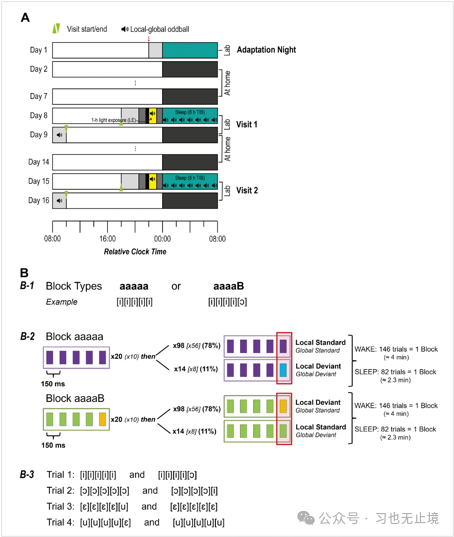

Reduced and Redundant: Information Processing of Prediction Errors During Sleep

Christine Blume, Marina Dauphin, Maria Niedernhuber, Manuel Spitschan, Martin P Meyer, Christian Cajochen, Tristan Bekinschtein, Andres Canales-Johnson

Abstract

During sleep, the human brain transitions to a “sentinel processing mode,” continuing to process environmental stimuli even without consciousness. Extending previous research, we combine advanced information-theoretic analyses, including mutual information (MI) and co-information (co-I), with event-related potentials (ERP) and temporal generalization analysis (TGA) to describe auditory prediction error processing during wakefulness and sleep. We hypothesize that shared neural codes emerge across sleep stages, with deep sleep associated with reduced information content and increased information redundancy. To investigate this, 29 healthy young participants were exposed to an auditory “local-global” oddball paradigm during wakefulness and then throughout 8 hours of sleep monitored by polysomnography. We focus on the mismatch response to a fifth tone that deviates from four standard tones. ERP analysis indicates that prediction error processing continues across all sleep stages (N1-N3, REM). Mutual information analysis reveals a significant reduction in the amount of prediction error information encoded during sleep, even though ERP amplitude increases as NREM sleep deepens. Furthermore, co-information analysis indicates that neural dynamics become increasingly redundant with deeper sleep. Temporal generalization analysis shows a largely shared neural code between N2 and N3 sleep, although the code differs between wakefulness and sleep. Here, we demonstrate how the neural code of the “sentinel processing mode” changes from wakefulness to light sleep, deep sleep, and REM, characterized by increased redundancy and reduced richness of neural information as consciousness wanes. This altered stimulus processing reveals how neural information changes with shifts in states of consciousness as we traverse the night.
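For readers unfamiliar with mutual information, the sketch below computes a simple plug-in MI estimate (in bits) between a discrete stimulus label, such as standard versus deviant, and a discretized response. It is a generic textbook estimator, not the estimator used in the study, and the function name `mutual_information` and the toy data are assumptions.

```python
import math
from collections import Counter

def mutual_information(xs, ys):
    """Plug-in estimate of I(X; Y) in bits from paired discrete samples."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px = Counter(xs)
    py = Counter(ys)
    mi = 0.0
    for (x, y), c in joint.items():
        # p(x, y) / (p(x) p(y)) simplifies to c * n / (count_x * count_y)
        mi += (c / n) * math.log2(c * n / (px[x] * py[y]))
    return mi

# Toy data: 0 = standard tone, 1 = deviant tone; responses binned as 0 / 1.
stimulus = [0, 0, 1, 1]
informative_response = [0, 0, 1, 1]    # response fully determined by stimulus
uninformative_response = [0, 1, 0, 1]  # response independent of stimulus

mi_high = mutual_information(stimulus, informative_response)
mi_zero = mutual_information(stimulus, uninformative_response)
print(mi_high, mi_zero)
```

In this framing, a lower MI during sleep would mean that the recorded response tells us less about whether a tone was a standard or a deviant, regardless of how large the response amplitude is.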

doi: https://doi.org/10.1101/2024.12.06.627143

[8]

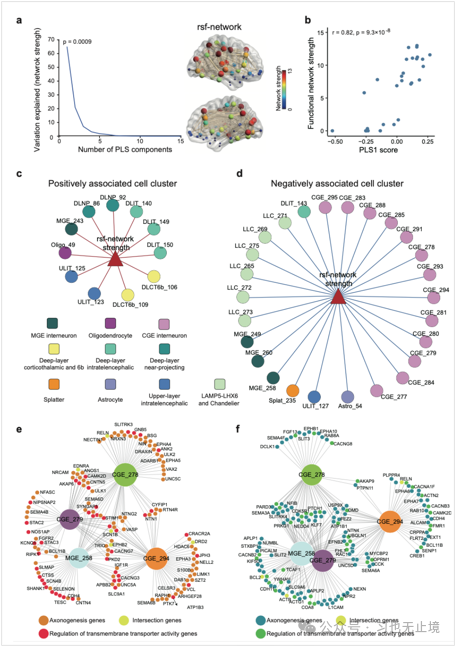

Cell Types Associated with Human Brain Functional Connectomes and Their Implications in Psychiatric Diseases

Pengxing Nie, Yafeng Zhan, Renrui Chen, Ruicheng Qi, Cirong Liu, Guang-Zhong Wang

Abstract

Cell types are the foundation of functional organization in the human brain, but the specific cellular clusters underlying functional connectomes remain unclear. Using human whole-brain single-cell RNA sequencing data, we investigated the relationship between the cortical distribution of cellular clusters and functional connectomes. Our analysis identified dozens of cellular clusters significantly associated with resting-state network connectivity, with excitatory neurons primarily driving positive correlations and inhibitory neurons driving negative correlations. Many of these cellular clusters are also conserved in macaques. Notably, functional network connectivity can be predicted from cellular communication between these clusters. We further identified cellular clusters associated with various neuropsychiatric diseases, with several clusters involved in multiple conditions. Comparative analysis of schizophrenia and autism spectrum disorder reveals different expression patterns, highlighting disease-specific cellular mechanisms. These findings emphasize the critical role of specific cellular clusters in shaping functional connectomes and their implications for neuropsychiatric diseases.

doi: https://doi.org/10.1101/2024.12.11.627878
