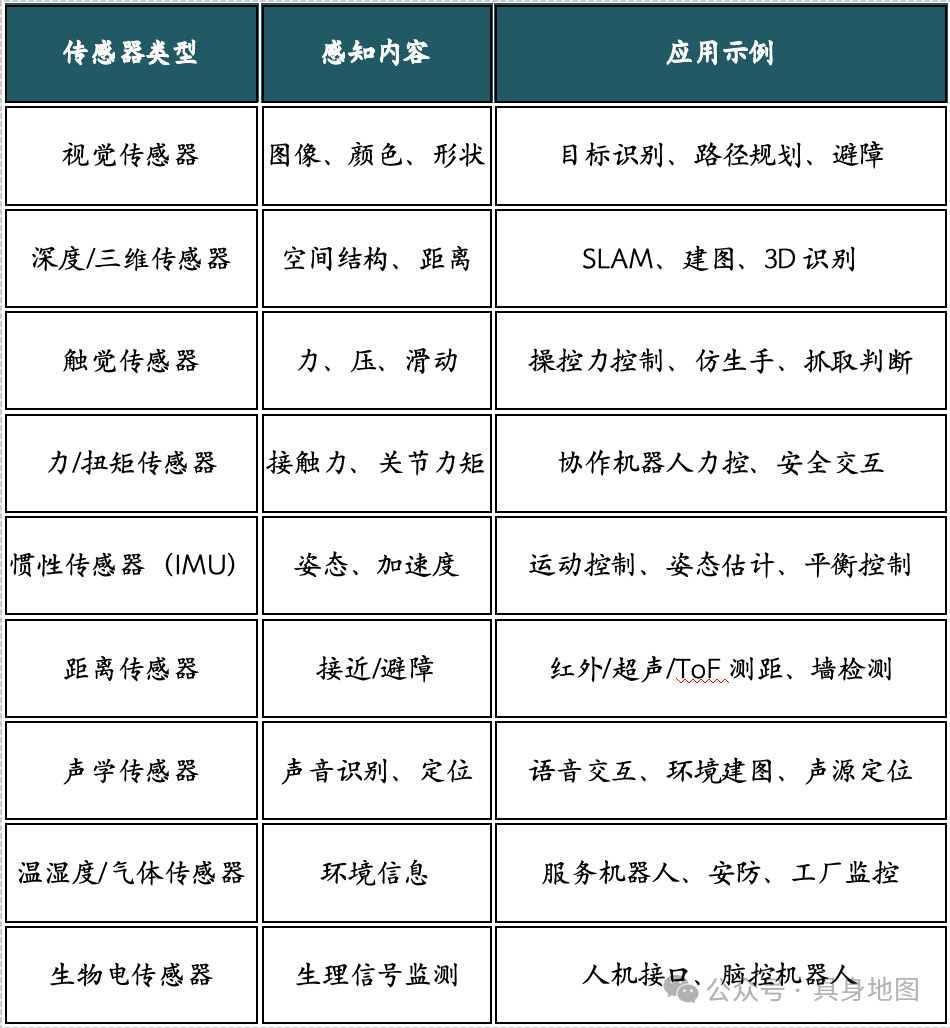

With the rapid development of artificial intelligence and robotics, robots are gradually evolving from “execution tools” into “perception and decision-making entities.” To complete tasks in complex, dynamic environments, robots rely on precise perception of the external world. Sensors serve as the bridge between robots and their environment.

From simple infrared obstacle avoidance to complex LiDAR mapping, from force feedback in robotic arms to visual recognition in humanoid robots, sensor systems play a core role in the “vision, hearing, touch, and smell” of robots.

This article outlines the common sensor types, structural principles, application scenarios, and representative companies in robotic perception systems, and briefly discusses their development trends and challenges.

1. Classification of Sensors

Based on the perceived objects and the types of physical signals involved, robotic sensors can be roughly classified into the following categories:

Figure: Classification of Robot Sensors (Source: Embodied Map)

2. Introduction to Major Sensors

1. Visual Sensors

Visual sensors (cameras) are key components for robots to acquire image information. They typically consist of lenses, image sensor chips (CCD or CMOS), and image processing circuits.

The lens collects light, which forms a charge distribution on the image sensor; this is then converted into digital image data through analog-to-digital conversion and signal processing. Visual sensors can identify features such as color, shape, position, and motion trajectory, serving as the foundation for tasks such as object recognition, navigation and obstacle avoidance, pose estimation, face recognition, and scene understanding.

Visual sensors have been widely deployed in service robots, industrial robots, autonomous driving, and security inspection.

Representative companies include Sony (global leader in CMOS image sensors), Basler (industrial cameras), Hikvision, Orbbec (structured light/ToF vision), and Intel (RealSense depth cameras).
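The analog-to-digital conversion step described for visual sensors can be illustrated with a minimal sketch. It assumes an idealized linear converter; the function name `quantize_to_pixel`, the 1.0 V full-scale voltage, and the 8-bit depth are illustrative assumptions, not values from any particular image sensor.

```python
def quantize_to_pixel(voltage_v: float, full_scale_v: float = 1.0, bits: int = 8) -> int:
    """Map an analog pixel voltage to an unsigned integer code (idealized ADC).

    full_scale_v and bits are hypothetical example values.
    """
    if full_scale_v <= 0 or bits <= 0:
        raise ValueError("full_scale_v and bits must be positive")
    max_code = (1 << bits) - 1
    # Clamp to the converter's input range, then scale and round to nearest code.
    clamped = min(max(voltage_v, 0.0), full_scale_v)
    return int(clamped / full_scale_v * max_code + 0.5)


# One row of hypothetical pixel voltages; the last value saturates at the top code.
row_voltages = [0.0, 0.25, 0.5, 1.0, 1.2]
print([quantize_to_pixel(v) for v in row_voltages])
```

Real sensors add noise, gain, and black-level offsets before this step, so the sketch only shows the quantization itself.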


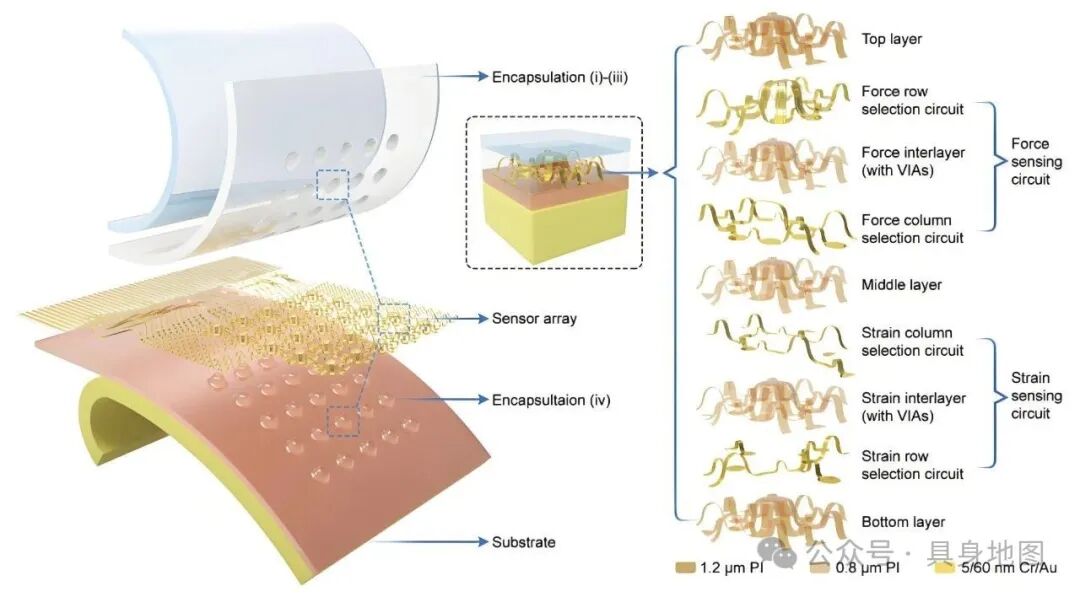

Figure: Visual Parameters of Yushu Robot (Source: Yushu Technology Official Website)

2. Depth and 3D Sensors

Depth and 3D sensors (such as ToF, structured light, and LiDAR) obtain spatial distance information between robots and their environment, helping robots perceive the three-dimensional world.

ToF (Time of Flight) sensors calculate depth by emitting light pulses and measuring their return time; they typically consist of an emission module, a receiving module, and a signal processing unit. Structured light sensors project preset patterns onto object surfaces and infer distance from how the captured patterns are deformed. LiDAR scans the surrounding environment with lasers to obtain point cloud data and construct three-dimensional maps, offering higher precision and longer range.

These sensors are widely used in autonomous navigation, SLAM mapping, obstacle avoidance planning, 3D recognition, and spatial positioning, and have become core perception devices in service robots, warehouse logistics robots, autonomous mobile robots (AMR), and autonomous driving systems.

Representative companies include Velodyne, Ouster, Hesai Technology, and SUTENG (LiDAR); Orbbec and Apple (structured light); and Infineon and STMicroelectronics (ToF).
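The time-of-flight principle can be written as a one-line calculation: the pulse travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below assumes a direct (pulsed) ToF measurement in air or vacuum; the function name `tof_distance_m` is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to the target from the measured round-trip time of a light pulse."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the sensor-to-target distance twice, hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# A hypothetical 20 ns round trip corresponds to a target roughly 3 m away.
print(f"{tof_distance_m(20e-9):.3f} m")
```

The example also shows why ToF hardware needs very fine timing: a 1 ns timing error already shifts the result by about 15 cm.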
3. Tactile Sensors

Tactile sensors are key components that simulate the sense of touch of human skin. They typically consist of a sensitive layer (for pressure/shear perception), an electrode layer (for signal acquisition), and a flexible substrate layer, sometimes with additional packaging and adhesive layers to enhance stability and wearability.

Their operation is mainly based on resistance changes (piezoresistive), capacitance changes (capacitive), piezoelectric effects, or triboelectric effects, converting mechanical stimuli such as contact, pressure, and vibration into electrical signals.

In robots, tactile sensors are widely used in flexible grasping, bionic hand perception, collaborative robot force control, and safe human-robot interaction, enhancing the robot’s sensitivity to, and feedback from, the physical environment.

Representative companies and research institutions include Stanford University (electronic skin), Seoul National University, Xenoma, Titan Deep Technology, and PaxiNi. In recent years, several domestic and international startups have also focused on developing high-resolution flexible tactile arrays and integrated solutions.
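As an illustration of the piezoresistive principle mentioned for tactile sensors, the sketch below recovers the resistance of a single sensing element from a voltage reading. It assumes the element is wired in series with a fixed resistor as a voltage divider, with the voltage measured across the fixed resistor, and that resistance drops under pressure. The supply voltage, resistor value, and touch threshold are hypothetical values chosen for the example.

```python
def sensor_resistance_ohm(v_out: float, v_supply: float = 3.3,
                          r_fixed_ohm: float = 10_000.0) -> float:
    """Solve V_out = V_supply * R_fixed / (R_fixed + R_sensor) for R_sensor."""
    if not 0.0 < v_out < v_supply:
        raise ValueError("v_out must lie strictly between 0 and v_supply")
    return r_fixed_ohm * (v_supply - v_out) / v_out


def is_touched(v_out: float, threshold_ohm: float = 20_000.0) -> bool:
    """Report contact when the element's resistance falls below a threshold.

    Assumes resistance decreases with pressure; threshold_ohm is hypothetical.
    """
    return sensor_resistance_ohm(v_out) < threshold_ohm


# Hypothetical readings: a light idle voltage and a firmly pressed one.
for reading_v in (0.3, 1.65):
    print(reading_v, round(sensor_resistance_ohm(reading_v)), is_touched(reading_v))
```

A tactile array repeats this readout per element, usually through row and column multiplexing, and would replace the fixed threshold with a calibrated pressure curve.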

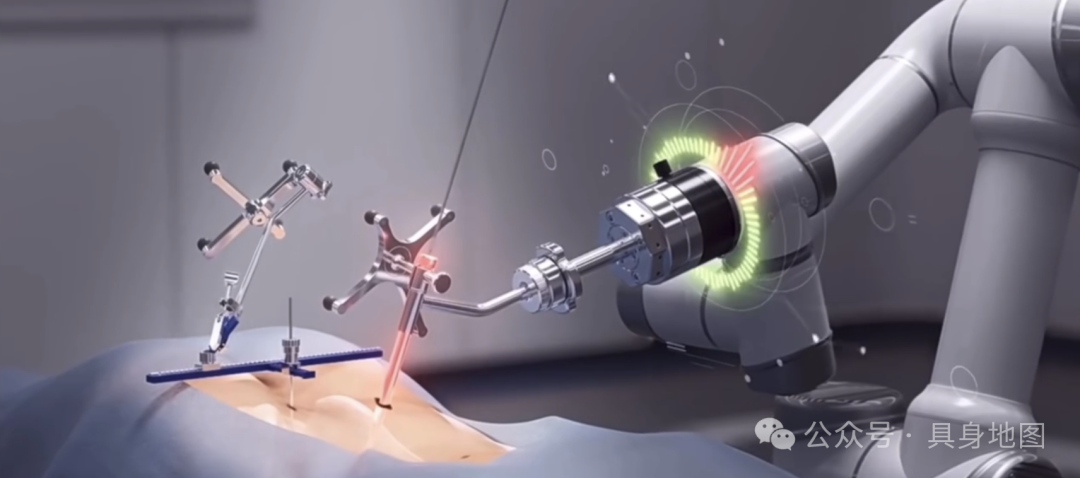

Figure: 3DAE-Skin Structure from Zhang Yihui’s Research Group (Source: Internet)

4. Force/Torque Sensors

Force/torque sensors measure the forces and torques acting on each joint or end effector of the robot, serving as core components for high-precision force control, grasp stability, collision detection, and human-robot collaboration.

Their structure typically includes elastic elements (such as metal structural components), strain-sensing elements (such as strain gauges), and signal conditioning and output modules. The working principle is generally based on strain gauges, resistance bridges, or optical fibers: the sensor senses the loading at the robot’s joints or end effector and calculates the magnitude and direction of the applied force or torque.

These sensors are widely used in collaborative robots, industrial robotic arms, surgical robots, and rehabilitation devices, and are indispensable in tasks requiring fine control and human collaboration.

Representative companies include ATI Industrial Automation (a global leader in multi-axis force/torque sensor manufacturing), OnRobot, Bionics, Mecademic, and Futek. ATI’s products are widely used with mainstream robot brands such as ABB, KUKA, and Universal Robots.

Figure: End Effector Force Sensing (Source: Kunwei Technology Official Website)
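The strain-gauge and resistance-bridge principle behind force/torque sensors can be sketched in two small steps: converting a bridge output voltage into strain, and turning measured force components into a magnitude and direction. The sketch assumes a quarter-bridge configuration and the small-strain approximation V_out ≈ V_ex · GF · ε / 4; the gauge factor of 2.0 and the 5 V excitation are typical illustrative values, not figures from any product named above.

```python
import math


def strain_from_bridge(output_v: float, gauge_factor: float = 2.0,
                       excitation_v: float = 5.0) -> float:
    """Invert the small-strain quarter-bridge relation V_out = V_ex * GF * strain / 4."""
    return 4.0 * output_v / (gauge_factor * excitation_v)


def force_magnitude_and_direction(fx: float, fy: float, fz: float):
    """Return the force magnitude and its unit direction vector."""
    magnitude = math.sqrt(fx * fx + fy * fy + fz * fz)
    if magnitude == 0.0:
        return 0.0, (0.0, 0.0, 0.0)
    return magnitude, (fx / magnitude, fy / magnitude, fz / magnitude)


# A hypothetical 2.5 mV bridge output, then a hypothetical force reading in newtons.
print(strain_from_bridge(2.5e-3))
print(force_magnitude_and_direction(3.0, 4.0, 0.0))
```

Commercial multi-axis sensors typically combine several gauge signals through a calibration matrix to obtain the force and torque components; that step is omitted here.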
5. Inertial Measurement Unit (IMU)

An IMU (Inertial Measurement Unit) is an integrated sensor system, typically consisting of a three-axis gyroscope and a three-axis accelerometer (some high-end models also include a magnetometer), used to measure an object’s angular velocity, acceleration, and attitude changes in three-dimensional space.

The technical principle is based on MEMS (Micro-Electro-Mechanical Systems) devices: the accelerometer detects the displacement of a proof mass under acceleration, the gyroscope detects the motion of a vibrating mass caused by the Coriolis force during rotation, and both convert these into electrical signals for attitude estimation and motion trajectory tracking.

IMUs are widely used in robots as key perception modules for navigation and positioning, attitude control, motion planning, and stability maintenance. In mobile robots, drones, biped robots, and self-balancing systems in particular, IMUs can be combined with vision or GPS to build inertial navigation systems.

Representative companies include Bosch Sensortec, STMicroelectronics (ST), Invensense (TDK), Analog Devices (ADI), Beike Tianhui, and Ruilei Wisdom.
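One common way to turn raw gyroscope and accelerometer readings into an attitude estimate is a complementary filter. The article does not name a specific algorithm, so the sketch below is only one illustrative option. It estimates pitch alone and assumes the pitch rate is the gyroscope's y-axis reading, the accelerometer mostly measures gravity, and the sign convention is pitch = atan2(-ax, sqrt(ay² + az²)). The blend factor `alpha` is a hypothetical tuning value.

```python
import math


def accel_pitch_rad(ax: float, ay: float, az: float) -> float:
    """Pitch implied by the gravity direction (valid when other accelerations are small)."""
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_filter(pitch_prev_rad: float, gyro_rate_y_rad_s: float,
                         accel_xyz: tuple, dt_s: float, alpha: float = 0.98) -> float:
    """Blend integrated gyro rate (smooth, but drifts) with accelerometer pitch (noisy, drift-free)."""
    gyro_pitch = pitch_prev_rad + gyro_rate_y_rad_s * dt_s
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch_rad(*accel_xyz)


# A stationary, level sensor with a wrong initial estimate of 0.1 rad:
# the accelerometer term gradually pulls the estimate back toward zero.
pitch = 0.1
for _ in range(500):
    pitch = complementary_filter(pitch, 0.0, (0.0, 0.0, 9.81), dt_s=0.01)
print(pitch)
```

A full attitude estimator would track roll and yaw as well, and systems fusing IMU data with vision or GPS more often use a Kalman-type filter.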
6. Distance/Obstacle Sensors

Distance/obstacle sensors are the fundamental perception modules for safe movement and path planning; common types include infrared, ultrasonic, LiDAR, and ToF depth sensors.

Their basic structure includes emitters, receivers, and signal processing units. They calculate the distance between the robot and an obstacle by measuring the time difference or phase difference between the emitted signal (light or sound waves) and its reflection; some solutions can also achieve target recognition and dynamic tracking.

These sensors are widely used in service robots, robot vacuum cleaners, AGVs/AMRs (automated guided vehicles and autonomous mobile robots), and collaborative robots, enabling autonomous obstacle avoidance, path correction, and boundary recognition.

Representative companies include Hokuyo, SICK, SUTENG, and Hesai Technology (LiDAR); Huawei and Orbbec (ToF); and MaxBotix and Ultrasonic Sensors Inc. (ultrasonic).

7. Acoustic Sensors and Voice Perception Systems

Acoustic sensors and voice perception systems are the core components for robot hearing and human-robot voice interaction. They mainly consist of microphone arrays, signal processing chips, and voice recognition/noise reduction algorithm modules.

Acoustic sensors collect sound signals from the environment through MEMS microphones and combine beamforming, sound source localization, and voice recognition algorithms to achieve functions such as voice command recognition, sound source tracking, and environmental sound perception.
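Sound source localization with a microphone array can be illustrated in its simplest form: two microphones and the time difference of arrival (TDOA) between them. The sketch assumes a far-field source (a plane wavefront), a known microphone spacing, and a speed of sound of about 343 m/s in air at room temperature. The function name and the example values are illustrative.

```python
import math

SPEED_OF_SOUND_M_PER_S = 343.0  # approximate value in air at about 20 °C


def direction_of_arrival_deg(delay_s: float, mic_spacing_m: float,
                             speed_m_per_s: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Angle of the source relative to the array's broadside, from the inter-microphone delay."""
    if mic_spacing_m <= 0:
        raise ValueError("mic_spacing_m must be positive")
    # Far-field geometry: path difference = spacing * sin(angle).
    ratio = speed_m_per_s * delay_s / mic_spacing_m
    # Measurement noise can push the ratio slightly outside [-1, 1]; clamp before asin.
    ratio = max(-1.0, min(1.0, ratio))
    return math.degrees(math.asin(ratio))


# A hypothetical 10 cm microphone pair with a measured delay of about 146 microseconds.
print(direction_of_arrival_deg(146e-6, 0.10))
```

In practice the delay itself is estimated by cross-correlating the two microphone signals, and larger arrays combine several such pairs or use beamforming to scan directions.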
Some advanced systems can also perform voiceprint recognition, emotion analysis, and complex voice interactions.

These systems are widely used in service robots, companion robots, smart speakers, intelligent customer service robots, and educational and entertainment robots, significantly enhancing natural language interaction and human-robot integration.

Representative companies include iFlytek, Sibo, Baidu, Google, Amazon (Alexa system), Knowles, and GoerTek, covering the complete industry chain from microphone hardware to entire voice interaction systems.

8. Environmental Sensors (Temperature, Humidity, Gas, Light)

Environmental sensors detect environmental parameters around the robot; common types include temperature and humidity sensors, gas sensors, light sensors, and pressure sensors.

These sensors typically consist of sensitive elements (such as semiconductor materials, optoelectronic elements, and chemically sensitive films) and signal conversion circuits. They convert environmental factors into electrical signals through physical or chemical changes, enabling real-time monitoring of temperature, humidity, harmful gas concentrations, and light intensity.

Environmental sensors are widely used in agricultural robots, environmental monitoring robots, industrial inspection robots, and smart home robots, helping robots perceive environmental changes and respond with adaptive adjustments or warnings.

Representative companies include Honeywell, Bosch Sensortec, AMS, Figaro, Sensirion, and Huawei HiSilicon, covering multiple application levels from basic environmental perception to complex environmental analysis.

3. Development Trends

1. Clear Trend of Multimodal Fusion

Robotic perception systems are developing rapidly toward multimodal sensor fusion. By integrating data from sensors such as vision, touch, voice, force control, and inertial measurement units (IMU), robots can achieve a multidimensional understanding of the environment and the task, significantly enhancing semantic recognition, decision-making, and adaptability to complex tasks.

For example, research teams from Stanford University and MIT have achieved precise grasping and manipulation of unknown objects by fusing visual and tactile sensor data through deep learning.

2. Rise of Flexible and Bionic Sensors

With advances in flexible electronic materials, nanotechnology, and microstructure design, flexible sensors and electronic skin are gradually becoming the key underlying platform for the next generation of humanoid and bionic robots. These sensors offer high sensitivity and stretchability, can be made self-healing, and simulate the tactile, temperature, and pressure perception of human skin.

For example, the self-healing electronic skin developed by Seoul National University based on two-dimensional materials has achieved a mechanical performance recovery rate of over 90%.

3. AI Perception Collaboration and Edge Computing

The deep integration of robotic sensors with AI algorithms, especially running together on edge computing platforms, is becoming a key path to improving the real-time performance and intelligence of perception systems.

Edge computing chips such as the NVIDIA Jetson series, Google Edge TPU, and Cambricon chips can complete complex sensor data preprocessing, feature extraction, and model inference locally. This reduces data transmission delays and the risk of privacy leakage, and supports rapid response and decision-making in dynamic, complex environments.

For robots to truly “see, hear, feel, and understand the world,” deep integration and intelligent processing of various sensors are essential. With continuous breakthroughs in flexible electronics, AI chips, and integration processes, the next generation of intelligent robots will be equipped with more powerful “artificial sensory systems.” From manufacturing and logistics to healthcare and the home, robotic perception systems will become a key technological track and innovation engine.
