© Author | Wen Jiaxin

Affiliation | Master’s Student at Tsinghua University

In what form should large models reason? Is natural language the best way to represent a reasoning path?

In September 2024, OpenAI sounded the horn for a reasoning revolution with the o1 model, pushing past expectations with strikingly long chains of thought. Chinese teams rose quickly in this wave: DeepSeek R1 replicated o1-level performance at an extremely low training cost, sparking global discussion. Yet behind these exciting results, the two questions above remain unanswered.

In fact, the research team behind this article had been discussing these questions before the current wave of reasoning models arrived. Natural-language reasoning has dominated the construction of reasoning models since the inception of chain-of-thought, but it has inherent flaws that cannot be ignored: systematic issues such as logical breaks, focus shifts, and redundant repetition often occur during reasoning. The model resembles a knowledgeable student who lacks systematic training: abundant knowledge, insufficient logic.

The research team attributes these issues to the dual nature of natural language: it is free and flexible in expression, but poorly suited to conveying rigorous, structured thinking. More fundamentally, the reasoning structures embedded in text are often buried beneath the redundant expressions of natural language, so these implicit logical patterns are difficult for models to capture and reuse. The problem is even more severe for models with smaller parameter counts.

To address it, the research team proposed the CodePlan method at ICLR 2025. The framework introduces “Code-Form Planning” into the reasoning process: the model first thinks with “programming thinking”, then expresses the result in natural language. Thanks to the rigor of programming languages, a code-form plan can precisely lay out a reasoning blueprint with conditional branches, loop iterations, function calls, and so on, akin to equipping large models with a logically rigorous “operating system”.

Interestingly, because a vast amount of programming-language data is available, the method does not require heavy manual annotation and can automatically extract implicit planning signals from existing data. Moreover, since existing code covers problems across many domains, CodePlan not only handles complex reasoning problems but also generalizes well to other tasks.

On 13 challenging benchmarks, CodePlan achieved an average relative performance improvement of 25.1%. The research team has open-sourced 2 million reasoning examples containing code-form plans to promote research in this direction.

Paper Title:

CodePlan: Unlocking Reasoning Potential in Large Language Models by Scaling Code-form Planning

Paper Link:

https://arxiv.org/pdf/2409.12452

Code Link:

https://github.com/thu-coai/CodePlan

Dataset Link:

https://huggingface.co/datasets/jiaxin-wen/CodePlan

The Achilles’ Heel of Reasoning Ability

Behind the rapid advances in large-model reasoning lies a neglected phenomenon: as researchers pursue ever larger parameter counts and data volumes, the “thinking entropy increase” in models has become increasingly severe.

It manifests in two main ways. The first is reasoning over-expansion: even a simple question like “2+3=?” leads the o1 model to generate a chain of thought of over 200 tokens. The second is insufficient focus: when solving complex problems, the model frequently jumps between ideas but fails to pursue any direction deeply enough to reach the correct answer.

This exposes a fundamental contradiction in the current technical route: the inherently unstructured nature of natural language conflicts with the rigorous planning framework that systematic reasoning requires.

A closer look shows that existing reasoning models rely primarily on two steps: first, randomly exploring and generating a massive number of reasoning paths in natural language; then, using reinforcement learning to select the high-quality trajectories among them. This broadens the exploration space, but it is akin to searching aimlessly for an oasis in a vast desert; without an effective navigation mechanism, inefficiency is inevitable. More critically, free-form natural-language reasoning fails to crystallize reusable structured knowledge, so the model must start from scratch each time it faces a new problem.

Therefore, while existing methods have cultivated strong intuition in models, they overlook the most essential feature of human thinking: the ability to systematize scattered knowledge through high-level planning.

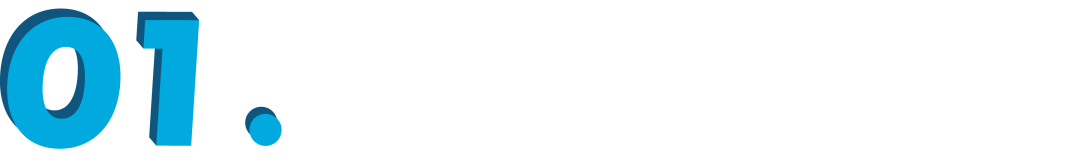

▲ CodePlan achieves significant improvements across 5 core reasoning tasks. Vanilla: baseline method without planning; Natural Language Plan: planning method based on natural language.

CodePlan: Equipping Large Models with a Structured Thinking Engine

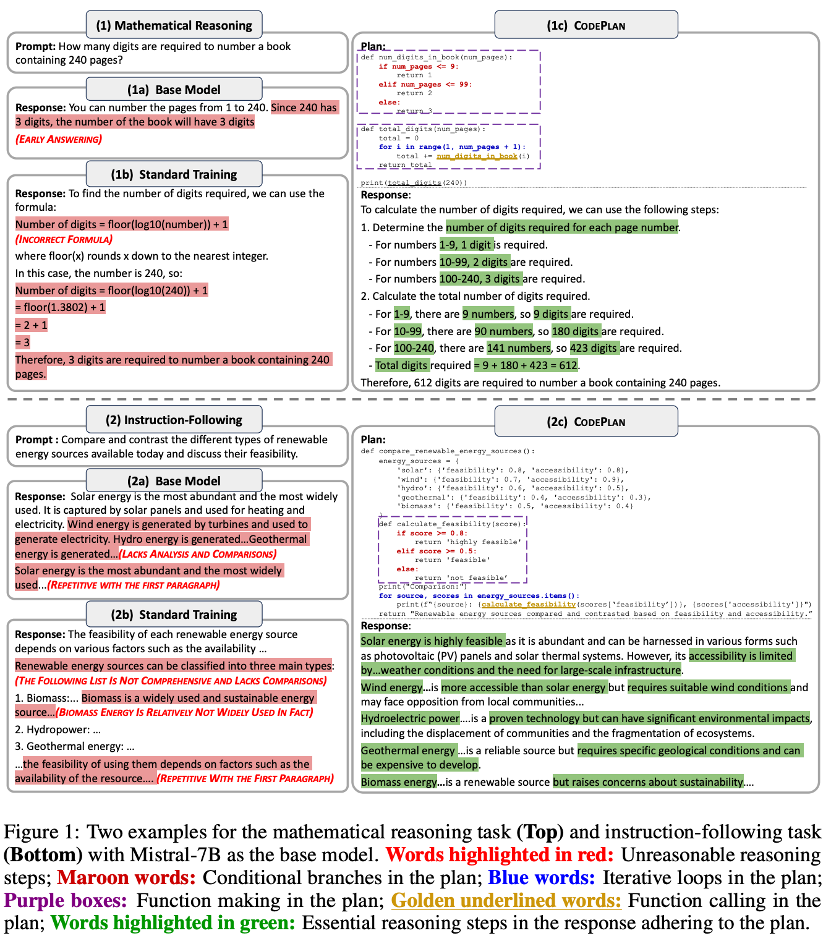

In the face of the bottleneck in large-model reasoning, the research team proposed the CodePlan framework, whose core innovation is introducing “Code-Form Planning” as an intermediate representation of thought.

The approach rests on expressing reasoning structure precisely. By bringing the rigorous structure of programming languages into the reasoning process, CodePlan builds a reliable “thinking operating system” for large models. It achieves structured thinking at two levels: first outlining a high-level reasoning framework in Python-style pseudocode, then systematically unfolding the specific reasoning steps based on that framework.

As shown in the figure, this code-based form of expression has four core advantages:

- Conditional Branching Capability: Dynamically adjust reasoning paths through if statements, achieving flexible context adaptation;

- Loop Iteration Structure: Efficiently handle sequential data and repetitive operations using for loops;

- Modular Tools: Enhance the model’s ability to create and use tools through function definitions and calls;

- Hierarchical Architecture: Support modular decomposition of complex reasoning tasks through variable definitions, sub-task breakdowns, and rigorous logical arrangements.
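To make the four constructs above concrete, here is a minimal sketch of what a code-form plan might look like for a last-letter concatenation problem. It is an illustration written for this article, not an example taken from the CodePlan dataset; the function names `last_letter` and `plan` are hypothetical. In the framework described above the plan is Python-style pseudocode that guides the model's natural-language answer; it is written as runnable Python here only so that it can be checked.

```python
def last_letter(word: str) -> str:
    """Modular tool: a small reusable helper defined once and called below."""
    return word[-1]


def plan(words: list[str]) -> str:
    # Variable definition: intermediate state that the later steps build on.
    letters = []
    # Loop iteration: handle each element of the sequence in turn.
    for word in words:
        # Conditional branching: skip empty inputs instead of failing on them.
        if word:
            letters.append(last_letter(word))
    # Final step of the hierarchy: combine the sub-results into the answer.
    return "".join(letters)


print(plan(["Elon", "Musk"]))  # prints: nk
```

The plan itself is only a few lines long, yet it fixes the order of the steps and the handling of edge cases before any natural-language explanation is written.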

Compared with traditional natural-language planning, CodePlan has clear advantages. Python code conveys planning information more concisely, and it is widely represented in pre-training corpora, so the model already acquires a deep understanding of code structure during pre-training. This innate “code literacy” lets the model generate and understand planning information more naturally, significantly reducing the learning cost. More importantly, the planning method is remarkably versatile: from mathematical reasoning to instruction understanding, from symbolic computation to open-ended questions, it can construct a clear code-form plan.

Broadly Enhancing Model Reasoning Capabilities

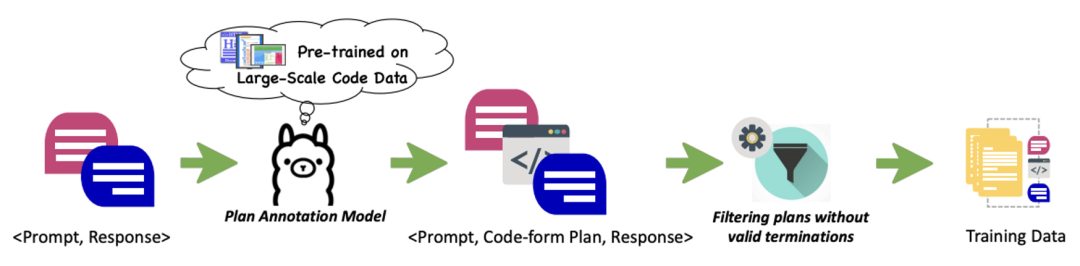

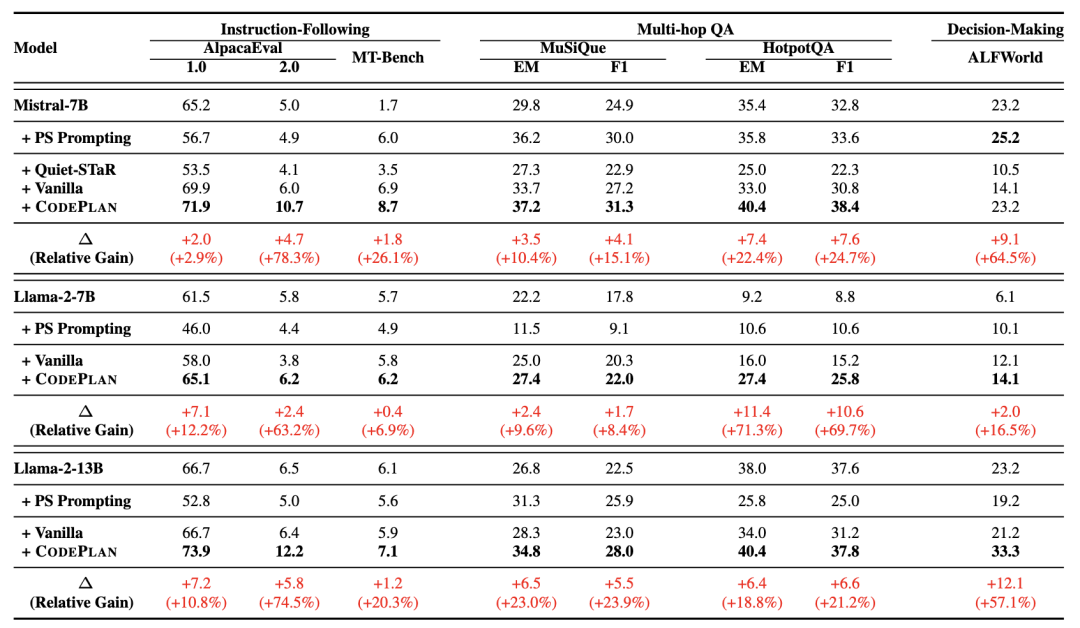

To validate the effectiveness of CodePlan, the research team built an efficient method for automatically mining planning information. As shown in the figure below, the method has two key innovations: first, a code pre-trained model parses the reasoning structure hidden in text and transforms it into an explicit pseudocode representation; second, a dynamic filtering mechanism based on heuristic scoring ensures the quality of the extracted plans. With this method, the team constructed a large-scale dataset of 2 million “<User Prompt, Code Planning, Response>” triples.

▲ Training data construction process

The experimental results are encouraging. The research team systematically evaluated CodePlan with Mistral and Llama as base models on 13 challenging benchmarks spanning five domains: mathematical reasoning, symbolic computation, instruction understanding, multi-hop question answering, and decision-making.

Compared with the baseline (Vanilla), which generates reasoning steps directly from the user instruction, and with the traditional natural-language planning method (PS Prompting), CodePlan achieved significant improvements across all tasks. The gains are more pronounced on more complex tasks. For example, on the Last Letter task, the accuracy of Mistral-7B improved by over 20 percentage points, demonstrating CodePlan’s advantage on difficult reasoning problems.
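The “<User Prompt, Code Planning, Response>” triple and the idea of score-based filtering can be sketched as follows. This is only a rough illustration under stated assumptions: the article does not describe the actual heuristics, so the scoring rule here (the fraction of plan lines that begin with a structural keyword), the threshold, and the names `PlanExample`, `heuristic_score`, and `filter_examples` are all hypothetical stand-ins.

```python
from dataclasses import dataclass


@dataclass
class PlanExample:
    """One <User Prompt, Code Planning, Response> triple."""
    prompt: str
    plan: str
    response: str


# Assumption: a plan line counts as "structured" if it starts with one of these.
STRUCTURE_KEYWORDS = ("def ", "for ", "if ", "while ", "return ")


def heuristic_score(example: PlanExample) -> float:
    """Hypothetical score in [0, 1]: share of non-empty plan lines that are structural."""
    lines = [ln.strip() for ln in example.plan.splitlines() if ln.strip()]
    if not lines:
        return 0.0
    structured = sum(ln.startswith(STRUCTURE_KEYWORDS) for ln in lines)
    return structured / len(lines)


def filter_examples(examples: list[PlanExample], threshold: float = 0.3) -> list[PlanExample]:
    """Keep only the triples whose plan scores at or above the (assumed) threshold."""
    return [ex for ex in examples if heuristic_score(ex) >= threshold]


good = PlanExample(
    prompt="Concatenate the last letters of the words in 'Elon Musk'.",
    plan=(
        "def solve(words):\n"
        "    letters = []\n"
        "    for w in words:\n"
        "        letters.append(w[-1])\n"
        "    return ''.join(letters)"
    ),
    response="The last letters are n and k, so the answer is nk.",
)
bad = PlanExample(
    prompt="Concatenate the last letters of the words in 'Elon Musk'.",
    plan="# think about the question\nanswer = guess",
    response="The answer is probably nk.",
)

kept = filter_examples([good, bad])
print(len(kept))  # prints: 1
```

A real pipeline would likely use richer signals than keyword counts; the point of the sketch is only the shape of the data and the filter-by-score step.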

1. The More Complex the Task, the More Significant the Improvement

A closer analysis of the results reveals a notable property of CodePlan: as task complexity increases, its performance advantage becomes more pronounced. The research team analyzed this in detail on the multi-hop question answering task, splitting the dataset by number of reasoning steps (2 hops, 3 hops, 4 hops) to show the pattern clearly.

▲ Performance comparison in multi-hop question answering tasks

As shown in the figure above, on relatively simple 2-hop questions CodePlan already shows stable improvements over the baseline model, while on complex problems requiring more than three reasoning hops the performance gap widens dramatically. On the most challenging 4-hop problems, CodePlan’s advantage peaks, demonstrating its capability in deep reasoning.

This “the harder, the stronger” characteristic stems from CodePlan’s structured reasoning framework. By decomposing a complex reasoning process into clear code steps, the model can better manage long-range dependencies and avoid the logical breaks and attention drift common in traditional multi-step reasoning.

2. More Efficient and Stable Post-Training

While exploring CodePlan’s training characteristics, the research team found another important advantage: it provides a more efficient and reliable path for post-training large models.

▲ CodePlan’s training curve

As shown in the figure above, on the representative tasks of GSM8K (mathematical reasoning) and MuSiQue (multi-hop question answering), CodePlan shows clear training advantages. Traditional post-training (blue line) fluctuates noticeably during training, whereas CodePlan (orange line) improves faster and maintains a stable upward trend.

This reveals a core advantage of CodePlan: by introducing structured code planning as an intermediate representation, it establishes a more universal learning framework. The framework reduces differences in expression across tasks, letting the model focus on learning the essential reasoning patterns and thereby achieve efficient knowledge transfer and stable accumulation. This improves training efficiency and gives a reliable foundation for the continued evolution of large-model capabilities.

3. Case Analysis: Simplifying Complexity with Structured Thinking

Consider the “Numerical Comparison” problem (which is larger, 9.8 or 9.11?) and the “Letter Counting” problem (how many times does the letter r occur in “strawberry”?), which seem simple but often stump models.

As shown in the table above, CodePlan solves these problems cleanly by introducing code-form planning. In stark contrast, models without planning often give vague or incorrect answers: they either jump straight to a conclusion or get caught in lengthy but inaccurate explanations, reflecting the lack of a systematic approach to thinking.

This comparison indicates that CodePlan does not simply tell the model “what to do” but teaches it “how to think”. By breaking complex tasks down into clear code steps, CodePlan gives the model a reliable problem-solving paradigm.
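For readers who want to see what code-form plans for these two cases might look like, the sketch below writes them out as runnable Python. It is an illustration prepared for this article and does not reproduce the contents of the table; the function names `compare_numbers` and `count_letter` are hypothetical.

```python
from decimal import Decimal


def compare_numbers(a: str, b: str) -> str:
    """Plan for "which is larger?": parse both values exactly, then compare."""
    # Step 1: convert the strings to exact decimals (9.8 is 9.80, not "9 and 8").
    x, y = Decimal(a), Decimal(b)
    # Step 2: branch on the comparison result.
    if x > y:
        return a
    if y > x:
        return b
    return "equal"


def count_letter(word: str, letter: str) -> int:
    """Plan for letter counting: walk the word one character at a time."""
    count = 0
    for ch in word:
        if ch == letter:
            count += 1
    return count


print(compare_numbers("9.8", "9.11"))   # prints: 9.8
print(count_letter("strawberry", "r"))  # prints: 3
```

In both cases the plan forces an explicit intermediate step (aligning the decimals, visiting each character) that an unstructured answer tends to skip.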

Conclusion: Pioneering New Approaches to Structured Thinking in Large Models

The introduction of CodePlan offers a new approach to developing reasoning capabilities in large models. By incorporating code-form planning into the reasoning process, it addresses the structural flaws of natural-language expression; more importantly, it pioneers a methodology that injects systematic problem-solving capabilities into large models.

By open-sourcing 2 million planning examples, the research team contributes resources to the whole community. Building on this, we look forward to more application breakthroughs in high-demand scenarios such as finance and healthcare.