Configuring Different Learning Rates: Can LoRA Improve Further?

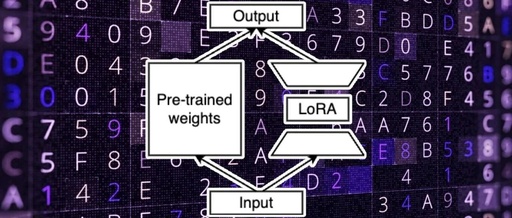

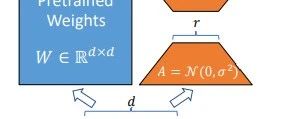

©PaperWeekly Original · Author | Su Jianlin · Affiliation | Dark Side of the Moon · Research Direction | NLP, Neural Networks

LoRA (Low-Rank Adaptation) is one of the parameter-efficient fine-tuning methods for current LLMs. We previously discussed it briefly in “LoRA from a Gradient Perspective: Introduction, Analysis, Speculation, and Promotion”. In this article, we will learn …