Printed circuit boards (PCBs) mechanically support and electrically interconnect electronic components. They are found in everyday devices such as mobile phones, routers, and personal computers, as well as in complex systems like radars, missiles, and satellites. Their brilliant designs are usually hidden by the device’s casing, and the genius behind them goes unnoticed until a device malfunctions or needs a functional expansion. Only when the casing is opened is the beauty of the PCB revealed.

Prehistoric Era of PCB

The origins of electronics can be traced back to 1897 when Joseph Thomson discovered the existence of electrons. Electronics developed on the foundations of early electromagnetism and electrical engineering. Before the birth of electronics, humans had already conducted in-depth studies on electromagnetic phenomena. A series of physical laws had been established, such as Coulomb’s Law, Ampère’s Law, Ohm’s Law, Lenz’s Law, and Faraday’s Law of Electromagnetic Induction.

On June 13, 1831, the great James Clerk Maxwell was born at 14 India Street, Edinburgh, UK. Maxwell is widely regarded as one of the most influential physicists of the 19th century, and his work shaped the advances in physics of the early 20th century. In his 1864 paper, “A Dynamical Theory of the Electromagnetic Field,” he synthesized previous research in electromagnetism and proposed that electric and magnetic fields propagate through space as waves at the speed of light. He concluded that light itself is an electromagnetic disturbance, theoretically predicted the existence of electromagnetic waves, and unified electricity, magnetism, and light as manifestations of the electromagnetic field. He established the famous Maxwell’s equations, laying a strong theoretical foundation for the application of electromagnetism in production and daily life.

Electromagnetism is the most important discipline in modern technological life. Its rapid development has ushered humanity into the electrical and information age. It can be said that without the development of electromagnetism, there would be no modern civilization; the achievements of this natural science theory laid the foundation for modern power, electronics, and radio industries. Of course, without Maxwell, there would be no PCB, and there would be no PCB layout industry. Perhaps the older Wu is still the most stylish guy selling pork at the village entrance.

With electromagnetic theory to build on, people steadily learned to put electromagnetism to practical use. The wired telegraph and telephone followed, leading to telegraph and telephone lines across the American continent and the transatlantic submarine cable. Alexander Graham Bell patented the telephone in 1876, Thomas Edison invented a practical incandescent lamp in 1879, and Nikola Tesla patented the AC induction motor in 1888. All these inventions prepared the conditions for the birth of electronics.

Two significant historical events marked the birth of electronics: the discovery of the Edison effect and the experimental verification of the existence of electromagnetic waves.

In 1883, Edison, while working to extend the life of carbon filament lamps, accidentally discovered that current flowed between the filament and an electrode with positive voltage, while no current flowed when a negative voltage was applied. This is the Edison effect, which later led to the invention of the electron tube.

In 1887, German physicist H.R. Hertz conducted an experiment using a spark gap to excite a loop antenna, receiving with another loop antenna with a gap, confirming Maxwell’s prediction of the existence of electromagnetic waves. This important experiment led to the invention of wireless telegraphy.

The invention of wireless telegraphy was the first significant achievement of humanity in utilizing electromagnetic waves, marking the beginning of a prosperous period of research and application of electromagnetic waves in electronics.

Electromagnetic waves are the cornerstone of communication between electronic components, and the invention of the electron tube gave birth to the PCB.

In 1897, German scientist Braun manufactured the first vacuum tube, marking the beginning of the vacuum tube era in electronics. Essentially, Braun’s vacuum tube was just a prototype of the cathode ray tube, with limited functionality.

In 1904, British inventor John Fleming invented the vacuum diode, composed of a heated filament that emits electrons and a plate (anode) that receives them. When a positive voltage is applied to the plate, current flows; when a negative voltage is applied, no current flows.

By 1906, De Forest added a grid to the vacuum tube and invented the triode, which could control the flow of electrons, providing the powerful function of amplifying signals and controlling electrical quantities. This advancement brought electronic circuit technology into practical application, promoting the development of wireless and other electronic industries. In the following 20 years, various electronic devices continued to emerge, and De Forest was hailed as the “Father of Radio,” “Grandfather of Television,” and “Father of the Electron Tube.”

The electron tube was the first generation of electronic devices. For nearly half a century before the invention of the transistor, the electron tube was almost the only available electronic device in various electronic equipment. Many subsequent achievements in electronics, such as the invention of television, radar, and computers, are inseparable from the electron tube. Even in today’s thriving solid-state electronics, vacuum electronics, represented by high-power electron tubes (especially microwave power tubes) and electron beam tubes, remain an active field.

From the pictures above, we can see how troublesome and inefficient it was to produce an electronic device before PCB technology was widely applied. A large number of electron tubes required manual wiring and soldering with insulated resin-coated wires between components, leading to several issues:

- Manual wiring is inefficient and cannot achieve mechanized mass production.
- Manual wiring is prone to installation errors, making inspection difficult.
- The reliability of terminal soldering is low, easily loosening and causing poor contact.

To simplify the production of electronic machines, reduce wiring between electronic components, lower production costs, and improve the reliability of electronic machines, researchers began exploring methods to replace wiring with printing techniques to achieve precision mass production using machines.

The Birth and Development of Printed Circuit Boards

After Faraday published his law of electromagnetic induction in 1831, people began researching how to utilize electromagnetic principles for long-distance communication. Samuel Morse invented the telegraph in 1837, and Bell obtained the patent for the telephone in 1876. By 1904, there were 3 million telephones in the United States that required manual telephone exchange connections.

Printed circuit boards also emerged with the development of electronic connection systems to solve the connection issues in telegraph/telephone systems. Initially, metal strips or rods were used to connect large electronic components mounted on wooden bases. Over time, metal strips were replaced by screw terminals and cables that could be screwed in, providing greater flexibility, while wooden bases were replaced by metal bases. However, with the expansion of telegraph/telephone services, the number of telephone exchange lines increased, and the electronic operations related to telephone systems became more complex, necessitating smaller and more compact designs.

The image shows the manual telephone exchange at 14 Hankou Road, operated by British company Huayang Telegraph Company in 1907.

The Cradle Period of Printed Circuit Boards

The earliest patent related to PCBs dates back to 1903 when a famous German inventor named Albert Hanson applied for a British patent. He pioneered the concept of using “lines” in telephone switch systems, employing metal foils cut into conductive lines, and then adhering wax paper on both sides of the line conductors, creating conductive holes at the junctions to achieve electrical interconnection between different layers. This method is distinctly different from modern PCB manufacturing techniques, as phenolic resin had not yet been invented, and chemical etching technology was still immature. Albert Hanson’s invention can be seen as the prototype of modern PCB manufacturing.

In 1907, American chemist Leo Hendrik Baekeland improved the production technology of phenolic resin, making the resin practical and industrialized. This also created the necessary conditions for the emergence and development of printed circuit boards.

In the 1920s, early PCB materials were diverse, ranging from Bakelite (the aforementioned phenolic resin) to ordinary old plywood. Holes could be drilled into the materials, and flat copper wires could be riveted onto them. The appearance may not have been very attractive, but the concept of printed circuit boards was born from this period. These circuit boards were mainly used in radios and phonographs.

The Invention of Printed Circuit Boards

Recall Hertz from earlier: he confirmed Maxwell’s prediction of electromagnetic waves in 1887. By the 1920s, radio had attracted global attention, and electron tube technology had matured to the point where radio broadcasting could begin. The rapid growth in radio manufacturing also drove the evolution of printed circuit board technology.

In 1925, American Charles Ducas printed circuit patterns on an insulated substrate and successfully established conductors for wiring through electroplating. At this time, the term “PCB” was born. This method made the manufacturing of electrical devices easier.

In 1936, Austrian Paul Eisler published his foil technology in the UK, using a printed circuit board in a radio device. Also in 1936, Japanese inventor Kiyoshi Miyamoto successfully patented the spray attachment method for wiring. Of these approaches, Paul Eisler’s is the most similar to modern printed circuit boards: it is a subtractive method, which removes unwanted metal, whereas Charles Ducas’s and Kiyoshi Miyamoto’s are additive methods, which add only the necessary wiring.

Paul Eisler is also known as the “Father of Printed Circuits,” but because the electron tube components of the time were bulky and generated a lot of heat, they were inconvenient to mount on printed circuit boards. Eisler’s significant invention received little attention in the UK at the time, and in the US, PCB manufacturing technology was primarily applied to military products.

By 1942, Dr. Paul Eisler continued to improve his PCB production methods, inventing the world’s first practical double-sided PCB, which was officially produced by Pye Company. This patent application was approved in 1943.

Around 1943, the US began to use Paul Eisler’s technology on a large scale to manufacture proximity fuses for use in World War II and extensively in military radios.

During World War II, PCBs began to be used in proximity fuses.

In 1947, epoxy resin began to be used as a substrate material. At the same time, the US National Bureau of Standards (NBS) began researching manufacturing techniques that used printed circuit technology to form coils, capacitors, resistors, and other components.

In 1948, the US officially recognized the invention of printed circuit boards for commercial use.

However, in the 1950s, electronic devices using PCBs were still rare. For example, the Motorola television produced in 1948 still did not feature PCBs. Goodness, it was filled with electron tubes. If you had to repair this machine back then, you would have been completely lost. If you now own a working Motorola tube television, it would be worth a fortune. Only wealthy people could afford a television in 1948.

The Spring of Printed Circuit Boards

The period from the 1950s to the 1990s saw the formation and rapid growth of the PCB industry. This was the early stage of PCB industrialization, when PCB manufacturing became an industry in its own right.

After the US officially recognized the invention of PCBs for commercial use in 1948, large-scale application of PCBs began to shift from military to civilian products.

With the development of electronic technology, in December 1947, the research team of Shockley, Bardeen, and Brattain at Bell Labs developed the transistor, which, being smaller and generating less heat, began to replace electron tubes in large quantities starting in the 1950s, thus creating favorable conditions for the widespread use of printed circuit board technology.

In 1950, Japanese companies attempted to use silver as a conductor on glass substrates and copper foil on phenolic resin paper substrates. From 1950 onwards, the manufacturing technology of printed circuits began to gain widespread acceptance, with etching playing a dominant role. As transistors became practical, single-sided PCBs produced by metal foil etching were successfully developed in the US and quickly applied industrially.

In 1951, polyimide materials were born.

In 1953, Motorola developed a double-sided board using a plated through-hole method. Around 1955, Toshiba in Japan introduced a technology that generated copper oxide on copper foil surfaces, leading to the emergence of copper-clad laminates (CCL). Both technologies were later widely used in the manufacture of multilayer printed circuit boards, facilitating the emergence of multilayer PCBs.

Ten years after the widespread use of printed circuit boards, by the 1960s, PCB technology had matured. Since Motorola’s double-sided board was introduced, multilayer printed circuit boards began to appear, increasing the ratio of wiring to substrate area.

The image shows the “Digital Laboratory Module” launched by DEC in the 1950s.

Printed Circuit Boards Have Become Indispensable

Between 1958 and 1959, Texas Instruments’ Jack Kilby and Fairchild Semiconductor’s Robert Noyce invented the integrated circuit within months of each other, marking the beginning of microelectronics history.

In the 1960s, multilayer (4+ layers) PCBs began production, and plated through-hole double-sided PCBs achieved mass production.

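In 1965, Gordon Moore proposed what became known as Moore’s Law, predicting that the number of transistors on an integrated circuit would double at a regular interval: initially every year, revised in 1975 to every two years, and popularly quoted as every 18 months.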
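As a rough illustration of what a fixed doubling period implies, the sketch below computes the growth factor after a given number of years. The function name `growth_factor` is hypothetical, and the default 18-month period is simply the figure quoted above; other doubling periods can be passed in to compare.

```python
def growth_factor(years: float, doubling_months: float = 18.0) -> float:
    """Return how many times a quantity multiplies after `years`
    if it doubles once every `doubling_months` months."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    doublings = years * 12.0 / doubling_months  # number of doubling periods elapsed
    return 2.0 ** doublings


if __name__ == "__main__":
    # Compare a few assumed doubling periods over one decade.
    for period in (12.0, 18.0, 24.0):
        factor = growth_factor(10, period)
        print(f"doubling every {period:.0f} months -> x{factor:,.0f} in 10 years")
```

Even a small change in the assumed doubling period compounds into a very different result over a decade, which is why the exact figure matters when the law is used for planning.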

In 1970, Intel launched the 1103, a 1 Kbit dynamic random-access memory (DRAM) chip, marking the emergence of large-scale integrated circuits.

In 1971, the world’s first microprocessor, the Intel 4004, was launched, adopting MOS technology, a milestone invention.

With the large-scale application of integrated circuits, manufacturing electronic products without printed circuit boards would have been impractical: the number of interconnections was far too large to wire by hand.

In the 1970s, multilayer PCBs rapidly developed, continuously moving towards high precision, high density, fine lines, small holes, high reliability, low cost, and automated continuous production to keep pace with Moore’s Law.

Although multilayer PCBs began to develop rapidly starting in the 1970s, PCB design work was still done manually at that time.

PCB layout engineers would use colored pencils and rulers to draw circuits on transparent polyester film sheets, and to improve drawing efficiency, templates for common component packages and circuit templates were available.

In the 1980s, surface mount technology began to gradually replace through-hole mounting as the mainstream assembly method, and electronics entered the digital age. The development of electronic devices such as personal computers, optical discs, cameras, game consoles, and portable music players significantly changed the way we consume media.

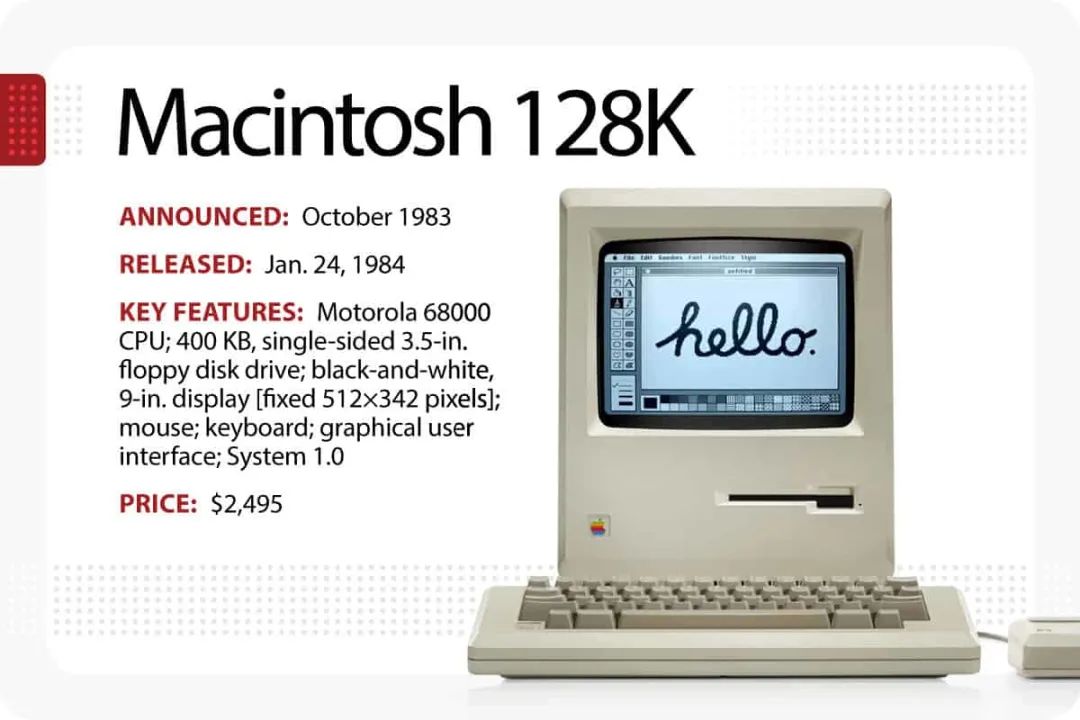

In 1981, Microsoft released the MS-DOS 1.0 system, and in 1984, Jobs’ Apple released the Macintosh computer (now commonly referred to as Mac). In 1984, Lenovo was founded and began assembling personal computers, leading to the popularization of personal computers. CAD software began to emerge and rapidly develop.

The emergence of CAD software improved the drawing efficiency of designers and increased the reusability of PCB designs, saving time on repetitive work. Once a PCB design was complete, Gerber files could be exported directly to photoplotters. At the same time, PCB manufacturing began to replace manual labor with machinery, greatly improving production efficiency. PCBs that previously took weeks to deliver could now be delivered within a few hours, leading to the emergence of quick-turn PCB manufacturers.

From the 1990s to Present, the PCB Industry Has Matured

In 1993, Motorola’s Paul T. Lin applied for a patent for a packaging technology called BGA (Ball Grid Array), marking the beginning of organic packaging substrates.

In 1995, Panasonic developed a build-up multilayer (BUM) PCB manufacturing technology with the ALIVH (Any Layer Via Hole) structure, indicating that PCBs had entered the era of high-density interconnection (HDI).

In the early 2000s, PCBs became smaller and more complex. 5-6 mil line widths/spacing became standard, and high-end PCB manufacturers began producing circuit boards with 3.5-4.5 mil line widths/spacing.
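Because the line widths here are given in mils while later HDI figures are given in micrometres, a small conversion helper makes the two comparable. This is a minimal sketch; the function names are hypothetical, and the only fact it relies on is that 1 mil is one thousandth of an inch, or 25.4 µm.

```python
MICRONS_PER_MIL = 25.4  # 1 mil = 0.001 inch = 25.4 micrometres


def mil_to_um(mil: float) -> float:
    """Convert a dimension in mils to micrometres."""
    return mil * MICRONS_PER_MIL


def um_to_mil(um: float) -> float:
    """Convert a dimension in micrometres to mils."""
    return um / MICRONS_PER_MIL


if __name__ == "__main__":
    # Line width/spacing values mentioned in the text, in mils.
    for mil in (3.5, 4.5, 5.0, 6.0):
        print(f"{mil} mil = {mil_to_um(mil):.1f} um")
```

On this scale, the standard 5-6 mil boards of the early 2000s correspond to traces well over 100 µm wide.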

At the same time, flexible PCBs became more common.

In 2006, the Every Layer Interconnection (ELIC) process was developed, which uses stacked copper to fill micro-holes to connect every layer of the circuit board. This unique process allows developers to establish connections between any two layers of the PCB. Although this process improves flexibility, allowing designers to maximize interconnect density, ELIC PCBs were not widely used until the 2010s.

Smartphones Drive the Development of HDI PCB Technology

The evolution of smartphones

With the development of smartphones, in the early 21st century, the second generation of HDI emerged. Retaining laser-drilled micro-vias, stacked vias began to replace staggered vias, and combined with “any layer” construction technology, the final line width/spacing of HDI boards reached 40μm.

This method is still based on the subtractive process, and most high-end HDI boards for mobile electronic products still use it. However, in 2017, HDI began to enter a new stage of development, shifting from subtractive processes to pattern plating processes.

For example, in a 0.3 mm pitch BGA design, two traces must pass between BGA pads, with via sizes typically being 75 microns and pad sizes being 150 microns. The layout therefore requires 30 µm/30 µm line width/spacing. Achieving such fine-line structures with existing subtractive processes is quite challenging. Etching capability is one of the key factors: the finished copper thickness and plating uniformity need to be optimized alongside the imaging process. This is why the PCB industry is now adopting the modified semi-additive process (mSAP), which can produce traces with a well-controlled conductor shape across the entire PCB panel, with the top width of each trace almost equal to its bottom width. Another advantage of mSAP is that it builds on existing resources and technologies: it uses standard PCB processes such as drilling and plating and employs traditional materials, which ensures good adhesion between copper and dielectric layers and high reliability of the final product.
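The 30 µm figure follows from simple channel arithmetic, sketched below. The function name `max_line_space_um` is hypothetical, and the sketch assumes that line width equals spacing, that the 150 µm pads are the only copper bordering the channel, and that there is one spacing on each side of every trace. Real design rules add tolerances that this ignores.

```python
def max_line_space_um(pitch_um: float, pad_um: float, traces: int) -> float:
    """Largest equal line width/spacing (in micrometres) that fits `traces`
    traces between two adjacent pads, assuming width == spacing."""
    if traces < 1:
        raise ValueError("traces must be at least 1")
    gap = pitch_um - pad_um      # clear channel between the two pad edges
    if gap <= 0:
        raise ValueError("pads touch or overlap at this pitch")
    segments = 2 * traces + 1    # n traces plus (n + 1) spaces
    return gap / segments


if __name__ == "__main__":
    # Figures from the text: 0.3 mm pitch, 150 um pads, two traces.
    print(max_line_space_um(300, 150, 2))  # 30.0
```

With a 300 µm pitch and 150 µm pads, the 150 µm channel has to hold two traces and three spaces, so each of the five segments gets 30 µm.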

Next Generation HDI Processes

Driven by miniaturization, HDI and microvias have given high-density design significant momentum. These technologies will continue to evolve as IC geometries shrink. Beyond that, the next revolution is expected to be in the field of optical conductors.

As large-scale integrated circuit technology continues to improve, the performance of computer system processors increases. However, current electronic computers still use traditional copper wires for connections between chips: chip to chip, processor to processor, and circuit board to circuit board. The International Technology Roadmap for Semiconductors (ITRS) has indicated that future electronic systems will be limited by interconnections between chips, as the copper wires currently used face major issues:

(1) High-speed signal distortion and limited bandwidth;

(2) Transmission losses of metal wires increase with the frequency of signals, limiting the transmission distance of high-frequency signals;

(3) Susceptibility to electromagnetic interference;

(4) High power consumption, etc.

Optical communication offers many advantages that traditional electrical signaling does not, such as high bandwidth, low loss, no crosstalk, and resistance to electromagnetic interference. In fact, optical fiber has largely replaced copper wire for long-distance communication for decades. The trend is for the reach of optical interconnects to keep shortening, from long-distance links between countries down to, eventually, signal transmission within chips.

Currently, the industry widely believes that once the single-channel rate exceeds 25 Gb/s, electrical interconnects will face tremendous challenges in both technology and cost. To overcome this “bottleneck” of electronic computers, the traditional copper-based interconnects need to be replaced with optical interconnects by introducing optical technology into electronic systems. Doing so would significantly increase the operating speed of computers and promote the development of high-speed information communication networks, meeting the needs of societal development.