0. Introduction

For unmanned vehicles and intelligent robots, the extrinsic calibration between the sensors mounted during assembly has always been a challenging problem. The author has systematically studied both offline sensor extrinsic calibration and online calibration.

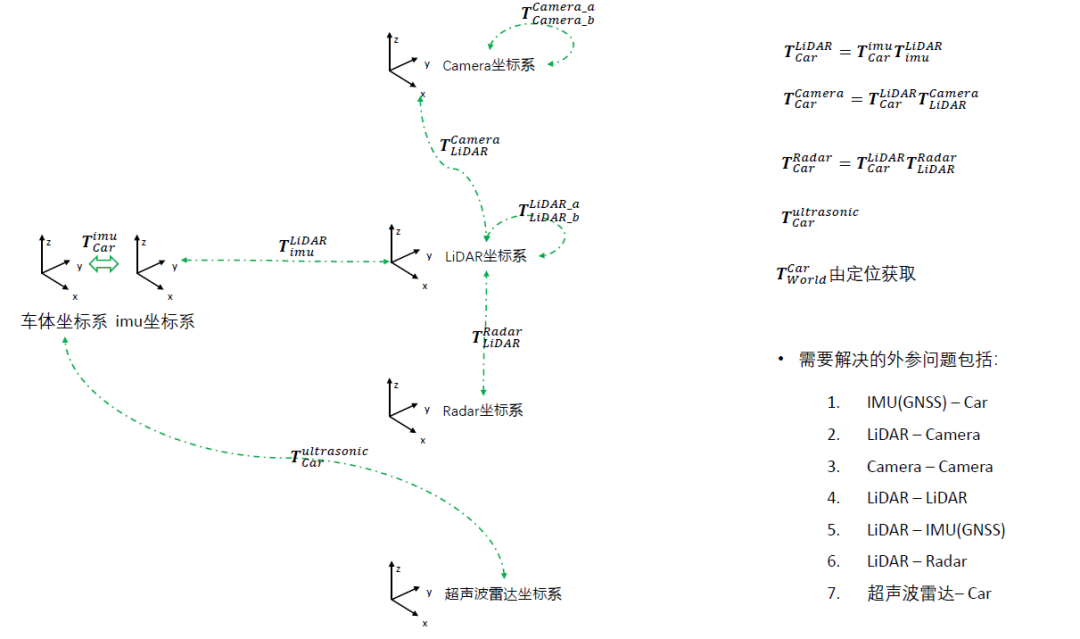

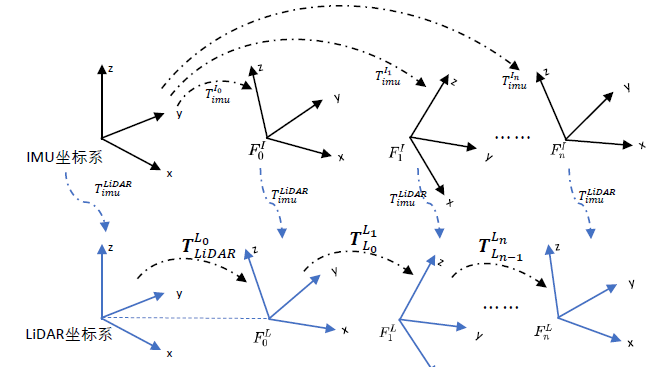

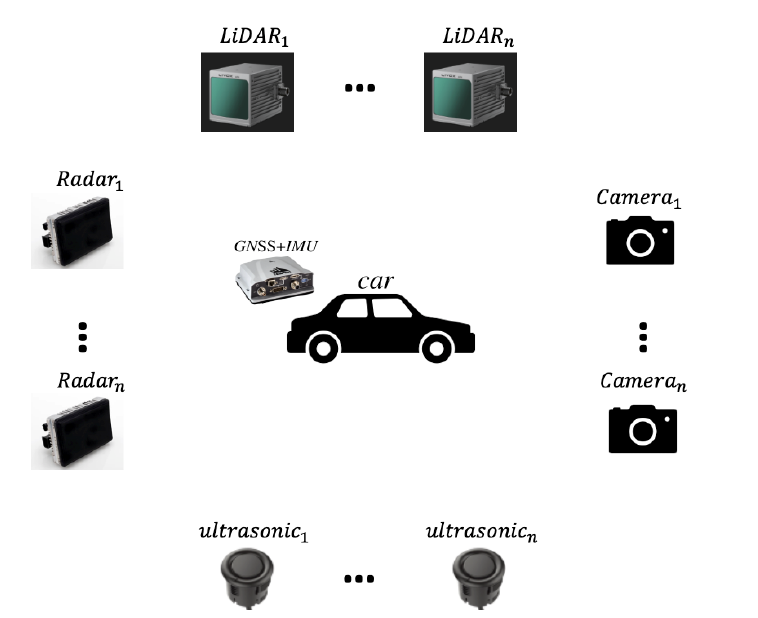

The following figure shows several commonly used coordinate systems. Common extrinsic calibration tasks involve the extrinsic parameters between IMU/GNSS and the vehicle body, Lidar and camera, Lidar and Lidar, and Lidar and IMU/GNSS.

1. Offline Extrinsic Calibration

1.1 IMU/GNSS and Vehicle Body Extrinsic Calibration

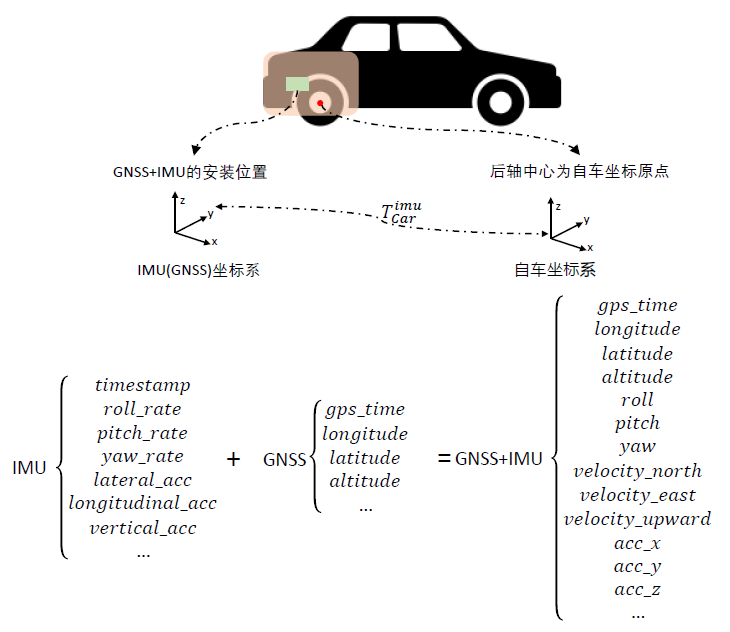

The extrinsic calibration of IMU/GNSS and the vehicle body is shown in the figure below; the main goal is to obtain the transformation $T_{car}^{imu}$. Such IMU/GNSS units fuse the two sensors internally through tight coupling and output a stream of calibrated poses.

Since the IMU runs at a much higher rate than GNSS, interpolating with the IMU data effectively raises the output rate of the integrated pose.
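To make the interpolation step concrete, the sketch below evaluates a pose at an arbitrary timestamp between two neighbouring IMU/GNSS samples, using linear interpolation for position and spherical linear interpolation (slerp) for orientation. The (w, x, y, z) quaternion order and the function names are assumptions for illustration, not the interface of any particular device driver.

```python
import math


def slerp(q0, q1, s):
    """Spherical linear interpolation between unit quaternions (w, x, y, z), 0 <= s <= 1."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:  # q and -q are the same rotation; take the shorter arc
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:  # nearly identical: normalized linear interpolation is stable
        q = [a + s * (b - a) for a, b in zip(q0, q1)]
    else:
        theta = math.acos(dot)
        w0 = math.sin((1.0 - s) * theta) / math.sin(theta)
        w1 = math.sin(s * theta) / math.sin(theta)
        q = [w0 * a + w1 * b for a, b in zip(q0, q1)]
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)


def interpolate_pose(t, t0, pose0, t1, pose1):
    """Pose at time t (t0 <= t <= t1); each pose is (position xyz, quaternion wxyz)."""
    s = (t - t0) / (t1 - t0)
    (p0, q0), (p1, q1) = pose0, pose1
    position = tuple(a + s * (b - a) for a, b in zip(p0, p1))
    return position, slerp(q0, q1, s)
```

Halfway between a sample at yaw 0 and one at yaw 90 degrees, this returns yaw 45 degrees and the midpoint position. Slerp is used rather than interpolating Euler angles because it follows the shortest rotation at constant angular speed.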

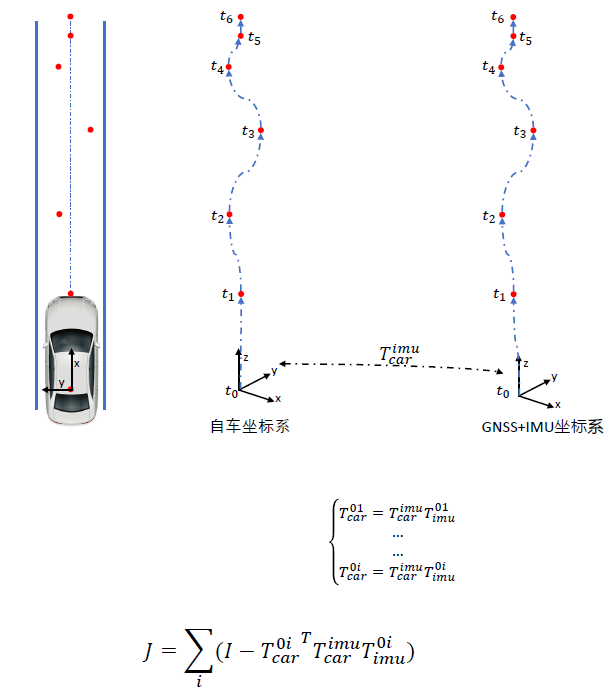

To calibrate the extrinsic parameters, the usual method is to record a sequence of poses while the vehicle moves and observe its motion through the GNSS/IMU (alternatively, the vehicle's own coordinates can be matched with GNSS coordinates by driving in circles and surveying points with a handheld receiver).

By collecting many such observations together with the corresponding GNSS transformations, we can build a cost function and optimize it for the extrinsic parameters.
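One generic way to write such a cost function is the hand-eye form. Assume, as an illustration and not necessarily the convention of the linked code, that $T_{a}^{b}$ maps coordinates from frame $b$ into frame $a$, so that $X = T_{car}^{imu}$ and the world poses satisfy $T_{w}^{imu}(t) = T_{w}^{car}(t)\,X$. The relative motions of the vehicle and the IMU between the same two timestamps are then related by a conjugation, and $X$ minimizes the residual over all pairs:

$$A_i = \left(T_{w}^{car}(t_i)\right)^{-1} T_{w}^{car}(t_{i+1}), \qquad B_i = \left(T_{w}^{imu}(t_i)\right)^{-1} T_{w}^{imu}(t_{i+1}) = X^{-1} A_i X$$

$$X^{*} = \arg\min_{X} \sum_i \left\| \operatorname{Log}\!\left( (A_i X)^{-1} X B_i \right) \right\|^2$$

Here $\operatorname{Log}$ maps an $SE(3)$ element to a 6-vector, and the residual vanishes exactly when $A_i X = X B_i$.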

Here we also provide reference code that jointly corrects the pose between the vehicle odometry frame (odom) and the IMU. Because the data collection of the IMU and the wheel speed sensors is inherently difficult to align in time, a time offset (`delta_t`) is introduced to represent the offset between the sampling times of the two. The newly generated wheel speed data are calibrated iteratively against the original IMU data, the result with the smallest error is selected as the final calibration between the two, and the corresponding `delta_t` is taken as the sampling time offset between them.

Reference link:

https://github.com/smallsunsun1/imu_veh_calib
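The `delta_t` search described above can be sketched as a simple grid search, assuming both sensors provide a yaw rate (wheel odometry and IMU gyro z) as timestamped samples: for each candidate offset, the IMU signal is linearly interpolated at the shifted wheel timestamps and the offset with the smallest mean squared error is kept. The function names, the grid search and the synthetic sine signal are illustrative assumptions; the linked repository may proceed differently.

```python
import bisect
import math


def interp(ts, vs, t):
    """Linearly interpolate samples (ts sorted ascending) at time t; None if outside."""
    if t < ts[0] or t > ts[-1]:
        return None
    i = bisect.bisect_right(ts, t)
    if i == len(ts):  # t equals the last timestamp
        return vs[-1]
    t0, t1 = ts[i - 1], ts[i]
    return vs[i - 1] + (vs[i] - vs[i - 1]) * (t - t0) / (t1 - t0)


def estimate_delta_t(imu_t, imu_rate, wheel_t, wheel_rate, candidates):
    """Grid-search delta_t so that wheel_rate(t) best matches imu_rate(t + delta_t)."""
    best_dt, best_err = None, math.inf
    for dt in candidates:
        sq_errors = []
        for t, w in zip(wheel_t, wheel_rate):
            v = interp(imu_t, imu_rate, t + dt)
            if v is not None:
                sq_errors.append((v - w) ** 2)
        if sq_errors:
            err = sum(sq_errors) / len(sq_errors)
            if err < best_err:
                best_dt, best_err = dt, err
    return best_dt, best_err


# Synthetic check: IMU yaw rate at 200 Hz, wheel yaw rate at 50 Hz, wheel stamps offset by 0.03 s.
imu_t = [i * 0.005 for i in range(2001)]
imu_rate = [math.sin(t) for t in imu_t]
wheel_t = [1.0 + i * 0.02 for i in range(400)]
wheel_rate = [math.sin(t + 0.03) for t in wheel_t]
candidates = [i * 0.005 - 0.1 for i in range(41)]
delta_t, err = estimate_delta_t(imu_t, imu_rate, wheel_t, wheel_rate, candidates)
print(f"delta_t = {delta_t:.3f} s, mse = {err:.2e}")
```

A coarse grid followed by a finer grid around the best value is a cheap way to refine the estimate; yaw rate is used here because it is observed by both sensors regardless of the lever arm.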

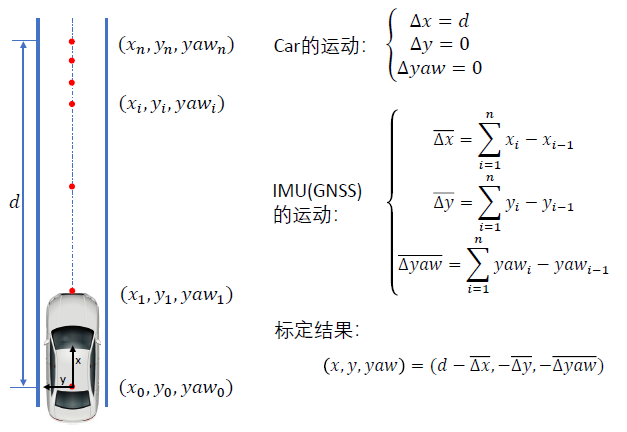

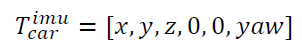

In fact, for a vehicle moving on a plane, it is sufficient to observe $x, y, yaw$. Constraints for the calibration can therefore be built from straight-line driving segments, which reduces the problem to a planar one.
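As an illustration of how a straight-line segment constrains the yaw, the following sketch assumes the vehicle drives straight forward without sideslip, so its heading equals the direction of travel given by consecutive GNSS positions; the difference between the IMU-reported yaw and that direction is then the yaw mounting offset. The sign convention, the 0.05 m stationarity threshold and the helper names are assumptions.

```python
import math


def wrap(a):
    """Wrap an angle to (-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def yaw_offset_from_straight_run(positions, imu_yaws):
    """Estimate yaw offset = imu_yaw - travel_direction along a straight run.

    positions: (x, y) GNSS positions in a local ENU frame.
    imu_yaws: IMU yaw (rad) at the same timestamps.
    A circular mean is used so that angles near +/-pi average correctly.
    """
    sin_sum = cos_sum = 0.0
    for (x0, y0), (x1, y1), yaw in zip(positions, positions[1:], imu_yaws):
        if math.hypot(x1 - x0, y1 - y0) < 0.05:  # skip near-stationary steps
            continue
        d = wrap(yaw - math.atan2(y1 - y0, x1 - x0))
        sin_sum += math.sin(d)
        cos_sum += math.cos(d)
    return math.atan2(sin_sum, cos_sum)
```

For a run heading 30 degrees with the IMU reporting 32 degrees, the function returns about 2 degrees. Turning segments are still needed to observe the lever arm ($x$, $y$), since a pure translation does not reveal it.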

1.2 Camera to Camera Extrinsic Calibration

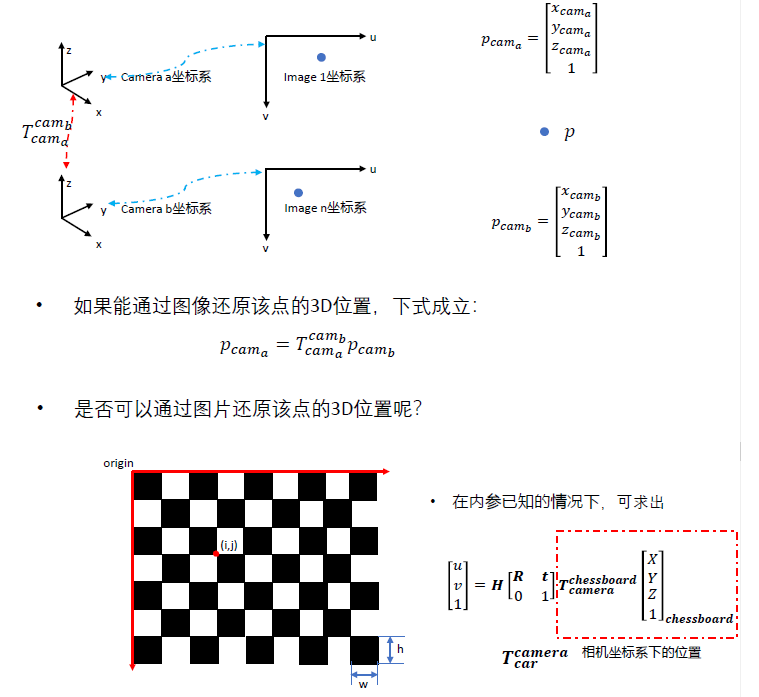

The calibration between cameras follows essentially the same steps as stereo camera calibration: the image observations are used to recover the positions of points in 3D space. Once the transformation between each camera and the same points is known, the coordinate transformation $T_{cam_a}^{cam_b}$ can be derived.

Concretely, the checkerboard corners, whose board coordinates are known, are projected into the $uv$ image coordinates through the intrinsic and extrinsic parameters, and PnP with nonlinear optimization yields the rotation and translation $T_{camera}^{chessboard}$ for each camera. Multiple frames are then used to jointly constrain $T_{cam_a}^{cam_b}$.

This is a commonly used method, and OpenCV also provides built-in functions for it (for example `stereoCalibrate`).
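Once PnP gives the board pose in each camera for the same frame, the extrinsic follows by chaining through the board. The sketch assumes the convention that $T_{a}^{b}$ maps coordinates from frame $b$ into frame $a$ (which matches the rotation and translation returned by OpenCV's `solvePnP`), so $T_{cam_a}^{cam_b} = T_{cam_a}^{chessboard}\,(T_{cam_b}^{chessboard})^{-1}$. The list-based 4x4 helpers are hypothetical; a real implementation would use a matrix library and refine the result over many frames.

```python
import math


def rot_z(a):
    """3x3 rotation about the z axis."""
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def make_T(R, t):
    """4x4 homogeneous transform from a 3x3 rotation R and translation t."""
    return [R[0] + [t[0]], R[1] + [t[1]], R[2] + [t[2]], [0.0, 0.0, 0.0, 1.0]]


def mul(A, B):
    """4x4 matrix product A * B."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def inv(T):
    """Inverse of a rigid transform: [R | t]^-1 = [R^T | -R^T t]."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [T[i][3] for i in range(3)]
    return make_T(Rt, [-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3)])


def cam_a_from_cam_b(T_a_board, T_b_board):
    """T_{cam_a}^{cam_b} = T_{cam_a}^{board} * (T_{cam_b}^{board})^-1 for one frame."""
    return mul(T_a_board, inv(T_b_board))
```

Each frame gives one such estimate; with noisy detections, the per-frame estimates serve as the initial value for a joint nonlinear refinement over all frames.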

Reference link:

https://github.com/sourishg/stereo-calibration

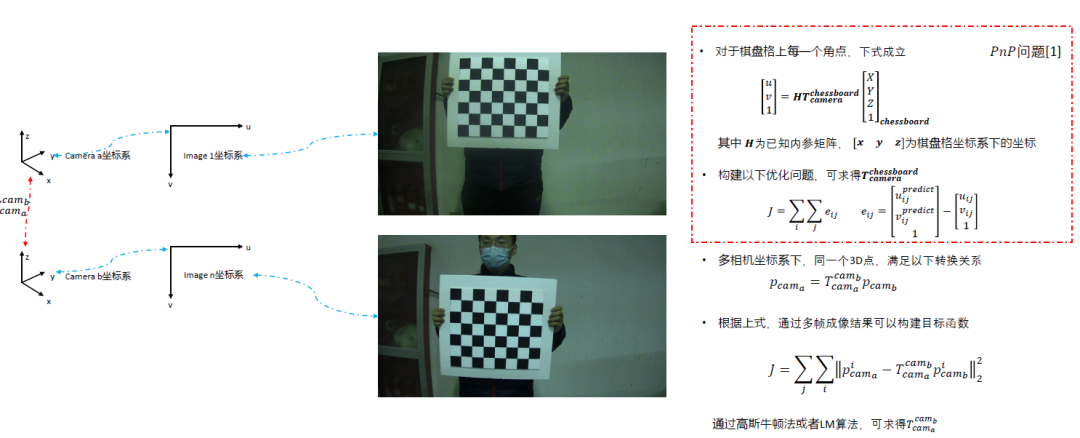

1.3 Lidar to Camera Extrinsic Calibration

The calibration between Lidar and camera is one of the most important parts of autonomous driving. It mainly involves estimating the 3D positions of target features from the Lidar points and estimating the 3D positions of the same features from the camera pixels.

The core idea is still to obtain corresponding 3D points. However, because the Lidar scans the board along sparse scan lines, corner points extracted directly from the point cloud can contain errors, which leads to inaccurate results.

Different Lidars yield different corner extraction results, so corner points can instead be obtained by fitting. For instance, RANSAC can be used to extract the region of the calibration board in space and obtain an initial displacement; then several protrusions on the board are identified, their center points are extracted through segmentation and clustering, and these points are matched to their nearest counterparts to obtain $T_{lidar}^{chessboard}$.

The camera, in turn, estimates $T_{camera}^{chessboard}$ from the same calibration board, and chaining the two transforms through the $chessboard$ frame yields the transform (TF) between the Lidar and the camera.
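The first step, locating the board plane with RANSAC, can be sketched in pure Python as follows (in practice PCL's `SACSegmentation` with a plane model would typically be used). The 0.02 m inlier threshold, the iteration count and the synthetic board placed at x = 3 m are assumptions for illustration.

```python
import math
import random


def plane_from_points(p1, p2, p3):
    """Plane (n, d) with unit normal n and n.p + d = 0; None if points are collinear."""
    u = [p2[i] - p1[i] for i in range(3)]
    v = [p3[i] - p1[i] for i in range(3)]
    n = [u[1] * v[2] - u[2] * v[1], u[2] * v[0] - u[0] * v[2], u[0] * v[1] - u[1] * v[0]]
    norm = math.sqrt(sum(c * c for c in n))
    if norm < 1e-9:
        return None
    n = [c / norm for c in n]
    return n, -sum(n[i] * p1[i] for i in range(3))


def ransac_plane(points, threshold=0.02, iterations=200, seed=0):
    """Return (plane, inlier_indices) for the plane supported by the most points."""
    rng = random.Random(seed)
    best_plane, best_inliers = None, []
    for _ in range(iterations):
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue
        n, d = plane
        inliers = [i for i, p in enumerate(points)
                   if abs(sum(n[k] * p[k] for k in range(3)) + d) < threshold]
        if len(inliers) > len(best_inliers):
            best_plane, best_inliers = plane, inliers
    return best_plane, best_inliers
```

The inliers give the board region and an initial displacement; the subsequent segmentation and clustering of the protrusions would operate only on these points.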
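After chaining the two board poses, a common sanity check is to project Lidar points into the image with the estimated extrinsic and the camera intrinsics and verify that they land on the corresponding pixels. A minimal pinhole projection sketch, assuming no lens distortion, a rotation `R` and translation `t` that map Lidar coordinates into the camera frame (i.e. $T_{camera}^{lidar}$, camera z axis pointing forward), and hypothetical intrinsics `fx, fy, cx, cy`:

```python
def project_lidar_point(p_lidar, R, t, fx, fy, cx, cy):
    """Project a 3D Lidar point to pixel (u, v); None if it is behind the camera."""
    # p_cam = R * p_lidar + t
    x, y, z = (sum(R[i][k] * p_lidar[k] for k in range(3)) + t[i] for i in range(3))
    if z <= 0.0:
        return None
    return fx * x / z + cx, fy * y / z + cy


# Lidar frame: x forward, y left, z up. Camera frame: x right, y down, z forward.
R_cam_lidar = [[0.0, -1.0, 0.0], [0.0, 0.0, -1.0], [1.0, 0.0, 0.0]]
print(project_lidar_point((10.0, 1.0, 0.5), R_cam_lidar, [0.0, 0.0, 0.0], 500.0, 500.0, 320.0, 240.0))
```

Overlaying the projected points on the image (for example colouring them by depth) makes rotation errors visible as a consistent shift of object edges.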

Reference links:

1. 2D calibration board:

https://github.com/TurtleZhong/camera_lidar_calibration_v2

2. 3D calibration board:

https://github.com/heethesh/lidar_camera_calibration

3. Hollow calibration board:

https://github.com/beltransen/velo2cam_calibration

4. Sphere calibration:

https://github.com/545907361/lidar_camera_offline_calibration

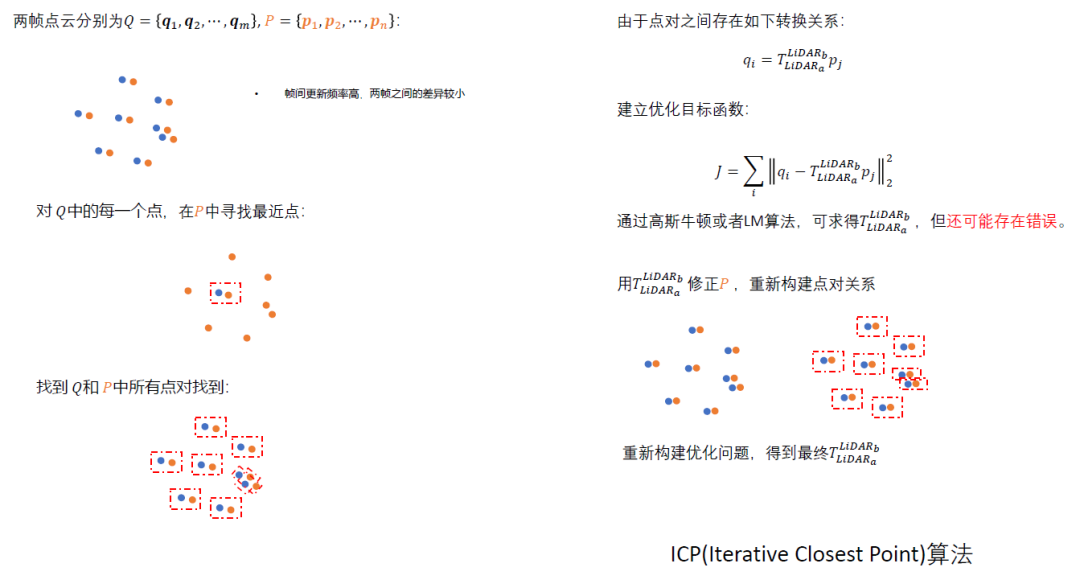

1.4 Lidar to Lidar Extrinsic Calibration

The calibration between Lidar and Lidar is essentially the registration of two point clouds, which is generally done with the PCL library (for example with ICP or NDT). This has been detailed in previous blog posts, so it will not be elaborated here.
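For reference, the core loop of point-to-point ICP (which PCL provides as `pcl::IterativeClosestPoint`) can be sketched in 2D: alternate nearest-neighbour matching with the closed-form rigid alignment of the matched pairs. This is a simplified illustration with brute-force matching and no outlier rejection, not PCL's implementation; function names are hypothetical.

```python
import math


def transform(points, th, tx, ty):
    """Apply the 2D rigid transform (rotation th, translation tx, ty) to points."""
    c, s = math.cos(th), math.sin(th)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def best_rigid_2d(src, dst):
    """Closed-form (th, tx, ty) minimizing sum |R(th) src_i + t - dst_i|^2."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    dot_sum = cross_sum = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - sx, py - sy, qx - dx, qy - dy
        dot_sum += ax * bx + ay * by    # coefficient of cos(th)
        cross_sum += ax * by - ay * bx  # coefficient of sin(th)
    th = math.atan2(cross_sum, dot_sum)
    c, s = math.cos(th), math.sin(th)
    return th, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def icp_2d(source, target, iterations=20):
    """Point-to-point ICP; returns (th, tx, ty) mapping source onto target."""
    th = tx = ty = 0.0
    for _ in range(iterations):
        moved = transform(source, th, tx, ty)
        # Brute-force nearest neighbours; a k-d tree would be used for real scans.
        matches = [min(target, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
                   for p in moved]
        th, tx, ty = best_rigid_2d(source, matches)
    return th, tx, ty
```

Like PCL's ICP, this only converges to the right answer from a reasonable initial guess, which is why a rough manual extrinsic is usually provided first.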

Reference links:

2D Lidar:

https://hermit.blog.csdn.net/article/details/120726065

https://github.com/ram-lab/lidar_appearance_calibration

3D Lidar:

https://github.com/AbangLZU/multi_lidar_calibration

https://github.com/yinwu33/multi_lidar_calibration

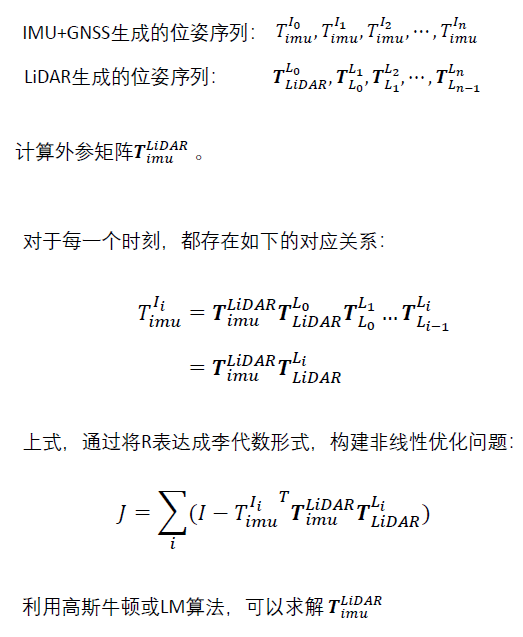

1.5 Lidar to IMU/GNSS Extrinsic Calibration

The extrinsic calibration of Lidar and IMU/GNSS is similar to the IMU/GNSS-to-vehicle-body calibration: the relative motion of each sensor is estimated, and the two motion sequences are aligned to solve for the extrinsics.

In recent years, several good open-source solutions have also appeared that we can refer to and choose from:
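One property that makes relative motion useful here: the rotation angle of a relative motion does not depend on the mounting extrinsic (the two relative rotations are conjugates of each other), so the per-interval rotation angles from Lidar odometry and from the IMU should agree. The sketch below, assuming unit quaternions in (w, x, y, z) order and hypothetical helper names, computes those angles; comparing the two sequences is a cheap check of the data and of the time alignment before solving for the full extrinsic.

```python
import math


def quat_mul(a, b):
    """Hamilton product of quaternions in (w, x, y, z) order."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (aw * bw - ax * bx - ay * by - az * bz,
            aw * bx + ax * bw + ay * bz - az * by,
            aw * by - ax * bz + ay * bw + az * bx,
            aw * bz + ax * by - ay * bx + az * bw)


def axis_angle(axis, angle):
    """Unit quaternion for a rotation of `angle` rad about a unit `axis`."""
    s = math.sin(angle / 2.0)
    return (math.cos(angle / 2.0), axis[0] * s, axis[1] * s, axis[2] * s)


def relative_angle(q0, q1):
    """Angle (rad) of the relative rotation q0^-1 * q1 for unit quaternions."""
    # The scalar part of conj(q0) * q1 equals the 4D dot product of q0 and q1.
    dot = abs(sum(a * b for a, b in zip(q0, q1)))
    return 2.0 * math.acos(min(1.0, dot))


def relative_angles(quats):
    """Per-interval rotation angles of an orientation sequence."""
    return [relative_angle(a, b) for a, b in zip(quats, quats[1:])]


# Synthetic check: the IMU is mounted rotated 0.5 rad about x relative to the Lidar.
q_lidar = [axis_angle((0.0, 0.0, 1.0), a) for a in (0.0, 0.1, 0.3)]
q_mount = axis_angle((1.0, 0.0, 0.0), 0.5)
q_imu = [quat_mul(q, q_mount) for q in q_lidar]
print(relative_angles(q_lidar), relative_angles(q_imu))
```

Both sequences come out as approximately [0.1, 0.2] even though the IMU orientation differs by the mounting rotation.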

Reference links:

https://github.com/APRIL-ZJU/lidar_IMU_calib

https://github.com/chennuo0125-HIT/lidar_imu_calib

https://github.com/FENGChenxi0823/SensorCalibration

1.6 Lidar and Radar Extrinsic Calibration

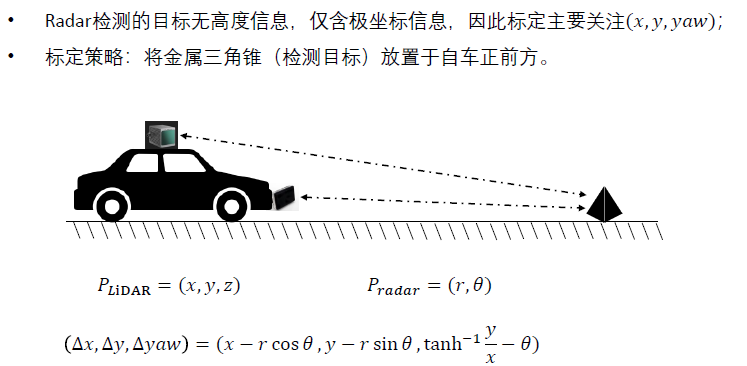

Unlike the other sensors, Radar only provides polar coordinate information (range and azimuth) and lacks height information. Therefore, in many cases, the calibration of Radar and Lidar only requires estimating $x, y, yaw$.

Radar responds strongly to triangular-pyramid (trihedral corner reflector) targets, which leads to more accurate results.

Point cloud registration methods can also be used to perform the calibration.
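The planar parameterization can be sketched as follows: radar detections in polar form are converted to Cartesian coordinates and mapped into the Lidar's horizontal plane with a candidate extrinsic $(x, y, yaw)$, and the mean distance to the same reflectors measured by the Lidar scores that candidate. Azimuth measured counterclockwise from the radar's forward axis, known reflector correspondences and the function names are assumptions for illustration.

```python
import math


def radar_to_lidar(detections, x, y, yaw):
    """Map radar detections (range_m, azimuth_rad) into the Lidar's horizontal plane.

    (x, y, yaw) is the planar extrinsic: radar origin and heading in the Lidar frame.
    Height is ignored because the radar does not measure it.
    """
    c, s = math.cos(yaw), math.sin(yaw)
    out = []
    for r, az in detections:
        px, py = r * math.cos(az), r * math.sin(az)  # polar -> radar Cartesian
        out.append((c * px - s * py + x, s * px + c * py + y))
    return out


def mean_residual(radar_pts, lidar_pts):
    """Mean distance between matched reflector positions (same ordering assumed)."""
    return sum(math.dist(a, b) for a, b in zip(radar_pts, lidar_pts)) / len(radar_pts)
```

Minimizing `mean_residual` over $(x, y, yaw)$, by a grid search or a small least-squares solver, gives the three extrinsic parameters the section describes.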

Reference links:

https://github.com/keenan-burnett/radar_to_lidar_calib

https://github.com/gloryhry/radar_lidar_static_calibration

1.7 Data Synchronization

Data synchronization is a necessary step after the extrinsic calibration of all sensors. The author has written several articles on this topic (https://hermit.blog.csdn.net/article/details/120489694), and an open-source solution (https://github.com/lovelyyoshino/sync_gps_lidar_imu_cam) is also included here.
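A basic building block of software synchronization is pairing each frame of a reference sensor with the nearest-in-time message of another sensor and rejecting pairs whose gap exceeds a tolerance (ROS `message_filters` offers an approximate-time policy for a similar purpose). A minimal sketch with hypothetical names, assuming both timestamp lists are sorted and already on a common clock:

```python
import bisect


def match_nearest(ref_stamps, other_stamps, tolerance):
    """Pair each reference stamp with the nearest stamp of another sorted stream.

    Returns (i, j) index pairs; references without a neighbour within tolerance are dropped.
    """
    pairs = []
    for i, t in enumerate(ref_stamps):
        k = bisect.bisect_left(other_stamps, t)
        best = None
        for j in (k - 1, k):  # the nearest stamp is just before or just after t
            if 0 <= j < len(other_stamps):
                if best is None or abs(other_stamps[j] - t) < abs(other_stamps[best] - t):
                    best = j
        if best is not None and abs(other_stamps[best] - t) <= tolerance:
            pairs.append((i, best))
    return pairs


lidar = [0.0, 0.1, 0.2, 0.3]
camera = [0.005, 0.038, 0.071, 0.104, 0.137, 0.17, 0.203, 0.5]
print(match_nearest(lidar, camera, tolerance=0.01))
```

Here the last Lidar frame is dropped because no camera frame lies within 10 ms of it; hardware triggering or PTP time sync reduces how often this happens.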

2. Online Extrinsic Calibration

Online calibration dynamically corrects the relative pose parameters between sensors while the vehicle is running. Unlike offline calibration, it cannot rely on a prepared scene (such as calibration boards), which makes it more challenging. It is needed because the mounting positions of sensors may shift due to vibration or external collisions during operation, and it can also trigger an alarm when the parameters become abnormal.
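The alarm behaviour mentioned above could look like the following sketch, which compares an online estimate of a planar extrinsic $(x, y, yaw)$ with its nominal offline value. The planar parameterization, the function names and the thresholds (5 cm, 0.5 degrees) are assumptions for illustration, not values from the article.

```python
import math


def extrinsic_deviation(nominal, current):
    """Translation (m) and yaw (rad) difference between planar extrinsics (x, y, yaw)."""
    d_trans = math.hypot(current[0] - nominal[0], current[1] - nominal[1])
    d_yaw = abs(math.atan2(math.sin(current[2] - nominal[2]), math.cos(current[2] - nominal[2])))
    return d_trans, d_yaw


def check_extrinsic(nominal, current, max_trans=0.05, max_yaw=math.radians(0.5)):
    """Return alarm messages; an empty list means the estimate is within thresholds."""
    d_trans, d_yaw = extrinsic_deviation(nominal, current)
    alarms = []
    if d_trans > max_trans:
        alarms.append(f"translation drift {d_trans:.3f} m exceeds {max_trans} m")
    if d_yaw > max_yaw:
        alarms.append(f"yaw drift {math.degrees(d_yaw):.2f} deg exceeds {math.degrees(max_yaw):.2f} deg")
    return alarms
```

In practice the online estimate is noisy, so such a check would usually be applied to a filtered or windowed estimate rather than to single frames.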

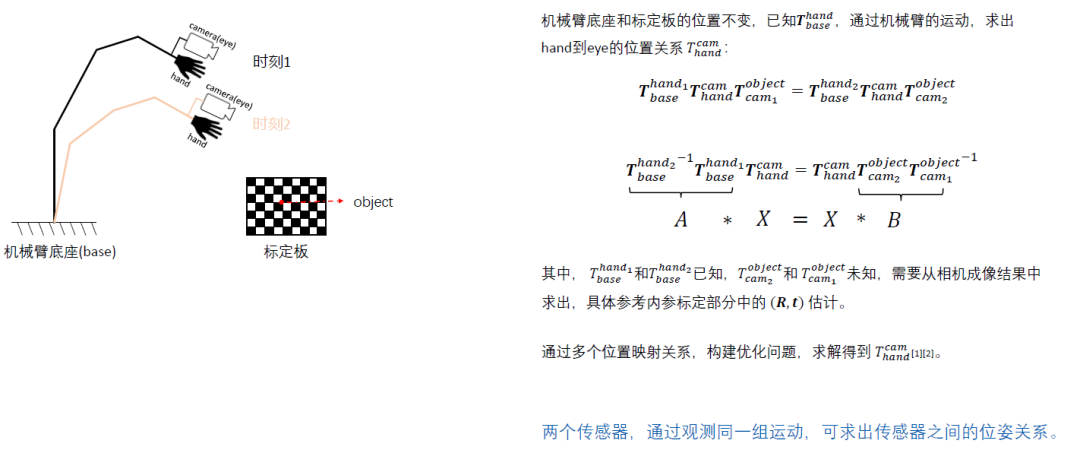

2.1 Hand-Eye Calibration

Hand-eye calibration has also been discussed in the author's previous articles; it estimates the extrinsic $X$ by forming the equation $AX=XB$, where $A$ and $B$ are the relative motions of the two sensors over the same time interval. Here is a hand-eye calibration scheme for Lidar and RTK (https://github.com/liyangSKD/lidar_rtk_calibration).
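For ground vehicles the motion is close to planar, and in that case $AX=XB$ reduces to a small linear problem: planar rotations commute, so only the translation part $(R_A - I)\,t_X - R_X t_B = -t_A$ constrains $X$, and it is linear in $(t_x, t_y, \cos\theta, \sin\theta)$. A minimal pure-Python sketch under that planar assumption, with poses as `(theta, x, y)` and hypothetical helper names; it is not the method of the linked repository, and pure planar motion leaves the vertical offset unobservable.

```python
import math


def compose(p, q):
    """SE(2) product p * q for poses (theta, x, y)."""
    c, s = math.cos(p[0]), math.sin(p[0])
    return (p[0] + q[0], p[1] + c * q[1] - s * q[2], p[2] + s * q[1] + c * q[2])


def inverse(p):
    """Inverse of an SE(2) pose (theta, x, y)."""
    c, s = math.cos(p[0]), math.sin(p[0])
    return (-p[0], -(c * p[1] + s * p[2]), -(-s * p[1] + c * p[2]))


def solve(M, b):
    """Gauss-Jordan elimination with partial pivoting for a small square system."""
    n = len(b)
    A = [row[:] + [b[i]] for i, row in enumerate(M)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[piv] = A[piv], A[col]
        for r in range(n):
            if r != col:
                f = A[r][col] / A[col][col]
                A[r] = [a - f * p for a, p in zip(A[r], A[col])]
    return [A[i][n] / A[i][i] for i in range(n)]


def hand_eye_planar(pairs):
    """Solve A_i X = X B_i for planar X = (theta, x, y) by linear least squares.

    Unknowns u = [x, y, cos(theta), sin(theta)]; needs at least two motions
    with different, non-zero rotations.
    """
    J, rhs = [], []
    for (ta, ax, ay), (_, bx, by) in pairs:
        ca, sa = math.cos(ta), math.sin(ta)
        J.append([ca - 1.0, -sa, -bx, by])
        rhs.append(-ax)
        J.append([sa, ca - 1.0, -by, -bx])
        rhs.append(-ay)
    # Normal equations J^T J u = J^T rhs.
    N = [[sum(row[i] * row[j] for row in J) for j in range(4)] for i in range(4)]
    g = [sum(row[i] * ri for row, ri in zip(J, rhs)) for i in range(4)]
    x, y, c, s = solve(N, g)
    return math.atan2(s, c), x, y


# Synthetic check: build B_i = X^-1 A_i X from a known extrinsic and recover it.
X_true = (0.3, 1.0, -0.5)
motions = [(0.4, 2.0, 0.5), (-0.7, 1.0, 1.0), (1.2, -0.5, 3.0)]
pairs = [(A, compose(compose(inverse(X_true), A), X_true)) for A in motions]
print(hand_eye_planar(pairs))  # should be close to X_true
```

With noisy data, the recovered $(\cos\theta, \sin\theta)$ will not have unit norm exactly; taking `atan2` normalizes it, and a final nonlinear refinement can follow.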

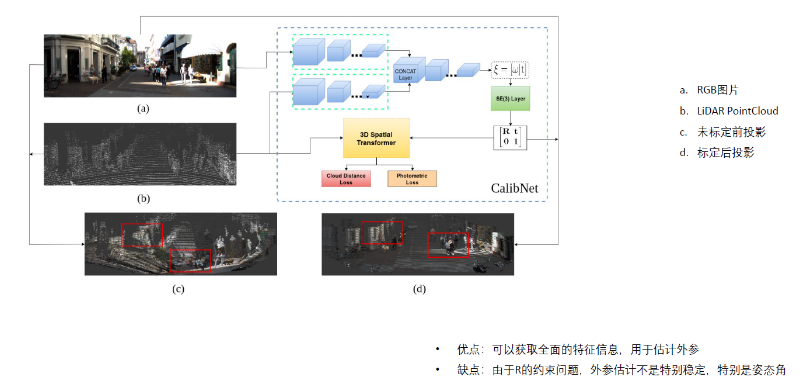

2.2 Deep Learning Methods

Deep learning methods are expected to become a future trend: a network takes the sensor inputs and outputs the extrinsics that give the optimal projection. The author has not studied them in depth yet and will discuss them in detail when time permits.

https://github.com/gogojjh/M-LOAM

https://github.com/KleinYuan/RGGNet