1. CPU Performance and Architecture Analysis

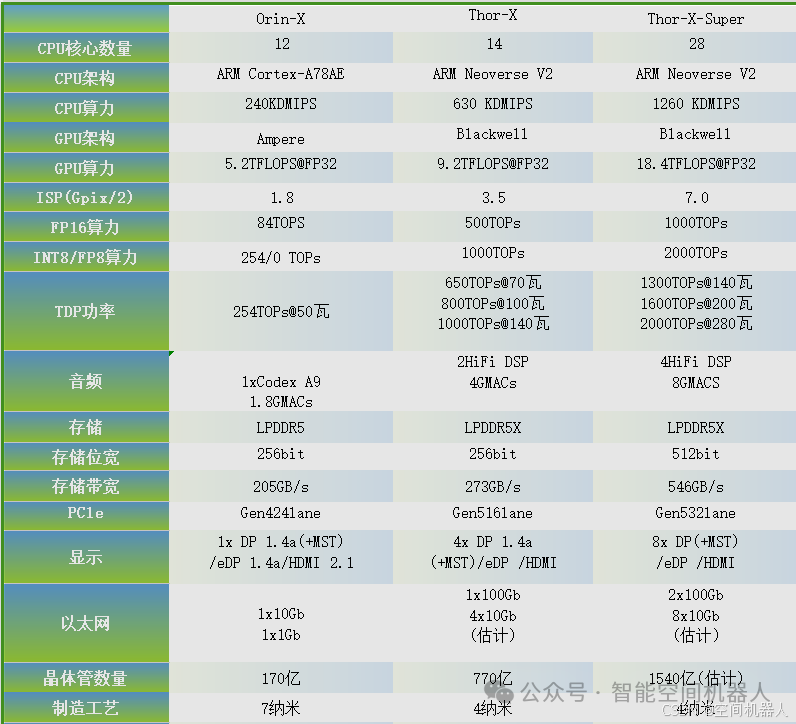

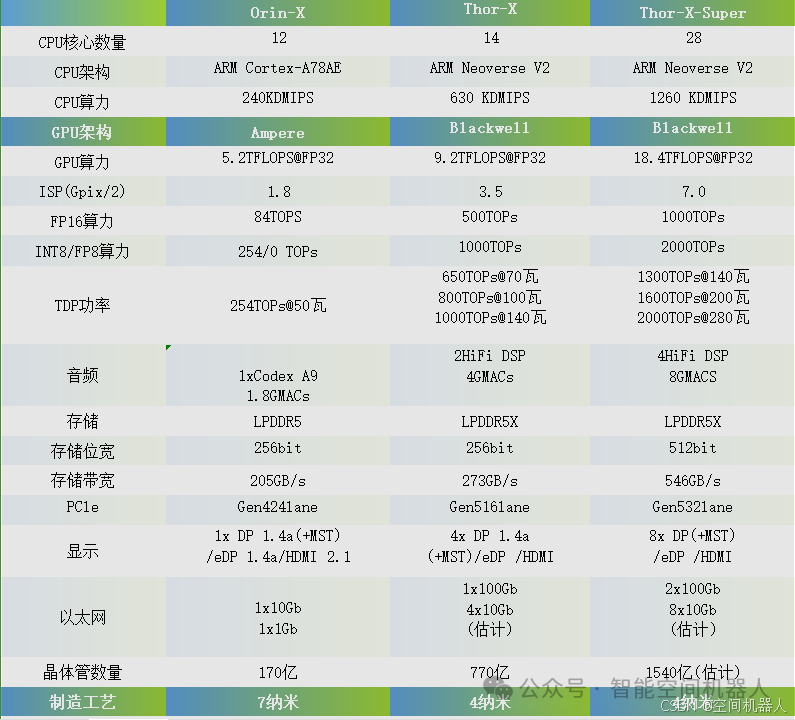

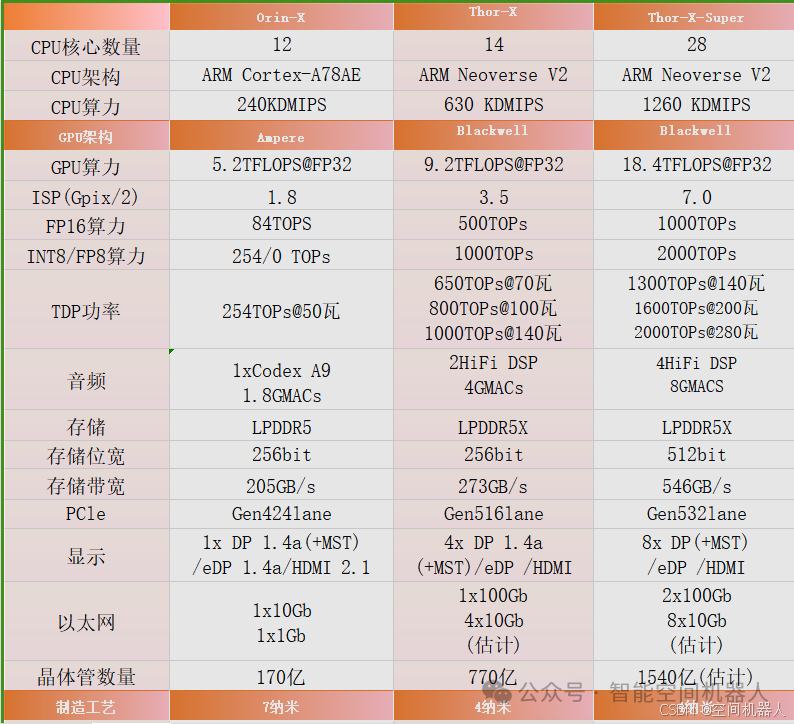

According to the tables above, the three chips use different CPU architectures and core counts:

- Orin-X: 12 cores, ARM Cortex-A78AE architecture, 240 KDMIPS.
- Thor-X: 14 cores, ARM Neoverse V2 architecture, 630 KDMIPS.
- Thor-X-Super: 28 cores, ARM Neoverse V2 architecture, improved to 1260 KDMIPS.

Technical Features Analysis

Cortex-A78AE Architecture

- Cortex-A78AE is designed specifically for automotive electronics with high safety requirements. It supports a lock-step execution mode, in which paired cores execute the same instructions and their outputs are compared to detect faults.
- Compared with standard Cortex-A cores, the A78AE places greater emphasis on safety and deterministic task processing.
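As a software analogy of lock-step execution, the sketch below runs the same computation on a "main" and a "checker" core and compares every result, raising an error on mismatch. It only illustrates the idea: on the A78AE the pairing and comparison happen in hardware, and the names `run_lockstep`, `LockstepFault`, and `brake_command` are hypothetical.

```python
from typing import Callable, List, Optional


class LockstepFault(Exception):
    """Raised when the two redundant cores produce different results."""


def run_lockstep(step: Callable[[int], int], inputs: List[int],
                 flip_bit_at: Optional[int] = None) -> List[int]:
    """Execute `step` on a main core and a checker core, comparing every result.

    `flip_bit_at` optionally injects a single-bit error into the checker
    core's result at that cycle, mimicking a transient hardware fault.
    """
    outputs: List[int] = []
    for cycle, value in enumerate(inputs):
        main_result = step(value)       # main core
        checker_result = step(value)    # redundant checker core, same input
        if cycle == flip_bit_at:
            checker_result ^= 1         # simulated bit flip
        if main_result != checker_result:
            # A real design would signal a safety fault to the system here
            raise LockstepFault(f"mismatch at cycle {cycle}")
        outputs.append(main_result)
    return outputs


def brake_command(speed: int) -> int:
    """Hypothetical control function: braking level proportional to speed."""
    return speed * 3 // 2


print(run_lockstep(brake_command, [10, 20, 30]))   # [15, 30, 45]
try:
    run_lockstep(brake_command, [10, 20, 30], flip_bit_at=1)
except LockstepFault as err:
    print("fault detected:", err)                  # fault detected: mismatch at cycle 1
```

The comparison only detects a fault; deciding what to do next (reset, fall back to a safe state) is left to the system's safety mechanisms.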

Neoverse V2 Architecture

- Neoverse V2 is optimized for data centers and high-performance computing, offering greater parallel processing capability.
- Compared with the A78AE, Neoverse V2 offers higher per-core performance and better performance per watt.
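The per-core difference can be estimated from the totals listed above by dividing each chip's KDMIPS by its core count. This is a rough sketch: it assumes the quoted totals are evenly split across cores, and the tables do not give clock speeds.

```python
# (total KDMIPS, CPU core count) as listed for each chip in this section
CHIPS = {
    "Orin-X": (240, 12),
    "Thor-X": (630, 14),
    "Thor-X-Super": (1260, 28),
}


def kdmips_per_core(total_kdmips: float, cores: int) -> float:
    """Average Dhrystone throughput per core, in KDMIPS."""
    return total_kdmips / cores


for name, (kdmips, cores) in CHIPS.items():
    print(f"{name}: {kdmips_per_core(kdmips, cores):.1f} KDMIPS per core")
# Orin-X: 20.0, Thor-X: 45.0, Thor-X-Super: 45.0
```

Thor-X and Thor-X-Super both come out at 45 KDMIPS per core, about 2.25 times Orin-X's 20. Thor-X-Super's doubled total therefore comes from its doubled core count rather than from faster cores.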

Core Count and Performance Comparison

Adding cores is the most direct way to raise computing power, but memory bandwidth and task scheduling can become bottlenecks.

- Orin-X is suitable for lower-power scenarios, such as ADAS (Advanced Driver Assistance Systems).
- Thor-X is suitable for multi-task processing environments, such as in-vehicle domain controllers.
- Thor-X-Super is more suitable for complex scenarios, such as centralized computing in autonomous driving systems.
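The source does not use Amdahl's law. It is brought in here as a standard model showing why adding cores gives diminishing returns when part of a workload stays serial. The 90% parallel fraction below is a hypothetical value.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup on `cores` cores when only `parallel_fraction` of the
    work can run in parallel (Amdahl's law). Memory-bandwidth contention and
    scheduling overhead are ignored, so real scaling is worse than this."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if cores < 1:
        raise ValueError("cores must be >= 1")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / cores)


# Hypothetical workload in which 90% of the work parallelizes
for n in (12, 14, 28):
    print(f"{n} cores: {amdahl_speedup(0.9, n):.2f}x")
```

With 90% of the work parallel, going from 14 to 28 cores raises the ideal speedup only from about 6.1x to about 7.6x. This is why memory bandwidth and scheduling deserve attention as core counts grow.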

How to Choose

- If real-time behavior and high safety are the priority, Orin-X is a good choice.
- If tasks are complex and performance requirements are stringent, Thor-X or Thor-X-Super is more suitable.
- If the budget allows, prioritize Thor-X-Super: its high core count and powerful Neoverse V2 cores significantly increase system redundancy and processing capability.

Current Bottlenecks and Improvement Directions

In complex computing scenarios, the main bottlenecks of current ARM-based designs are:

- Memory bandwidth limitations: as core counts increase, the memory subsystem becomes an increasingly significant bottleneck.
  - Improvement direction: adopt wider memory buses (such as the 512-bit bus of Thor-X-Super) and high-speed cache coherence protocols.
- Power consumption optimization difficulty
  - Improvement direction: introduce more efficient power management mechanisms, such as DVFS (Dynamic Voltage and Frequency Scaling).
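DVFS saves power because dynamic CMOS power is commonly modeled as P = α·C·V²·f. Lowering voltage together with frequency therefore cuts power faster than it cuts performance. The activity factor, capacitance, and operating points below are hypothetical values chosen for illustration, and static (leakage) power is ignored.

```python
def dynamic_power(activity: float, capacitance_f: float,
                  voltage_v: float, freq_hz: float) -> float:
    """Switching power in watts: P = alpha * C * V^2 * f."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


# Hypothetical operating points for one CPU cluster
high = dynamic_power(0.2, 20e-9, 0.90, 2.0e9)   # nominal: 0.90 V, 2.0 GHz
low = dynamic_power(0.2, 20e-9, 0.72, 1.6e9)    # DVFS step: V and f both scaled by 0.8

print(f"high {high:.2f} W, low {low:.2f} W, ratio {low / high:.3f}")
```

Running 20% slower at 20% lower voltage uses about half the dynamic power (0.8³ ≈ 0.51). This is why DVFS drops to lower operating points when the load allows.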

2. GPU Performance and Application Scenarios

The GPU parameters are:

- Orin-X: Ampere architecture, 5.2 TFLOPS (FP32).
- Thor-X: Blackwell architecture, 9.2 TFLOPS (FP32).
- Thor-X-Super: Blackwell architecture, 18.4 TFLOPS (FP32).

Technical Features Analysis

Ampere Architecture

- Ampere is an earlier NVIDIA GPU architecture that handles graphics rendering and, through its Tensor Cores, AI inference.
- Its FP32 computing power is lower than Blackwell's, making it suitable for low- to medium-complexity tasks.

Blackwell Architecture

- Blackwell is a newer generation that significantly improves energy efficiency and AI computing performance over Ampere.
- It offers higher INT8/FP8 throughput, making it better suited to deep learning inference in autonomous driving.

Application Scenarios

- Orin-X: suitable for medium-complexity AI tasks, such as driver monitoring and road sign recognition.
- Thor-X: better suited to multi-camera scenarios, such as multi-target tracking and 3D environmental perception.
- Thor-X-Super: suitable for fully autonomous driving systems, handling high-complexity AI tasks such as multi-modal fusion and real-time decision-making.

Current Bottlenecks and Improvement Directions

- Insufficient memory bandwidth: the GPU's computing capability must be fed by high-speed memory.
  - Improvement direction: use HBM (High Bandwidth Memory) or further increase the LPDDR5X data rate.
- Computing power utilization
  - Improvement direction: optimize software algorithms to make full use of hardware resources.
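A simple roofline model makes the link between bandwidth and utilization concrete. This is a standard analysis technique; the source does not use it. In the model, a kernel's attainable throughput is the smaller of two values: peak compute, and arithmetic intensity (FLOPs per byte of DRAM traffic) times bandwidth. The sketch uses the FP32 and bandwidth figures listed in this document. The 10 FLOP/byte kernel is hypothetical, and the sketch ignores that DRAM bandwidth is shared with the CPU and other engines.

```python
# (peak FP32 TFLOPS, memory bandwidth GB/s) as listed in this document
CHIPS = {
    "Orin-X": (5.2, 205.0),
    "Thor-X": (9.2, 273.0),
    "Thor-X-Super": (18.4, 546.0),
}


def ridge_point(peak_tflops: float, bandwidth_gbs: float) -> float:
    """Arithmetic intensity (FLOP/byte) above which a kernel is compute-bound."""
    return (peak_tflops * 1e12) / (bandwidth_gbs * 1e9)


def attainable_tflops(peak_tflops: float, bandwidth_gbs: float,
                      intensity_flop_per_byte: float) -> float:
    """Roofline estimate: min(peak compute, intensity * bandwidth), in TFLOPS."""
    memory_bound = intensity_flop_per_byte * bandwidth_gbs * 1e9 / 1e12
    return min(peak_tflops, memory_bound)


for name, (tflops, bw) in CHIPS.items():
    # Hypothetical kernel performing 10 FLOPs per byte read from DRAM
    print(f"{name}: ridge {ridge_point(tflops, bw):.1f} FLOP/B, "
          f"attainable {attainable_tflops(tflops, bw, 10.0):.2f} TFLOPS")
```

Orin-X needs about 25 FLOP/byte, and both Thor parts about 34 FLOP/byte, before the FP32 units rather than memory become the limit. A 10 FLOP/byte kernel therefore reaches only about 40% of peak on Orin-X and about 30% on Thor.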

3. Storage System Design and Selection Recommendations

The memory parameters are:

- Orin-X: LPDDR5, 256-bit bus, 205 GB/s bandwidth.
- Thor-X: LPDDR5X, 256-bit bus, 273 GB/s bandwidth.
- Thor-X-Super: LPDDR5X, 512-bit bus, 546 GB/s bandwidth.

Technical Features Analysis

LPDDR5 vs LPDDR5X

- LPDDR5X builds on LPDDR5 with higher data rates and better power efficiency.
- The bandwidth gain is significant, which especially benefits AI workloads with high data-throughput requirements.

Bit Width and Bandwidth

A wider bus and higher bandwidth are crucial for performance in AI and GPU workloads.

- Thor-X-Super uses a 512-bit bus and reaches 546 GB/s. That is twice the 273 GB/s Thor-X achieves with a 256-bit bus of the same LPDDR5X type, enough to meet high computing power needs.

How to Choose

- Orin-X is suitable for scenarios with low bandwidth requirements, such as single-sensor processing.
- Thor-X is suitable for medium-complexity applications and leaves some bandwidth headroom.
- Thor-X-Super performs best in complex AI tasks, but its cost and power consumption must be weighed.

Current Bottlenecks and Improvement Directions

- Energy efficiency optimization: high-bandwidth designs usually come with high power consumption.
  - Improvement direction: optimize circuit design and adopt more advanced low-power technologies.
- Memory compatibility issues
  - Improvement direction: ensure system stability through simulation and testing.

4. Power Consumption and Thermal Optimization

The TDP (Thermal Design Power) figures are:

- Orin-X: 50 W.
- Thor-X: 70 to 140 W.
- Thor-X-Super: 140 to 280 W.

Power Consumption Design Challenges

As computing power increases, power consumption rises sharply, imposing higher demands on thermal design.

- Thermal design: efficient cooling solutions, such as liquid cooling or heat pipes, are needed.
- Power supply design: high-power chips make the transient response of the power supply harder to manage.
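Computing the junction-to-ambient thermal resistance the cooling path must achieve shows why higher TDP pushes designs toward heat pipes or liquid cooling. The required value is θ_ja = (T_j,max − T_a) / P. The 105 °C junction limit and 65 °C ambient below are hypothetical; real limits come from the chip's datasheet and the vehicle's environment.

```python
def required_theta_ja(power_w: float, t_junction_max_c: float,
                      t_ambient_c: float) -> float:
    """Largest junction-to-ambient thermal resistance (deg C per watt) that
    keeps the die at or below t_junction_max_c when dissipating power_w."""
    if power_w <= 0:
        raise ValueError("power must be positive")
    return (t_junction_max_c - t_ambient_c) / power_w


# Hypothetical limits: 105 C maximum junction, 65 C air around the controller
for tdp in (50, 140, 280):
    print(f"{tdp} W -> {required_theta_ja(tdp, 105.0, 65.0):.3f} C/W")
```

At 50 W the cooling path may have up to 0.8 °C/W. At 280 W it must reach about 0.14 °C/W, more than five times lower, which is where heat pipes or liquid cooling become necessary.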

Improvement Directions

- Introduce advanced power regulation technologies, such as multi-phase power supplies and dynamic voltage adjustment.
- Use high-thermal-conductivity materials to improve cooling efficiency.
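Multi-phase regulators split the core current across several interleaved phases. The sketch below estimates the total current and the number of phases needed for a given per-phase rating. It makes several simplifying assumptions:

- Current is shared ideally between phases, with no conversion losses.
- The whole TDP is drawn from a single core rail, which overstates real per-rail currents.
- The 0.8 V core voltage and 40 A per-phase rating are hypothetical.

```python
import math


def total_current(power_w: float, core_voltage_v: float) -> float:
    """Load current drawn from the regulator: I = P / V."""
    return power_w / core_voltage_v


def phases_needed(power_w: float, core_voltage_v: float,
                  max_amps_per_phase: float) -> int:
    """Smallest phase count keeping every phase within its current rating."""
    return math.ceil(total_current(power_w, core_voltage_v) / max_amps_per_phase)


for tdp in (50, 140, 280):
    n = phases_needed(tdp, 0.8, 40.0)
    per_phase = total_current(tdp, 0.8) / n
    print(f"{tdp} W: {total_current(tdp, 0.8):.1f} A total, {n} phases, {per_phase:.1f} A each")
```

A 280 W load at this voltage draws about 350 A, far more than a single phase handles comfortably. Interleaving the phases also reduces output ripple and helps the regulator respond to fast load steps.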

5. Interface Expansion and System Integration

Interface Expansion Design

Each chip supports a range of high-performance interfaces:

- Orin-X: supports PCIe 4.0, with sufficient bandwidth but a limited number of interfaces.
- Thor-X and Thor-X-Super: support PCIe 5.0, offering higher bandwidth and more interfaces, suitable for applications with large-scale data throughput.

Application Scenario Analysis

- Orin-X: suitable for applications with limited interface expansion, such as processing a single ADAS camera input.
- Thor-X: performs well in vehicle domain controllers, connecting multiple sensors and external storage devices.
- Thor-X-Super: suitable for systems requiring large-scale data exchange, such as fully autonomous driving domain controllers.

Current Bottlenecks and Improvement Directions

- PCIe interface bottlenecks: in high-load scenarios with many devices, congestion on PCIe links may degrade performance.
  - Improvement direction: add lanes or introduce CXL (Compute Express Link) to increase data throughput.
- Compatibility issues
  - Improvement direction: optimize hardware drivers and middleware design.

6. Manufacturing Process and Reliability

Manufacturing Process

These chips are built on advanced process nodes. Moving to a smaller node brings two main benefits:

- Power consumption reduction: smaller process technology significantly reduces dynamic power per operation.
- Performance improvement: higher transistor density allows more computing capability in the same area.

Reliability Design

Automotive-grade chips must meet AEC-Q100 certification standards to ensure stability in harsh environments.

Current Technical Bottlenecks and Improvement Directions

- Thermal reliability: the high transistor density of small process technologies can easily create hotspots.
  - Improvement direction: optimize heat distribution inside and outside the chip through thermal simulation.
- Manufacturing yield
  - Improvement direction: improve yield through chip testing technologies such as Built-In Self-Test (BIST).
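Memory BIST controllers typically run March algorithms over on-chip SRAM. The sketch below models March C-, a standard March test, in software. It runs against a bit-wide memory with an injected stuck-at fault and reports the failing addresses. The `FaultyMemory` class is hypothetical, and real BIST runs in on-chip hardware rather than software.

```python
from typing import List, Optional


class FaultyMemory:
    """Bit-wide memory model with an optional stuck-at fault (illustrative)."""

    def __init__(self, size: int, stuck_addr: Optional[int] = None,
                 stuck_value: int = 0):
        self.cells = [0] * size
        self.stuck_addr = stuck_addr
        self.stuck_value = stuck_value

    def write(self, addr: int, value: int) -> None:
        # A stuck-at cell ignores writes and always holds stuck_value
        self.cells[addr] = self.stuck_value if addr == self.stuck_addr else value

    def read(self, addr: int) -> int:
        return self.cells[addr]


def march_c_minus(mem: FaultyMemory) -> List[int]:
    """Run March C- and return the sorted failing addresses.

    Elements: any(w0); up(r0,w1); up(r1,w0); down(r0,w1); down(r1,w0); any(r0)
    """
    size = len(mem.cells)
    up = range(size)
    down = range(size - 1, -1, -1)
    failures = set()

    def check(addr: int, expected: int) -> None:
        if mem.read(addr) != expected:
            failures.add(addr)

    for a in up:
        mem.write(a, 0)
    for order, expected, new_value in ((up, 0, 1), (up, 1, 0),
                                       (down, 0, 1), (down, 1, 0)):
        for a in order:
            check(a, expected)
            mem.write(a, new_value)
    for a in up:
        check(a, 0)
    return sorted(failures)


print(march_c_minus(FaultyMemory(16)))                              # []
print(march_c_minus(FaultyMemory(16, stuck_addr=3, stuck_value=0)))  # [3]
```

March C- needs 10 operations per cell and detects stuck-at, transition, and many coupling faults. Failing addresses reported this way can drive redundancy repair, which is one way BIST contributes to yield.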

7. Technical Bottlenecks and Future Development Directions

Technical Bottlenecks

- Increasing demand for computing power: AI and autonomous driving keep raising computing requirements, but scaling up a single chip runs into limits.
- Power consumption and thermal management: higher computing power brings higher power consumption, placing greater demands on thermal design.
- System integration complexity: integrating multiple sensors and domains poses challenges for both hardware and software.

Future Development Directions

- Heterogeneous computing: introduce more NPUs (Neural Processing Units) and dedicated AI accelerators to optimize AI task processing.
- 3D packaging technology: increase computing power density through stacked designs.
- Collaboration between edge computing and cloud computing: improve real-time processing and data-handling efficiency.

8. Application Case Analysis

Orin-X Actual Applications

- Used in L2/L3 ADAS systems.
- Handles single-camera perception tasks, such as lane detection and obstacle recognition.

Thor-X Actual Applications

- Used in multi-domain controllers, such as integrated driving and parking.
- Processes multi-camera and radar data, supporting vehicle environmental perception and path planning.

Thor-X-Super Actual Applications

- Integrated into L4/L5 fully autonomous vehicles.
- Handles multi-sensor fusion, high-precision map matching, and real-time decision-making.

Conclusion

Orin-X, Thor-X, and Thor-X-Super target automotive applications of different complexity, and their performance, architecture, and interface designs reflect NVIDIA's advanced technology in the automotive chip field. Selection should weigh computing power, bandwidth, power consumption, and cost against actual application needs. Future development should continue to focus on memory bandwidth optimization, heterogeneous computing architectures, and system reliability.

1. Detailed Introduction to ARM Cortex-A78AE and ARM Neoverse V2

Cortex-A78AE

- Architecture features:
  - Designed specifically for automotive electronics and high-safety scenarios.
  - Supports lock-step execution, which helps meet functional safety requirements (such as the ISO 26262 standard).
  - Designed for deterministic, predictable task execution.
- Application scenarios:
  - Autonomous driving domain controllers.
  - Real-time decision modules in ADAS systems.
  - Other workloads that require reliable, low-latency task execution.

Neoverse V2

- Architecture features:
  - Designed for data centers and high-performance computing.
  - Provides higher parallel computing capability and better power efficiency per unit of computing power.
  - Used in platforms that support next-generation interconnects such as PCIe Gen5 and CXL.
- Application scenarios:
  - Deep learning inference in autonomous driving.
  - High-load environmental perception and multi-sensor data fusion.
  - High-performance edge computing.

2. CPU Computing Power and Applications

- Computing power:
  - KDMIPS means thousands of DMIPS (Dhrystone MIPS). It is CPU integer throughput measured with the Dhrystone benchmark: 1 KDMIPS = 1,000 DMIPS.
  - Orin-X (240 KDMIPS) is suitable for medium- to low-load tasks.
  - Thor-X (630 KDMIPS) supports complex environmental perception and multi-task scheduling.
  - Thor-X-Super (1260 KDMIPS) is suitable for high-density data processing and centralized computing.
- Application scenarios:
  - 240 KDMIPS: single-sensor processing (e.g., camera or radar data preprocessing).
  - 630 KDMIPS: multi-task operation, such as real-time map reconstruction.
  - 1260 KDMIPS: deep learning and decision-making computations in autonomous driving systems.

3. GPU Architecture Comparison: Ampere vs Blackwell

Ampere

- Released in 2020. The data-center Ampere GPU (GA100) uses TSMC 7nm, while other Ampere chips, including the Orin SoC, use Samsung 8nm.
- Features:
  - Optimized for graphics rendering performance.
  - FP32 computing power of 5.2 TFLOPS in Orin-X. AI performance is weaker than Blackwell's, and its Tensor Cores lack FP8 support.
  - Suitable for traditional graphics tasks and lightweight AI inference.
- Application scenarios:
  - Medium- to low-complexity AI tasks (e.g., driver monitoring, lane detection).

Blackwell

- Released in 2024, using TSMC 4nm-class technology.
- Features:
  - Significantly improves AI inference performance, supporting INT8 and FP8 precision.
  - Significantly improves energy efficiency (lower power per unit of computing power).
  - Higher parallel computing capability, supporting real-time multi-modal fusion.
- Application scenarios:
  - High-complexity autonomous driving tasks (e.g., 3D environmental perception, multi-modal data fusion).

4. GPU Computing Power vs ISP Comparison

- TFLOPS computing power (FP32, single-precision floating-point operations): trillions of floating-point operations executed per second.
  - 5.2 TFLOPS: medium-complexity inference.
  - 9.2 TFLOPS: supports synchronous multi-camera computing.
  - 18.4 TFLOPS: high-complexity fully autonomous driving systems.
- ISP (Image Signal Processor) capability:
  - 1.8 TOPS: suitable for single-camera image processing.
  - 3.5 TOPS: supports multi-sensor image fusion.
  - 7.0 TOPS: real-time high-resolution video processing.

5. Precision Analysis: FP16, INT8, FP8

- FP16 (half-precision floating point):
  - Higher precision than INT8 or FP8, usable for both training and inference.
  - Commonly used in image processing and other precision-sensitive tasks.
- INT8 (8-bit integer):
  - Excellent performance per watt, suitable for inference after quantization.
  - INT8 computing power is commonly used for object detection in autonomous driving.
- FP8 (8-bit floating point):
  - An emerging format that halves memory and bandwidth per value compared with FP16.
  - More efficient for AI edge computing.
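The sketch below shows the precision trade-off by rounding a value to FP16 and by quantizing a small tensor to INT8. It uses Python's `struct` `'e'` format for IEEE half precision. For INT8 it uses a symmetric per-tensor scheme, which is one common choice among several. The sample weights are made up, and FP8 is omitted because the standard library has no FP8 type.

```python
import struct
from typing import List, Tuple


def to_fp16(x: float) -> float:
    """Round a Python float to IEEE 754 half precision and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor INT8 quantization: q = round(x / scale),
    with scale chosen so the largest magnitude maps to 127."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map INT8 codes back to approximate real values."""
    return [v * scale for v in q]


print(to_fp16(0.1))  # 0.0999755859375 (nearest half-precision value)

weights = [0.5, -1.27, 0.031, 1.0]  # made-up sample values
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
print(q, max(abs(a - b) for a, b in zip(weights, restored)))
```

Each INT8 value is off by at most half a quantization step (scale / 2), while using a quarter of the memory of FP32.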

6. TDP (Thermal Design Power) Analysis

- Power consumption differences:
  - Orin-X (50 W): suitable for low-power scenarios.
  - Thor-X (70-140 W): balances performance and power consumption.
  - Thor-X-Super (140-280 W): for high-performance tasks.
- Power consumption optimization directions:
  - Multi-phase power supply design.
  - Liquid cooling to relieve thermal bottlenecks.

7. Codex A9 and HIFI DSP Applications

- Codex A9:
  - Designed for audio and video decoding.
  - Supports HEVC, VP9, and other efficient decoding algorithms.
- HIFI DSP:
  - Focuses on audio signal processing.
  - Used in speech recognition, echo cancellation, and noise suppression.

8. Storage: LPDDR5 and Bandwidth Bit Width Relationship

- LPDDR5 features:
  - Data rate up to 6400 MT/s.
  - Lower power consumption than earlier LPDDR generations.
- Bandwidth and bus width:
  - Bandwidth (GB/s) = Data rate (MT/s) × Bus width (bits) / 8 / 1000.
  - Thor-X-Super's 512-bit bus doubles the bandwidth of a 256-bit bus at the same data rate, reaching 546 GB/s.
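The formula can be checked against the tables. The data rates are assumptions, because the tables give only bus widths and bandwidths. The sketch uses 6400 MT/s (the LPDDR5 maximum stated above) and 8533 MT/s for LPDDR5X, chosen because they reproduce the listed bandwidths.

```python
def dram_bandwidth_gbs(data_rate_mts: float, bus_width_bits: int) -> float:
    """Peak DRAM bandwidth in GB/s (decimal).

    MT/s * bits / 8 gives MB/s; dividing by 1000 converts to GB/s.
    """
    return data_rate_mts * bus_width_bits / 8 / 1000


# Assumed data rates paired with the bus widths from the tables
configs = {
    "Orin-X (LPDDR5, 256-bit)": (6400, 256),
    "Thor-X (LPDDR5X, 256-bit)": (8533, 256),
    "Thor-X-Super (LPDDR5X, 512-bit)": (8533, 512),
}
for name, (rate, width) in configs.items():
    print(f"{name}: {dram_bandwidth_gbs(rate, width):.1f} GB/s")
```

The results (about 204.8, 273.1, and 546.1 GB/s) round to the 205, 273, and 546 GB/s in the tables.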

9. Interface Analysis

- PCIe Gen4 vs Gen5:
  - Gen4: 16 GT/s per lane.
  - Gen5: 32 GT/s per lane, doubling the bandwidth.
- DP1.4 vs HDMI2.1:
  - DP1.4: 32.4 Gbps, supports 8K@60Hz (with Display Stream Compression).
  - HDMI2.1: 48 Gbps, supports higher refresh rates, suitable for high-end display devices.
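GT/s counts raw transfers per lane, so usable bandwidth also depends on the lane count and the line encoding. Gen3 through Gen5 use 128b/130b encoding. This sketch ignores packet (TLP/DLLP) overhead, so real throughput is somewhat lower, and the x16 link width is an example rather than a figure from the tables.

```python
def pcie_bandwidth_gbs(gt_per_s: float, lanes: int) -> float:
    """Approximate usable PCIe bandwidth per direction, in GB/s.

    128b/130b encoding means 128 of every 130 transferred bits carry data;
    dividing by 8 converts gigabits to gigabytes.
    """
    encoding_efficiency = 128 / 130
    return gt_per_s * encoding_efficiency * lanes / 8


for gen, rate in (("Gen4", 16.0), ("Gen5", 32.0)):
    print(f"{gen} x16: {pcie_bandwidth_gbs(rate, 16):.1f} GB/s per direction")
```

An x16 link gives roughly 31.5 GB/s per direction at Gen4 and 63 GB/s at Gen5.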

10. Manufacturing Process: 7nm vs 4nm

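- Differences:
  - 7nm: peak logic density of roughly 90 million transistors/mm² (TSMC N7).
  - 4nm: TSMC quotes about 1.8× N7's logic density for its N5 node, and N4 is a refinement of N5, so 4nm-class density is nearly twice that of 7nm.
- Comparison with a hair strand:
  - A human hair is about 100,000 nanometers (0.1 mm) wide.
  - Dividing 100,000 nm by 4 nm gives about 25,000. However, "4nm" is a node name rather than a physical feature size, and actual transistor pitches are tens of nanometers, so this only gives a rough sense of scale.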