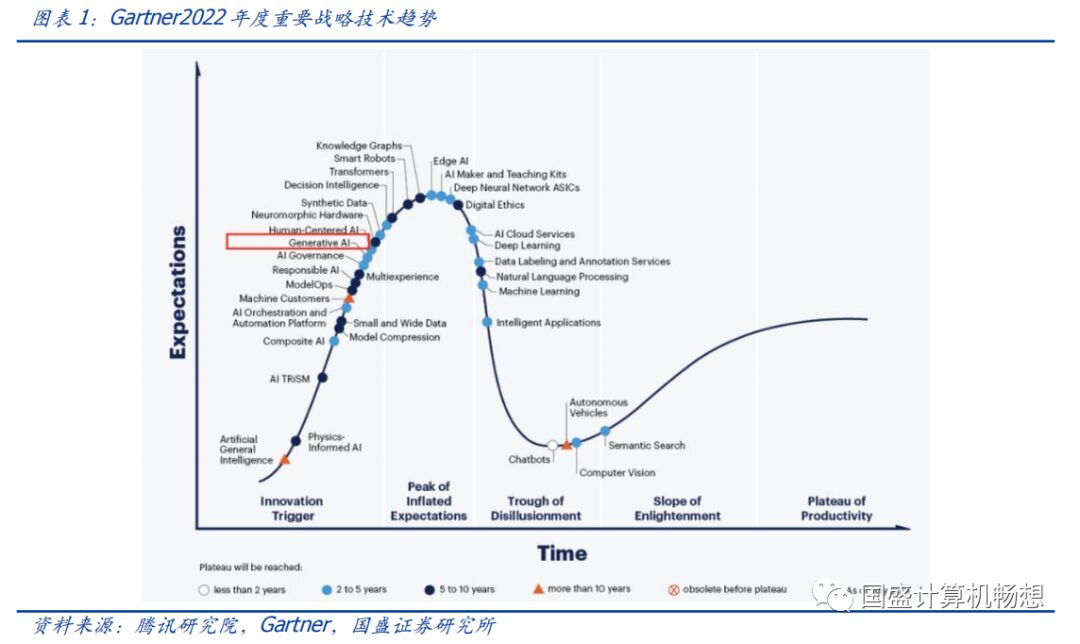

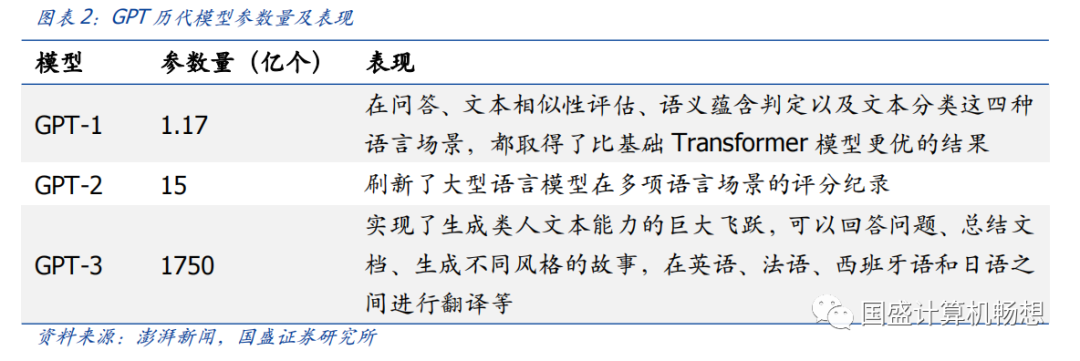

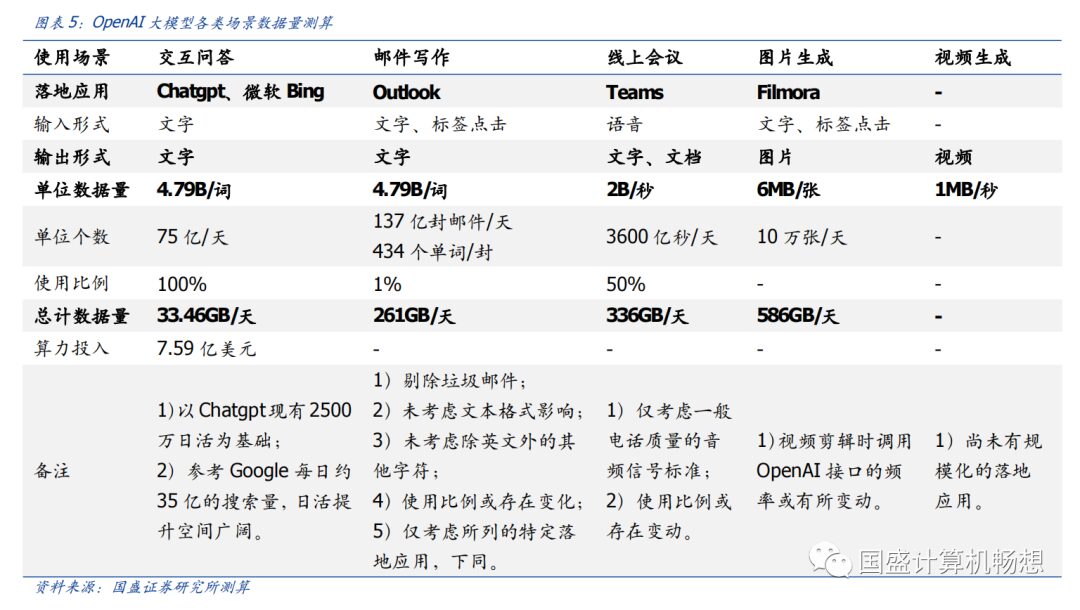

Computational demand from large models such as ChatGPT is expanding rapidly. 1) Parameter counts and data volumes of the large models represented by ChatGPT have grown significantly; GPT-3 reaches 175 billion parameters, and training it requires strong computational support. 2) Google currently handles about 3.5 billion searches per day; we believe ChatGPT’s daily active users have significant room to grow, and computational demand is likely to keep being released. 3) Going forward, under the trend toward multimodal applications, broader data forms, more application scenarios, and deeper user experiences will greatly increase the computational demand supporting artificial intelligence, ushering in an era of rapid expansion in computational power.

- Huawei’s Ascend 910 reaches 640 TOPS at integer precision and 320 TFLOPS at half precision, comparable to leading international products; its Atlas 300T training card is mainly used in scenarios requiring AI training and high-performance computing, such as telecommunications, internet, and finance.
- Haiguang’s Deep Computing No. 1 DCU has 60-64 computing units and up to 4096 cores, features strong parallel computing capabilities and high energy efficiency, and has achieved large-scale sales.
- Cambricon’s SiYuan 370 chip uses a 7nm process and chiplet technology, integrates 39 billion transistors, and delivers a maximum computational power of 256 TOPS (INT8).

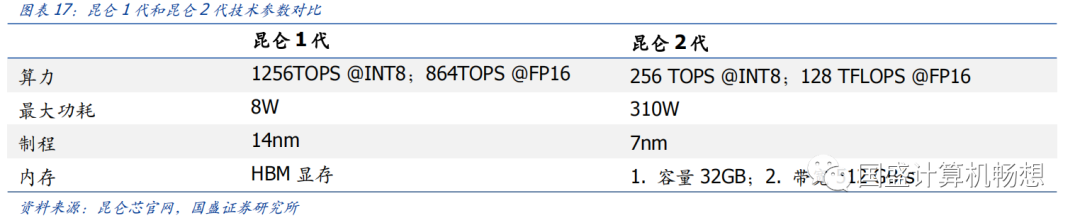

- Baidu’s Kunlun 2nd generation AI chip improves general computing core performance by 2-3 times, reaches 128 TFLOPS at half precision, and supports both training and inference.
- Jingjiawei’s GPU can be widely used in PCs, servers, graphic workstations, etc., meeting the display computing needs of geographic information systems, image matching, signal processing, and airborne, vehicle-mounted, and shipborne display control.

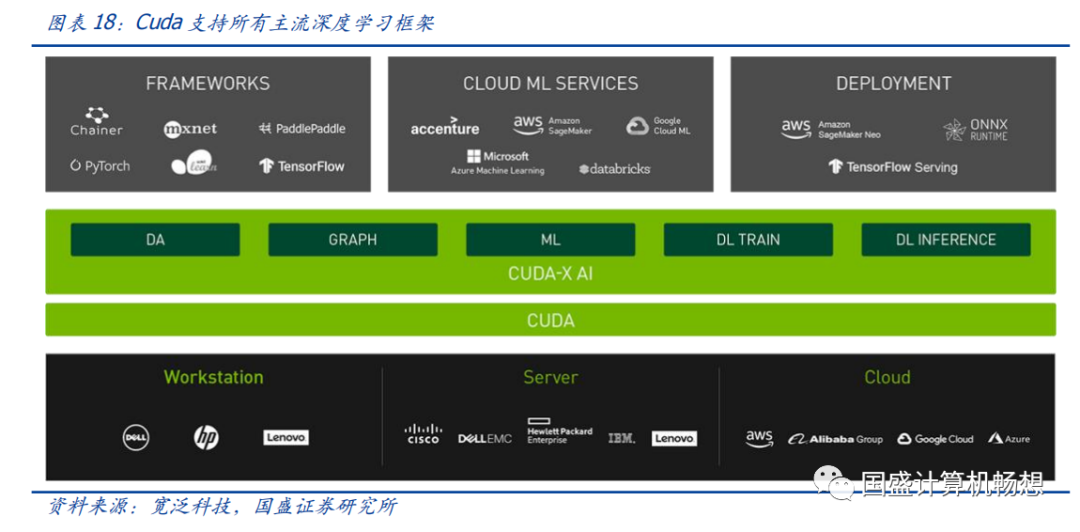

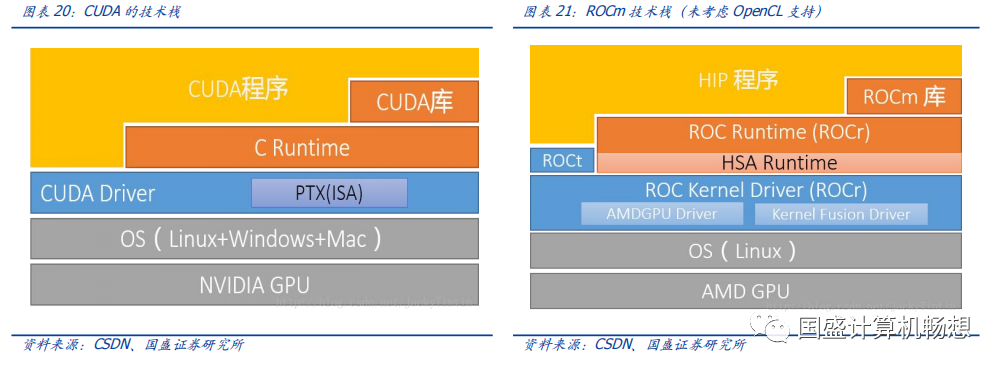

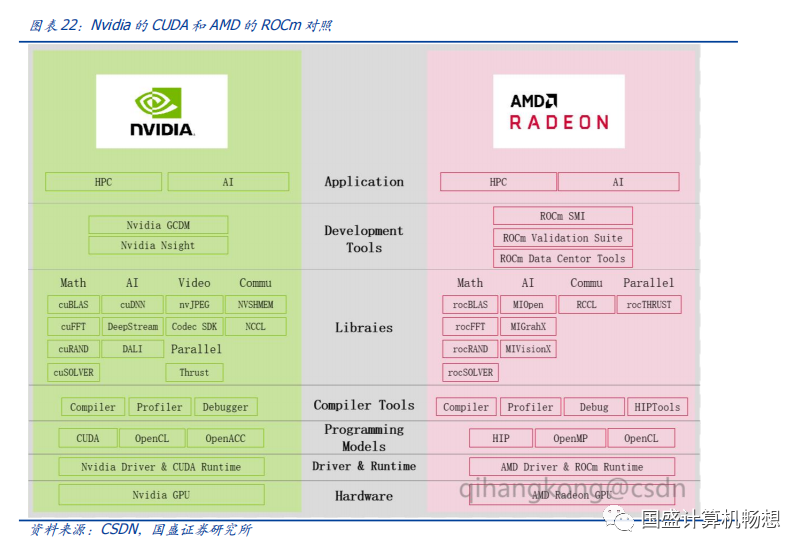

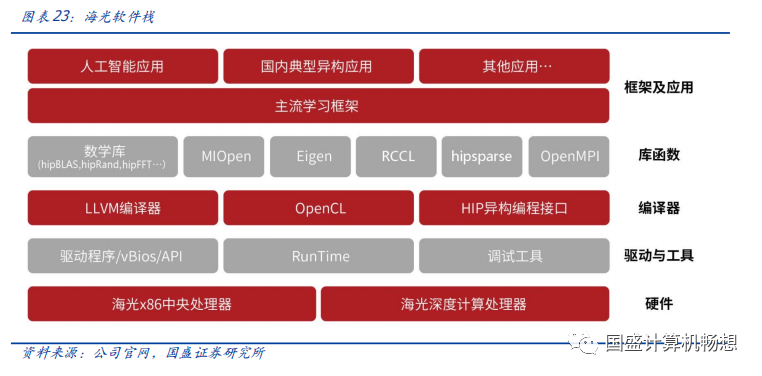

Haiguang ecosystem: The Haiguang DCU co-processor adapts well to NVIDIA’s CUDA ecosystem, which lowers development and migration difficulty and eases promotion pressure. It builds on a relatively complete AI toolchain ecosystem, making the most of existing mature AI algorithms and frameworks. Its CPUs and GPGPUs are also supported by mainstream manufacturers across the industry chain. We recommend paying attention to Haiguang Information, Zhongke Shuguang, etc.

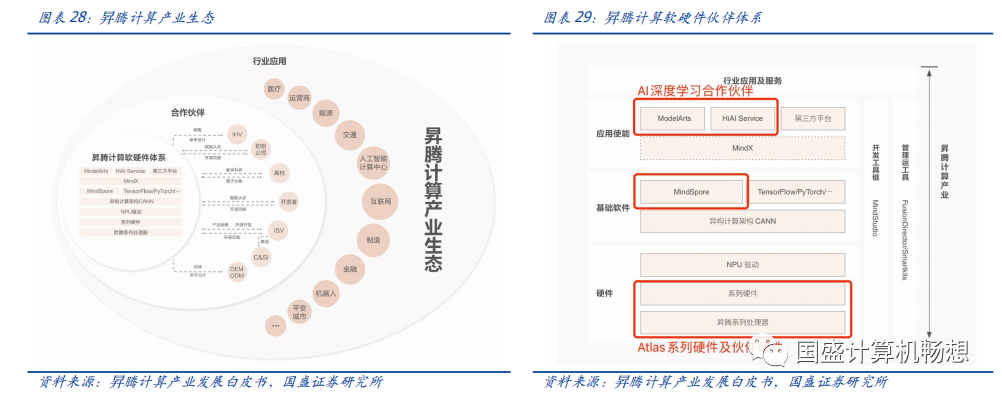

Ascend ecosystem: The Ascend computing industry ecosystem is built on the Ascend series processors and basic software, constructing a full-stack AI computing infrastructure, industry applications, and services. In terms of the software and hardware system, the Atlas hardware, MindSpore framework, and AI development platform form a complete cooperation system; in terms of complete machines, Digital China and Tuowei Information are among Huawei’s first partners in the Ascend computing field; in terms of industry applications, Beiming Software joined the Ascend Wanli Partner Program in 2022, clearly indicating comprehensive cooperation intentions in finance, internet, electricity, etc., and the Ascend computing industry ecosystem is gradually improving. We recommend focusing on Digital China, Tuowei Information, Changshan Beiming, etc.

With large models, parameter and data volumes are expanding rapidly, driving a sharp increase in computational demand. Within the large-model framework, the parameter count of each generation of GPT models has grown rapidly, and the demand for pre-training data is rising just as quickly. We believe the rapid penetration and application of ChatGPT will also significantly boost computational demand.

- NVIDIA A100: According to OneFlow, the NVIDIA A100 is currently the most cost-effective GPU choice on AWS.
- NVIDIA DGX A100 server: Each machine is equipped with 8 A100 GPUs, with AI computational performance of approximately 5 PetaFLOP/s and a maximum power of about 6.5 kW, priced at about 199,000 USD per machine.

- Daily Consultation Volume: According to Similarweb, the chat.openai.com website (the official ChatGPT website) attracted up to 25 million daily visitors during the week of 2023/1/27-2023/2/3. Assuming a stable state in which each user asks about 10 questions per day, the daily consultation volume is approximately 250 million.
- A100 Running Hours: Assuming each question averages 30 words and each word takes about 350 ms on an A100 GPU, about 729,167 A100 GPU running hours are needed per day (250 million × 30 × 0.35 s ÷ 3,600 s/h).
- A100 Demand: This corresponds to 729,167/24 ≈ 30,382 NVIDIA A100 GPUs running simultaneously around the clock to serve ChatGPT’s current traffic.
- Initial Computational Investment: Based on the NVIDIA DGX A100 server described above, this requires 30,382/8 ≈ 3,798 servers, or 3,798/7 ≈ 542 cabinets at 7 servers per cabinet. At about 1.4 million USD per cabinet (7 servers at about 199,000 USD each), the initial computational investment needed to serve ChatGPT’s current consultation volume is approximately 542 × 1.4 million ≈ 759 million USD.
- Electricity Costs: Each cabinet draws 45.5 kW (7 servers × 6.5 kW), so daily consumption is 542 × 45.5 kW × 24 h = 591,864 kWh/day. Referring to Hashrate Index statistics, we assume an average US industrial electricity price of about 0.08 USD/kWh, which gives a daily electricity cost of approximately 591,864 × 0.08 = 47,349 USD/day.
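The chain of estimates above can be retraced in a few lines of Python. This is only a sketch of the arithmetic, not the analysts' own model: the constant and function names are illustrative, the 7 servers per cabinet and the roughly 1.4 million USD per cabinet are read off the divisions and products quoted in the text, and the cabinet count is rounded down because that is what reproduces the quoted 542.

```python
import math

# Inputs quoted in the text; names are illustrative, not from the report.
DAILY_VISITORS = 25_000_000      # Similarweb daily visitors
QUESTIONS_PER_VISITOR = 10       # assumed questions per user per day
WORDS_PER_QUESTION = 30          # assumed average question length
MS_PER_WORD = 350                # assumed A100 time per word, in ms
GPUS_PER_SERVER = 8              # A100 GPUs per DGX A100
SERVERS_PER_CABINET = 7          # implied by 3,798/7 in the text
SERVER_POWER_KW = 6.5            # maximum power per DGX A100
CABINET_COST_USD = 1_400_000     # about 7 x 199,000 USD, rounded
PRICE_USD_PER_KWH = 0.08         # assumed US industrial electricity price


def estimate_inference_demand():
    """Retrace the report's estimates step by step."""
    daily_questions = DAILY_VISITORS * QUESTIONS_PER_VISITOR
    gpu_ms = daily_questions * WORDS_PER_QUESTION * MS_PER_WORD
    gpu_hours = gpu_ms / 3_600_000            # milliseconds -> hours
    gpus = math.ceil(gpu_hours / 24)          # GPUs running 24 h/day
    servers = math.ceil(gpus / GPUS_PER_SERVER)
    # Rounding down matches the text's 542; rounding up would give 543.
    cabinets = servers // SERVERS_PER_CABINET
    capex_usd = cabinets * CABINET_COST_USD
    kwh_per_day = cabinets * SERVERS_PER_CABINET * SERVER_POWER_KW * 24
    power_cost_usd = kwh_per_day * PRICE_USD_PER_KWH
    return {
        "daily_questions": daily_questions,
        "gpu_hours": gpu_hours,
        "gpus": gpus,
        "servers": servers,
        "cabinets": cabinets,
        "capex_usd": capex_usd,
        "kwh_per_day": kwh_per_day,
        "power_cost_usd": power_cost_usd,
    }


if __name__ == "__main__":
    for name, value in estimate_inference_demand().items():
        print(f"{name}: {value:,.0f}")
```

Because every step is linear in the inputs, changing a single assumption (for example the questions per user or the time per word) scales the GPU count and the costs proportionally, up to rounding.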


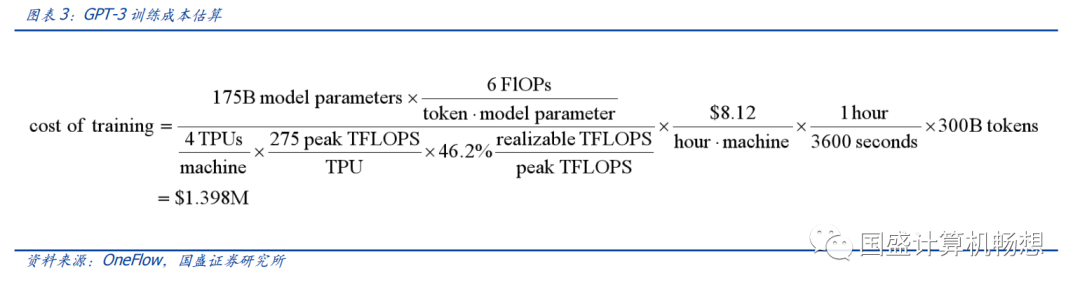

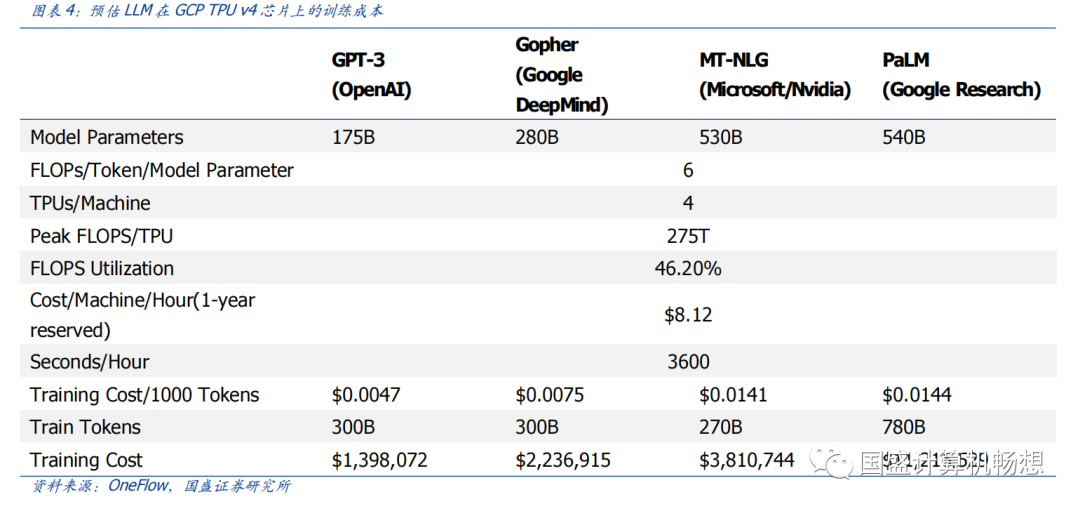

- The training cost per token is usually about 6N FLOPs (while the inference cost is about 2N FLOPs), where N is the number of parameters of the LLM.
- Assume that the model’s FLOPS utilization during training is 46.2%, consistent with the training of the PaLM model (540 billion parameters) on TPU v4 chips.
- According to OneFlow, training GPT-3 once costs approximately 1.398 million USD; for some larger LLMs (such as Gopher with 280 billion parameters and PaLM with 540 billion parameters), the same formula gives a training cost between 2 million and 12 million USD.
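The 6N-per-token rule and the utilization assumption combine into a simple compute estimate, sketched below. Only the parameter count and the 46.2% utilization come from the text. The 300 billion training tokens, the 312 TFLOPS A100 peak, and the hourly GPU price are assumptions added here for illustration, so the result is not expected to reproduce OneFlow's cost figure exactly.

```python
def training_flops(n_params: int, n_tokens: int) -> int:
    """Total training compute under the ~6N FLOPs-per-token rule."""
    return 6 * n_params * n_tokens


def training_gpu_hours(total_flops: float, peak_flops_per_s: float,
                       utilization: float) -> float:
    """GPU-hours needed at a given peak throughput and utilization."""
    effective_flops_per_s = peak_flops_per_s * utilization
    return total_flops / effective_flops_per_s / 3600


def training_cost_usd(gpu_hours: float, usd_per_gpu_hour: float) -> float:
    """Cost for a given hourly GPU price."""
    return gpu_hours * usd_per_gpu_hour


if __name__ == "__main__":
    GPT3_PARAMS = 175_000_000_000   # from the text
    UTILIZATION = 0.462             # from the text
    GPT3_TOKENS = 300_000_000_000   # ASSUMPTION: not stated in the text
    A100_PEAK_FLOPS = 312e12        # ASSUMPTION: dense FP16 peak per A100
    USD_PER_GPU_HOUR = 2.0          # HYPOTHETICAL price, illustration only

    flops = training_flops(GPT3_PARAMS, GPT3_TOKENS)
    hours = training_gpu_hours(flops, A100_PEAK_FLOPS, UTILIZATION)
    cost = training_cost_usd(hours, USD_PER_GPU_HOUR)
    print(f"training FLOPs: {flops:.3e}")
    print(f"A100 GPU-hours: {hours:,.0f}")
    print(f"cost at the hypothetical price: {cost:,.0f} USD")
```

Keeping the price as a separate parameter makes the sensitivity explicit: for a fixed model and token count, the estimated cost moves linearly with the hourly price and inversely with utilization.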


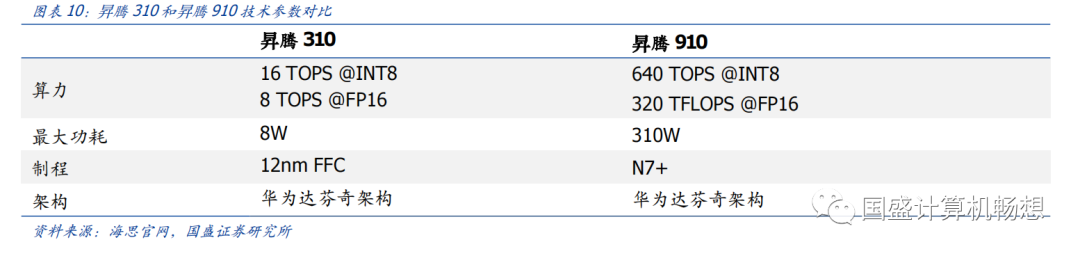

Huawei Ascend (Training + Inference): 1) Inference Card: The Ascend 310 chip is Huawei’s first full-stack, all-scenario AI chip. With a power consumption of only 8 W, it delivers 16 TOPS at integer precision (INT8) and 8 TFLOPS at half precision (FP16); its Atlas 300 inference card is widely used in scenarios such as smart cities, smart transportation, and smart finance. 2) Training Card: The Ascend 910 has a power consumption of 310 W and reaches 640 TOPS at integer precision (INT8) and 320 TFLOPS at half precision (FP16), comparable to leading international products; its Atlas 300T training card is mainly used in fields requiring AI training and high-performance computing, such as telecommunications, internet, and finance.

Haiguang Information (Training): The company’s main products include general processors (CPU) and Haiguang co-processors (DCU). The Haiguang DCU corresponds to the Haiguang 8000 series, which is an AI training chip designed and developed by Haiguang. The company started the design of the “Deep Computing No. 1” product in October 2018 and has achieved large-scale sales. This chip is equipped with 60-64 computing units, with a maximum of 4096 cores, featuring strong parallel computing capabilities and high energy efficiency, suitable for compute-intensive applications such as vector and matrix calculations. The Haiguang DCU is compatible with “CUDA-like” (ROCm) environments, with a rich software and hardware ecosystem, and can be widely used in big data processing, artificial intelligence, commercial computing, and other compute-intensive application fields. In January 2020, the company initiated the research and development of the second-generation DCU “Deep Computing No. 2”.


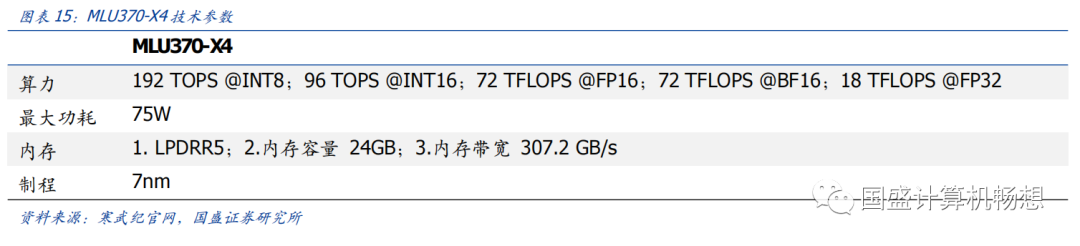

Cambricon (Training + Inference): 1) Integrated Training and Inference: The SiYuan 370 is a combined training and inference AI chip launched by Cambricon. It uses a 7nm process and chiplet technology, integrates 39 billion transistors, and delivers a maximum computational power of 256 TOPS (INT8), with twice the computational power and three times the memory bandwidth of the previous-generation SiYuan 270. 2) Inference Card: Cambricon’s SiYuan 270 is an inference chip capable of processing non-sparse AI models, with peak performance reaching 128 TOPS (INT8). The SiYuan 270 also supports multiple precisions, including INT4 and INT16, as well as floating-point and mixed-precision operations. It is suitable for a range of AI applications, including vision, speech, natural language processing, and machine learning. In addition, the SiYuan 290 is Cambricon’s first AI training chip; it integrates 46 billion transistors, uses HBM2 memory to provide the high memory bandwidth required for AI training, and offers vMLU technology to help customers achieve cloud virtualization and resource isolation.

Baidu Kunlun Chip (Training + Inference): 1) Inference Card: The first and second generation Kunlun AI chips are named the K series and R series respectively. The first generation Kunlun AI chip is a cloud inference chip supporting general AI algorithms. It reaches 256 TOPS at integer precision (INT8) and 64 TFLOPS at half precision (FP16), and thousands of units are deployed across Baidu’s search engine, Xiaodu, and other businesses, empowering industries such as internet, industrial manufacturing, smart finance, and smart transportation. 2) Integrated Training and Inference: Compared with the first generation, the Kunlun 2nd generation AI chip improves general computing core performance by 2-3 times and reaches 128 TFLOPS at half precision (FP16). It supports both training and inference, provides strong AI computational power for high-performance computing in data centers, and supports virtualization, inter-chip connectivity, and video encoding and decoding.

Jingjiawei (Inference): Jingjiawei is a leading enterprise in the domestic high-performance GPU field. The company began developing JM5400, the first domestic high-reliability, low-power GPU chip, in 2014; in 2018 it successfully developed the second generation high-reliability, high-performance GPU JM7200, which has been widely applied in the market; and by the end of 2021 it completed the upgrade to the third generation product, JH920. JH920 offers significantly improved performance over the previous two generations and is mainly used in mid-to-high-end graphic display, general computing, and embedded fields. It fully supports domestic CPUs, domestic operating systems, and domestic firmware, and can be widely applied in PCs, servers, graphic workstations, etc., meeting the display computing needs of geographic information systems, image matching, signal processing, and airborne, vehicle-mounted, and shipborne display control.

3.1 Software strengthens GPU competition barriers, improving the ecosystem becomes key to development.

3.2 Haiguang Ecosystem: Compatible with international mainstream computing ecosystems, with rich downstream applications.

- Zhongke Shuguang: As of the third quarter of 2022, Zhongke Shuguang held 27.96% of Haiguang Information’s shares. Zhongke Shuguang is a leading domestic server vendor, and its mature server solutions help Haiguang expand into industry markets.
- Other OEM customer support: Haiguang’s products are supported by many OEM customers such as New H3C and Lenovo, forming a comprehensive set of complete-machine examples that encourages subsequent customer purchases of the company’s products.
- Support for mainstream BIOS: The company’s products currently support mainstream BIOS manufacturers such as BaiAo, Kunlun, and Insyde.
- In April 2020, the company established the “Haiguang Industry Ecosystem Cooperation Organization”, abbreviated as the “Light and Harmony Organization”. It aims to unite upstream and downstream enterprises, universities, research institutions, and industry users around the domestic autonomous general computing platform to achieve collaborative technological breakthroughs and jointly create secure, user-friendly, and open products and solutions. Its activities include testing and certification, technical training, program incubation, application demonstration, and promotion and communication, with the goal of advancing the common development of its members and building an inclusive and prosperous information technology ecosystem.
- The Light and Harmony Organization has achieved remarkable results: it currently has over 1,000 members, over 500 certified manufacturers, and over 1,000 product certifications, and has established 10 regional branches and 15 adaptation centers.

3.3 Ascend Ecosystem: Building full-stack AI computing, with in-depth ecosystem partners.

- Digital China: In 2021, Digital China became one of Huawei’s first partners in Ascend computing. According to the company’s official WeChat account, its KunTai A722 inference server, built around “Kunpeng + Ascend”, provides 128 processing cores of computing power within a compact 2U space and supports up to 8 Huawei Atlas 300 inference cards, providing 256GB of inference cache and up to 704 TOPS of INT8 AI computational power.
- Tuowei Information: In 2021, the company became one of the first Ascend partners. In April 2022, its Zhaohan RA2300-A series inference server, developed on Ascend processors, completed compatibility tests with Huawei’s Atlas 300I Pro inference card and Atlas 300V Pro video parsing card; it can carry up to 8 Atlas 300V Pro video parsing cards or Atlas 300I Pro inference cards.

- The heterogeneous computing architecture CANN, together with the corresponding drivers, runtime, acceleration libraries, compilers, debugging and tuning tools, development toolchains such as MindStudio, and various operation and maintenance management tools, is open to a wide range of developers and customers.
- AI computing frameworks, including the open-source MindSpore and other popular industry frameworks, form an organic part of the ecosystem. MindSpore’s partners include Pengcheng Laboratory, Shenzhen Bay Laboratory, Peking University, Tsinghua University, Harbin Institute of Technology, Douyu, etc.
- AI development platforms include ModelArts, HiAI Service, etc., with partners including Fourth Paradigm, Yitong Technology, Zhongke Hongyun, etc.
- Changshan Beiming: According to the official account of its wholly-owned subsidiary Beiming Software, in 2021 Beiming Software signed a contract with Nanjing Jiangbei New District to assist Huawei and Jiangbei New District in building the Nanjing Ascend AI Computing Center. In 2022, Beiming Software officially joined the Ascend Wanli Partner Program as an Ascend application software partner, clearly indicating comprehensive cooperation intentions in finance, internet, electricity, etc. With Huawei’s leadership and the collaboration of its ecosystem partners, the Ascend industry ecosystem is gradually improving.

Risk that AI technology iteration falls short of expectations: If AI technology iteration falls short of expectations and NLP technology does not achieve a breakthrough in understanding human intent, companies along the industry chain would be adversely affected.

For detailed analysis, please refer to the report published on March 5, 2023, titled “Overview of Domestic AI Computing Power Ecosystem”.

Analyst Liu Gaochang Analyst ID S0680518090001

Research Assistant Sun Xingzhen Analyst ID S0680122020018


Important Statement: This subscription number is established by the computer team of Guosheng Securities. This subscription number is not a platform for publishing research reports from the Guosheng computer team. The information contained in this subscription number is only aimed at professional investment institutions and is only for timely communication of research views in the context of new media. The information contained in this subscription number is all excerpted from research reports that have been published by the Guosheng Securities Research Institute or are subsequent interpretations of published reports. In case of ambiguity arising from the excerpt from the report, the complete content of the report on the day of publication shall prevail. This material only represents the judgment on the day of the report’s release, and relevant analytical opinions and speculations may change without notice. Readers should also keep track of the latest research progress in a timely manner.

This material does not constitute a judgment or investment advice regarding specific securities at specific price points, specific times, or specific market performances. It cannot be equated with operational opinions guiding specific investments. Ordinary individual investors using this material may misunderstand key assumptions, ratings, target prices, etc., in the report due to a lack of interpretation services, thereby causing investment losses. Therefore, individual investors should seek guidance from professional investment advisors. This material is for reference only, and recipients should not rely solely on the information in this material to replace their independent judgment, and should make their own investment decisions and bear investment risks.

Copyright, reproduction or dissemination without permission is prohibited.