In July 2024, a paper from the University of Hong Kong and UCSD titled “Bunny-VisionPro: Real-Time Bimanual Dexterous Teleoperation for Imitation Learning” was published.

Teleoperation is an important tool for collecting human demonstrations, but controlling a robot with two dexterous hands remains a challenge. Existing teleoperation systems struggle with the complexity of coordinating two hands for intricate tasks. Bunny-VisionPro is a real-time bimanual dexterous teleoperation system that uses a VR headset. Unlike previous vision-based teleoperation systems, it includes low-cost devices that give the operator haptic feedback, enhancing immersion. The system prioritizes safety by combining collision and singularity avoidance while maintaining real-time performance through its design. Bunny-VisionPro outperforms previous systems on a standard task suite, achieving higher success rates and shorter task completion times. In addition, the high-quality teleoperation demonstrations improve downstream imitation learning performance, leading to better generalization. Notably, Bunny-VisionPro enables imitation learning on challenging multi-stage, long-horizon dexterous manipulation tasks, which have rarely been addressed in previous work. Because it handles bimanual operation while prioritizing safety and real-time performance, the system is a powerful tool for advancing dexterous manipulation and imitation learning.

Playing virtual reality (VR) games is an immersive and intuitive experience, where hand and arm movements seamlessly translate into the actions of virtual characters. Now, imagine controlling a dual-hand robot in the real world with the same ease: operators guide the robot’s movements using their own actions, just like in a VR game. This paradigm shift in robotic teleoperation opens up exciting possibilities for more intuitive and accessible human-machine interaction. Recent advancements in VR technology, such as the Apple Vision Pro, make this concept feasible.

However, turning this concept into a practical teleoperation system faces significant challenges, because human-like manipulation requires complex movements. For high degree-of-freedom (DoF) bimanual systems, the complexity is magnified further, as operators must coordinate both arms and both hands on tasks that require spatiotemporal synchronization. Responsive control is crucial, since latency can lead to inaccurate robot movements [1]. Moreover, ensuring safety by mitigating risks such as collisions and singularities adds another layer of complexity [2].

Teleoperation with Grippers. Classic teleoperation methods can be categorized into two main approaches based on their control objectives. The first approach, exemplified by ALOHA [5, 6, 7], uses joint space mapping in a master-slave setup [8]. Although this method can achieve impressive bimanual operation, it is robot-specific and requires motion equivalence between the master and slave robots [9]. It also places the burden of managing collisions and singularities on the human operator. The second approach prioritizes end-effector control [10], using inverse kinematics to compute the arm joint positions. Various input devices have been used, such as motion capture systems [11, 12], inertial sensors [13], and VR controllers [14, 15, 16, 17]. However, these systems often employ simple 1 or 2 DoF grippers, limiting their flexibility.

Dexterous Teleoperation. Dexterous teleoperation is challenging because it involves high degrees of freedom and complex kinematics. Glove-based systems [18, 19, 20, 21], such as the MANUS gloves used by Tesla Bot [22], can track the operator’s finger movements but are expensive and must fit specific hand sizes. Recent vision-based approaches, such as AnyTeleop [4], achieve dexterous hand-arm teleoperation using cameras [3, 23, 24, 25] or VR headsets [26, 27, 28]. However, AnyTeleop relies on heavy GPU computation to compute arm movements and is primarily designed for single-arm operation. A concurrent work [29] uses controller buttons to drive a lower-DoF Ability hand, sacrificing finger dexterity to avoid retargeting latency.

Imitation Learning from Demonstration. Imitation learning enables robots to mimic human behavior from expert demonstrations. Pioneering deep-learning research [30, 31, 32, 33] has developed policies that generate robot control commands from images [5, 34, 35, 36, 37, 38] and point clouds [39, 40, 41, 42], with further progress on bimanual systems [18, 5, 6, 43]. Recent studies have also incorporated tactile data [44, 29] to enrich the sensory input available for robot learning. Collecting demonstrations is labor-intensive but crucial for effective imitation learning.

Figure: Overview of the Bunny-VisionPro system and task suite. (a) Hand poses captured by Apple Vision Pro are converted into robot motion control commands for real-time teleoperation. The robot returns visual feedback through the Vision Pro and haptic feedback through actuator-equipped finger sleeves worn by the operator. (b) A range of short-horizon tasks (left column) and long-horizon tasks (right column) is designed to assess teleoperation performance and its use in imitation learning.

Bunny-VisionPro is a modular teleoperation system that uses the hand and wrist tracking capabilities of VR headsets to control high-DoF bimanual robots, as shown in the figure. The system consists of three decoupled components: hand motion retargeting, arm motion control, and human haptic feedback.

The hand motion retargeting module maps the operator’s finger poses to the robot’s dexterous hand, enabling intuitive dexterous manipulation. Meanwhile, the arm motion control module computes the joint angles of the robot’s arms from the operator’s wrist poses, taking collision avoidance and singularity handling into account. The human haptic feedback module converts the tactile readings from sensors mounted on the robot’s hand into drive signals for wearable eccentric rotating mass (ERM) actuators on the operator’s hand. This provides real-time haptic feedback, enhancing the operator’s sense of presence and allowing more precise manipulation.

During teleoperation, coordinating the bimanual robot also requires maintaining the distance between the two robot hands to match the distance between the two operator hands, ensuring natural and coordinated movements. However, the initial distance between the robot hands may not match the distance of the human hands. To address this issue, several initialization modes are designed to dynamically align the robot’s hands with the operator’s hands when the robot begins to move. Different tasks may benefit from different initialization modes. This step creates a consistent starting point, making the execution of bimanual tasks throughout the teleoperation process intuitive and efficient.

For communication, the hand-tracking results from the Vision Pro are transmitted to the computer via [45]. The modular architecture scales better and lets each module run in a separate computational process, which prevents delays from accumulating across the system and keeps control real-time.

Effective human manipulation relies on the integration of visual and haptic feedback. However, many vision-based teleoperation systems [4, 23, 26, 28, 3] neglect haptic feedback. To address this limitation, this paper develops an economical haptic feedback system using ERM actuators (as shown in Figure (a)). This system first processes tactile signals from the robot’s hand (Step I), then drives vibration motors to simulate haptics (Step II). Despite its low cost, the system enables operators to perceive and respond to their environment more intuitively, providing a more immersive experience that enhances manipulation performance.

Step I: Haptic Signal Processing. The Ability hand (as shown in Figure (a)) uses force-sensitive resistors (FSR) to measure finger pressure. However, FSR sensors have issues with inaccuracy and zero drift [53, 54], exacerbated by the deformable wrapping material. To resolve this, baseline FSR readings at individual joint positions are recorded and subtracted from the real-time readings during operation to achieve zero-drift calibration. This effective calibration can be automatically performed each time teleoperation is initiated. Additionally, a low-pass filter is applied to reduce noise and smooth the haptic data. As shown in Figure (b), these signal processing techniques significantly improve the quality and reliability of haptic feedback.
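The calibration and filtering in Step I can be sketched as follows. This is a minimal illustration, not the paper's implementation: the class name `FsrPreprocessor`, the smoothing factor `alpha`, and the choice of a first-order exponential filter are assumptions, and the sketch uses a single resting baseline per sensor, whereas the text records baselines at individual joint positions.

```python
from typing import List, Optional


class FsrPreprocessor:
    """Zero-drift calibration followed by a first-order low-pass filter (sketch)."""

    def __init__(self, num_sensors: int, alpha: float = 0.2) -> None:
        self.alpha = alpha                        # assumed smoothing factor in (0, 1]
        self.baseline = [0.0] * num_sensors       # per-sensor resting reading
        self.state: Optional[List[float]] = None  # filter memory

    def calibrate(self, resting_samples: List[List[float]]) -> None:
        # resting_samples: rows of readings taken with no contact,
        # e.g. each time teleoperation is started.
        n = len(resting_samples)
        self.baseline = [sum(col) / n for col in zip(*resting_samples)]
        self.state = None

    def update(self, raw: List[float]) -> List[float]:
        # Subtract the baseline; negative residuals are treated as no contact.
        corrected = [max(r - b, 0.0) for r, b in zip(raw, self.baseline)]
        if self.state is None:
            self.state = corrected
        else:
            a = self.alpha
            # Exponential moving average: y[t] = a * x[t] + (1 - a) * y[t-1]
            self.state = [a * c + (1.0 - a) * s
                          for c, s in zip(corrected, self.state)]
        return self.state
```

A smaller `alpha` smooths more aggressively at the cost of added lag, which matters for feedback that is meant to feel immediate.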

Step II: Vibration Motor Driving. In this step, the processed haptic signals are converted into vibrations for the ERM actuators using an ELEGOO UNO board. Since ERM motors require a constant input voltage, pulse-width modulation (PWM) is used to control the ERM vibration intensity by modulating the pulse width. To further enhance the stability of the haptic signals and the robustness of the system, a bipolar junction transistor (BJT) is added between each ERM actuator and its corresponding PWM pin. This ensures that the haptic feedback of each ERM motor is adjusted individually, activated only during the pulse width, and able to resist circuit noise.

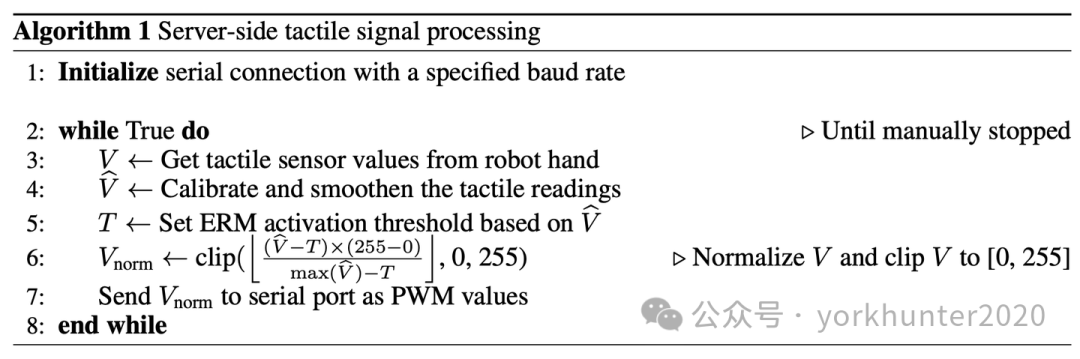

Implementing haptic feedback can be divided into two parts: server-side signal processing and Arduino-end motor control.

Server-Side Signal Processing. As described in Algorithm 1, the server side involves several stages of signal processing. First, the haptic readings are calibrated, filtered, and normalized. The processed signals are then converted into valid PWM values and transmitted to the ELEGOO UNO board. This ensures that the haptic data is accurately prepared for motor activation, contributing to precise control of haptic feedback.
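The last two server-side stages, normalization and conversion to PWM values, might look like the sketch below. The 0 to 255 range matches the 8-bit duty cycle used by `analogWrite` on UNO-class boards; the `full_scale` parameter, the function names, and the comma-separated message format are assumptions for illustration and are not taken from Algorithm 1.

```python
from typing import List

PWM_MAX = 255  # 8-bit duty-cycle range on UNO-class boards


def to_pwm(filtered: List[float], full_scale: float) -> List[int]:
    """Normalize filtered readings to [0, 1] and scale to PWM values.

    full_scale is a hypothetical reading that should map to full vibration.
    """
    pwm = []
    for value in filtered:
        level = min(max(value / full_scale, 0.0), 1.0)  # clamp to [0, 1]
        pwm.append(int(round(level * PWM_MAX)))
    return pwm


def encode_message(pwm: List[int]) -> bytes:
    # Assumed wire format: comma-separated integers, newline-terminated.
    return (",".join(str(v) for v in pwm) + "\n").encode("ascii")
```

The resulting bytes would then be written to the board's serial port by whatever serial library the server uses.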

Arduino-End Motor Control. Algorithm 2 gives the pseudocode for Arduino-end motor control. This component parses the PWM values received from the server over serial communication. After parsing, the values are written directly to the PWM pins on the board, thereby controlling the ERM motors. This arrangement allows the Arduino to adjust the intensity of haptic feedback dynamically based on inputs from the server, so that the haptic responses are timely and contextually appropriate.
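The parsing logic on the board can be modeled as below. The sketch is written in Python for consistency with the other examples here; on the actual board the same steps would be written in Arduino C++, with each value passed to `analogWrite(pin, value)`. The comma-separated message format, the function names, and the pin list are assumptions, not details from Algorithm 2.

```python
from typing import Dict, List


def parse_pwm_line(line: bytes, num_motors: int) -> List[int]:
    """Parse one newline-terminated serial message into clamped PWM values."""
    fields = line.decode("ascii").strip().split(",")
    if len(fields) != num_motors:
        raise ValueError(f"expected {num_motors} values, got {len(fields)}")
    # Clamp to the valid 8-bit duty-cycle range before driving the motors.
    return [min(max(int(f), 0), 255) for f in fields]


def apply_to_pins(values: List[int], pins: List[int]) -> Dict[int, int]:
    # Stand-in for the hardware write: returns the pin -> duty-cycle mapping
    # that the board would apply with analogWrite(pin, value).
    return dict(zip(pins, values))
```

Rejecting messages with the wrong number of fields keeps a corrupted serial line from driving the wrong motor.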

The bimanual dexterous system consists of two UFactory xArm-7 robotic arms, each equipped with a 6 DoF Ability hand, forming a 26 DoF system (2 × (7 + 6)). Each hand integrates 30 tactile sensors distributed over five fingertips. For demonstration collection, two RealSense L515 cameras are positioned in front of and above the robot’s workspace to capture sufficient visual observations.

To evaluate, from the perspective of imitation learning, the quality of demonstrations collected with AnyTeleop+ and with Bunny-VisionPro, several popular methods were trained (ACT [5], diffusion policy [34], and DP3 [40]) and their generalization to unseen scenes was tested. The usefulness of tactile data in imitation learning was also investigated. Although the haptic feedback system proved effective, it was not used when collecting the demonstration set because it was designed after data collection was completed.

Tactile signals from the fingertips of the Ability hand are integrated into the imitation learning framework to evaluate their effectiveness under various conditions. For data collection, raw readings from the FSR sensors, which measure the contact pressure between the robot hand and the object during manipulation, undergo the same zero-drift calibration and low-pass filtering as in the haptic feedback processing. These signals can additionally be differentiated over time to compute force impulses. For tactile data representation, the touch signals are represented as vectors, treated as components of the robot’s state, and encoded using a multi-layer perceptron (MLP). In addition, since touch signals mark contact points between the robot and the object, these points are represented as a set of virtual points and concatenated with the point cloud from the camera, enriching the policy’s visual embedding in the observation space. To highlight active signals, an additional boolean dimension indicates when a tactile signal exceeds a predefined threshold: 500 for force data and 50 for impulse data. This representation is inspired by and adapted from DexPoint [55].
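A minimal sketch of these tactile features follows. The thresholds of 500 and 50 come from the text; everything else is an assumption made for illustration, including the function names, the use of a per-step difference as the time derivative, the `(x, y, z, active)` point layout, and the convention that camera points carry a flag of 0.

```python
from typing import List, Sequence, Tuple

FORCE_THRESHOLD = 500.0    # threshold on force data, from the text
IMPULSE_THRESHOLD = 50.0   # threshold on impulse data, from the text

Point4 = Tuple[float, float, float, float]  # assumed layout: (x, y, z, active)


def force_impulse(prev: Sequence[float], curr: Sequence[float]) -> List[float]:
    # Per-step change in force, used here as a discrete time derivative.
    return [c - p for p, c in zip(prev, curr)]


def active_flags(values: Sequence[float], threshold: float) -> List[bool]:
    # Extra boolean dimension: True where the signal exceeds the threshold.
    return [v > threshold for v in values]


def tactile_points(fingertips: Sequence[Tuple[float, float, float]],
                   forces: Sequence[float]) -> List[Point4]:
    # One virtual point per fingertip, tagged with its active flag.
    flags = active_flags(forces, FORCE_THRESHOLD)
    return [(x, y, z, 1.0 if f else 0.0)
            for (x, y, z), f in zip(fingertips, flags)]


def augment_cloud(camera_points: Sequence[Tuple[float, float, float]],
                  virtual_points: Sequence[Point4]) -> List[Point4]:
    # Camera points get an inactive flag so every point has the same layout.
    return [(x, y, z, 0.0) for x, y, z in camera_points] + list(virtual_points)
```

Keeping the flag as an extra channel lets a point-cloud encoder distinguish contact points from ordinary scene geometry without a separate input branch.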

The teleoperation system assists in mapping human movements to robotic actions. For instance, moving the right hand forward by 0.1 meters should cause the robot’s right end effector to produce equivalent motion. To ensure that human movements are accurately translated into robotic actions in three-dimensional space, a coordinate system must be defined and synchronized for the human operator and the robot during the initialization step. This phase involves establishing a 3D framework for both systems, referred to as the initial human frame and the initial robot frame. The human operator’s movements relative to the initial human frame are then measured, and the robot replicates these movements within its own initial robot frame.
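The frame synchronization can be illustrated with a position-only sketch. It assumes each initial frame is given as an origin plus a 3×3 rotation matrix whose columns are the frame's axes in that sensor's world coordinates; the function and parameter names are hypothetical, and orientation would be mapped analogously.

```python
from typing import List, Sequence

Vec = Sequence[float]
Mat = Sequence[Sequence[float]]


def mat_vec(m: Mat, v: Vec) -> List[float]:
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]


def transpose(m: Mat) -> List[List[float]]:
    return [[m[j][i] for j in range(3)] for i in range(3)]


def map_position(hand_now: Vec,
                 human_origin: Vec, human_rot: Mat,
                 robot_origin: Vec, robot_rot: Mat) -> List[float]:
    """Map a tracked hand position to a robot end-effector target."""
    # Displacement of the hand from the initial human frame origin.
    delta_world = [a - b for a, b in zip(hand_now, human_origin)]
    # Express that displacement in the initial human frame's own axes.
    delta_local = mat_vec(transpose(human_rot), delta_world)
    # Replay the same displacement inside the initial robot frame.
    offset = mat_vec(robot_rot, delta_local)
    return [o + d for o, d in zip(robot_origin, offset)]
```

For example, if the initial human frame is rotated 90° about the vertical axis relative to the tracker, a 0.1 m motion along the human frame's forward axis still produces a 0.1 m motion along the robot frame's forward axis.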

Bimanual teleoperation requires careful consideration of the relative positioning of the operator’s two hands, which is crucial for tasks that require complex bimanual coordination. Given that the spacing between the robot’s hands may differ from that of humans due to specific hardware configurations, it is essential to design adaptive initialization modes that can dynamically align the robot’s hands with the operator’s hands.

This paper implements ACT [5], diffusion policy [34], and 3D diffusion policy [40] to rigorously evaluate the quality of demonstrations collected by the system. To strengthen the assessment, generalization experiments are designed that vary the spatial locations of objects in each task and test interaction with unseen objects.

Learning Algorithms. For the diffusion policy, multi-view images are used as visual input with a horizon of 8, comprising 2 observation steps and 6 action steps. The model was trained for 300 epochs with a batch size of 64. For the 3D diffusion policy, multi-view point clouds are used as input, with the same horizon settings as the diffusion policy. The model was trained for 500 epochs with a batch size of 64. For ACT, multi-view images are used, with the action chunk size set to 20, and training ran for 3000 epochs.
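The stated hyperparameters can be collected in one place as a small configuration record. The class and field names below are hypothetical and do not correspond to the configuration files of any of the three codebases; values the text does not state, such as the batch size for ACT, are left as `None` rather than guessed. The `chunk_size` field holds the ACT setting of 20 given above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PolicyConfig:
    visual_input: str                     # "images" or "point_clouds"
    epochs: int
    batch_size: Optional[int] = None      # None: not stated in the text
    n_obs_steps: Optional[int] = None
    n_action_steps: Optional[int] = None
    chunk_size: Optional[int] = None      # ACT only

    @property
    def horizon(self) -> Optional[int]:
        # The 8-step window is the sum of observation and action steps.
        if self.n_obs_steps is None or self.n_action_steps is None:
            return None
        return self.n_obs_steps + self.n_action_steps


CONFIGS = {
    "diffusion_policy": PolicyConfig("images", 300, 64, 2, 6),
    "dp3": PolicyConfig("point_clouds", 500, 64, 2, 6),
    "act": PolicyConfig("images", 3000, chunk_size=20),
}
```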

Generalization Evaluation Design. For generalization experiments involving different spatial locations and new objects not present in the demonstrations, the design of each task is detailed in the figure, where the blue box indicates the spatial range over which object positions are varied, and the green box marks objects unseen in the demonstrations.

This spatial range covers a large part of the workspace, and the unseen objects vary in size, color, and shape, providing a comprehensive assessment of the robustness of policies learned from the demonstrations.